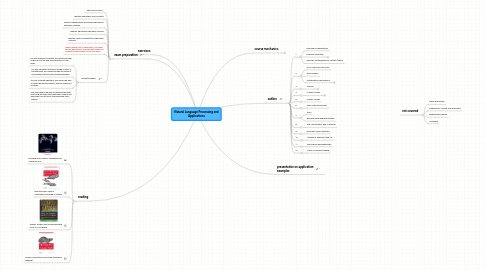

1. exam preparation

1.1. after each lecture...

1.2. identify definitions and concepts

1.3. identify mathematical proofs and derivations that were covered

1.4. identify algorithms that were covered

1.5. identify major concepts/tools that were covered

1.6. make a written list of the definitions, concepts, proofs, derivations, and algorithms that you studied and bring it to the oral exam

1.7. during the exam

1.7.1. For any definition/concept, you should be able to give a concise and clear definition in the exam.

1.7.2. For any derivation that we covered in class or the exercises, you should be able to repeat it in the exam and also solve similar problems.

1.7.3. For any covered algorithm, you should be able to state the inputs/outputs, and the sequence of steps.

1.7.4. You also need to be able to demonstrate that you know the tools used in the exercises (UNIX/POSIX command-line tools, Python).

2. reading

2.1. Manning and Schütze: Foundations of Statistical Natural Language Processing

2.2. Bird, Klein, and Loper: Natural Language Processing with Python

2.3. Perkins: Python Text Processing with NLTK 2.0 Cookbook

2.4. Russell: Mining the Social Web (additional reading)

3. exercises

3.1. required for taking the oral exam

3.2. bring the completed exercises with you to the oral exam (otherwise you can't take the exam)

3.3. Exercises are mostly in Python, with some theory.

3.4. Do your exercises in IPython notebooks and hand them in.

3.5. Many exercises will give you a Python notebook as a starting point.

4. outline

4.1. 1

4.1.1. overview of applications

4.1.2. linguistic essentials

4.1.2.1. history of language

4.1.2.2. language variation

4.1.2.3. basic grammatical concepts

4.1.3. exercise: review grammar, refresh Python

4.2. 2

4.2.1. UNIX command line tools

4.2.2. some Python

4.2.3. mathematical foundations

4.2.3.1. probability theory

4.2.3.2. common distributions

4.2.3.3. Bayesian methods

4.2.3.4. information theory

4.3. 3

4.3.1. corpora

4.3.1.1. what are corpora and how are they used?

4.3.1.2. what corpora are available?

4.3.1.3. tokenization, lemmatization, stemming

4.3.1.4. markup, tagging

4.4. 4

4.4.1. n-gram models

4.4.1.1. collocations

4.4.1.2. statistical models

4.4.1.3. sparsity, smoothing, back-off

4.4.1.4. cross-validation and testing

4.5. 5

4.5.1. Markov models

4.6. 6

4.6.1. finite state transducers

4.7. 7

4.7.1. CRFs

4.7.2. discriminative language models

4.8. 8

4.8.1. text classification and clustering

4.9. 9

4.9.1. recurrent neural networks

4.10. 10

4.10.1. statistical alignment and MT

4.11. 11

4.11.1. word sense disambiguation

4.12. 12

4.12.1. part-of-speech tagging

5. not covered

5.1. lexical acquisition

5.2. probabilistic context-free grammars

5.3. probabilistic parsing

5.4. clustering

6. course mechanics

6.1. lectures: Room 48-462, Wednesdays, 1:45-3:15 pm

6.2. exercises: TBD

6.3. exam: oral

6.3.1. make appointments with secretary@iupr.com

6.4. office hours

6.4.1. course assistants

6.4.1.1. TBD

6.4.2. professor

6.4.2.1. make appointment with secretary@iupr.com