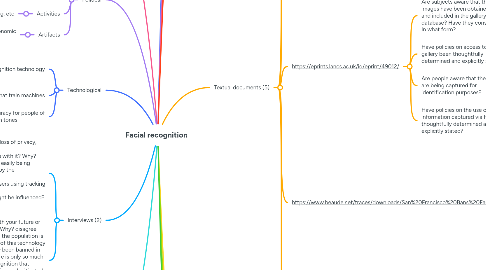

1. Professional/Epistemological

1.1. Stakeholders

1.1.1. Law enforcement

1.1.2. Medical

1.1.3. Homeless shelters

1.2. Artifacts

1.2.1. Security cameras

1.2.2. Driver's license

1.3. Activities

1.3.1. Airport checks

2. Political

2.1. Stakeholders

2.1.1. Lawmakers

2.1.2. Proponents of surveillance

2.1.3. Citizens with privacy concerns

2.2. Activities

2.2.1. Lobbying, voting, etc.

2.3. Artifacts

2.3.1. Companies that hold economic power

3. Technological

3.1. facial recognition technology

3.1.1. biometric data

3.1.2. smartphones

3.1.2.1. apps

3.2. datasets that train machines

3.3. camera accuracy for people of different skin tones

4. Interviews (2)

4.1. Interviewee 1

4.1.1. What does it make them think about? The loss of privacy and capitalism's hold on society.

4.1.2. Do they agree with your future or disagree with it? Why? Agree, because I can see facial recognition easily being abused, especially for money and control by the government.

4.1.3. What can they relate to the most? Advertisers using tracking to enhance personalized ads.

4.1.4. What other stakeholders do they think might be influenced? The government.

4.2. Interviewee 2

4.2.1. Do they agree with your future or disagree with it? Why? Disagree, because much of the population is already skeptical of this technology, it has already been banned in one city, and only a limited amount of the bias in facial recognition cannot be identified and mitigated.

4.2.2. What other stakeholders do they think might be influenced? Governors, mayors, citizens, parents, lawyers.

5. Metaphors (3)

5.1. Facial recognition is like a collar

5.1.1. highlights the dehumanizing element of tracking identity, excludes the possibility of informed consent

5.2. Facial recognition is like a name tag

5.2.1. highlights assurance that you are safe and recognized, excludes the lack of autonomy when you are identified by others rather than identifying yourself

5.3. Facial recognition is like a fingerprint

5.3.1. highlights the fact that it can be used to bring criminals to justice, excludes the fact that it may wrongly identify similar-looking individuals, whereas fingerprints are unique

6. Economic

6.1. Incentive for companies to keep algorithms private

6.2. Facial data can be sold and analyzed

6.2.1. Potentially leads to targeted advertising

7. Identity/Bodily

7.1. Less likely to correctly identify women of color than white men

7.2. Technology subject to racial bias based on phenotypical features

7.3. Subjects lose personal security by being surveilled without consent

7.4. Could help identify genetic conditions

8. Educational

8.1. Stakeholders

8.1.1. Students

8.1.1.1. Parents

8.1.2. Teachers

8.1.3. Security

8.2. Activities

8.2.1. Classroom settings

8.2.1.1. Monitoring behavior, facial expressions

8.2.2. Traveling to and from school

8.3. Artifacts

8.3.1. Cameras

8.3.2. Student records

9. Context

9.1. Preexisting racial biases and power structures are reflected in new machine learning technology

9.1.1. Datasets focus on white males, who are the primary developers of this technology

10. Textual documents (5)

10.1. https://www.tandfonline.com/doi/full/10.1080/17439884.2020.1686014

10.1.1. "Facial recognition technology is now being introduced across various aspects of public life. This includes the burgeoning integration of facial recognition and facial detection into compulsory schooling to address issues such as campus security, automated registration and student emotion detection."

10.1.2. "the likelihood of facial recognition technology altering the nature of schools and schooling along divisive, authoritarian and oppressive lines"

10.1.2.1. "even if these identifications are rendered more technically accurate, it can be argued that sorting students into socially constructed racialised and/or gendered categories remains a discriminatory practice – conflating biological characteristics with social attributes. The development of facial recognition technology has helped resuscitate long-debunked race ‘science’ by attempting to formalise phenotypic differences"

10.1.3. "schools are being co-opted as sites for the normalisation of what is a ‘societally dangerous’ technology – what Stark (2019, 55) describes as a ‘facial privacy loss leader’"

10.2. https://pceinc.org/wp-content/uploads/2019/11/20190528-Facial-Recognition-Article-3.pdf

10.2.1. "FRT has the potential to be a useful tool in crime fighting by identifying criminals who are captured on surveillance footage, locating wanted fugitives in a crowd, or spotting terrorists as they enter the country."

10.2.2. "More recently, facial recognition was used by Baltimore police to monitor protesters during the unrest and rioting after the death of Freddie Gray, leading to the apprehension and arrest of protestors that had outstanding warrants."

10.3. https://eprints.lancs.ac.uk/id/eprint/49012/

10.3.1. Are subjects aware that their images have been obtained for and included in the gallery database? Have they consented? In what form?

10.3.2. Have policies on access to the gallery been thoughtfully determined and explicitly stated?

10.3.3. Are people aware that their images are being captured for identification purposes?

10.3.4. Have policies on the use of information captured via FRT been thoughtfully determined and explicitly stated?

10.4. https://www.beaude.net/traces/downloads/San%20Francisco%20Bans%20Facial%20Recognition%20Technology%20-%20The%20New%20York%20Times.pdf

10.4.1. “provides government with unprecedented power to track people going about their daily lives. That’s incompatible with a healthy democracy.”

10.4.2. Mr. Cagle and others said that a worst-case scenario already exists in China, where facial recognition is used to keep close tabs on the Uighurs, a largely Muslim minority, and is being integrated into a national digital panopticon system powered by roughly 200 million surveillance cameras

10.5. https://www.ncbi.nlm.nih.gov/pmc/articles/PMC6634990/

10.5.1. Machine learning techniques, in which a computer program is trained on a large data set to recognize patterns and generates its own algorithms on the basis of learning, have already been used to assist in diagnosing a patient with a rare genetic disorder that had not been identified after years of clinical effort.

10.5.1.1. if FRT is used to monitor compliance, track patients’ whereabouts, or assist in other kinds of surveillance, patients’ trust in physicians could be eroded, undermining the therapeutic alliance.

10.5.2. As FRT is increasingly utilized in health care settings, informed consent will need to be obtained not only for collecting and storing patients’ images but also for the specific purposes for which those images might be analyzed by FRT systems.

10.5.3. FRT diagnostics might not work as well for some racial or ethnic groups as others.

10.5.4. an FRT system used to identify gay men from a set of photos that may have simply identified the kind of grooming and dress habits stereotypically associated with gay men.

10.5.5. Employers might also be interested in using FRT tools to predict mood or behavior as well as to predict longevity, particularly for use in wellness programs to lower employers’ health care costs.

11. Visual representations (5)

11.1. https://cdn.gcn.com/media/img/cd/2021/12/13/facialdriverslicense/route-fifty-lead-image.png?1639385036

11.1.1. driver's license ID tracking

11.2. https://i.dailymail.co.uk/1s/2019/07/23/10/16381526-7275897-image-a-6_1563873646372.jpg

11.2.1. racial disparity in facial tracking

11.3. https://www.frontiersin.org/files/Articles/145042/fpsyg-06-00761-HTML/image_m/fpsyg-06-00761-g001.jpg

11.3.1. ability to detect emotions

11.4. https://mf.b37mrtl.ru/files/news/29/0e/c0/00/faces.si.jpg

11.4.1. detection of genetic disorders

11.5. https://www.eenewseurope.com/sites-old/default/files/images/01-picture-library/eenews/2017/2017-06-26-eenews-jh-nec2.png

11.5.1. can be used to track people in a crowd

12. Quantified data (3)

12.1. https://sitn.hms.harvard.edu/flash/2020/racial-discrimination-in-face-recognition-technology/

12.1.1. white- and male-focused society leads to discrepancy

12.2. https://www.pewresearch.org/internet/2019/09/05/more-than-half-of-u-s-adults-trust-law-enforcement-to-use-facial-recognition-responsibly/

12.2.1. culture of US to place trust in police and law enforcement

12.2.2. working class is less inclined to trust landlords or supervisors

12.2.3. use of facial recognition by advertisers draws negative sentiment

12.3. https://www.oracle.com/webfolder/s/delivery_production/docs/FY16h1/doc31/Hotels-2025-v5a.pdf

12.3.1. a slight majority favor the convenience of unlocking hotel rooms with facial recognition