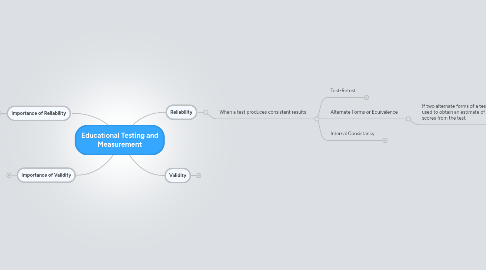

1. Reliability

1.1. When a test produces consistent results

1.1.1. Test-Retest

1.1.1.1. Method of estimating reliability

1.1.1.2. Test given twice

1.1.1.3. Correlation between first set of scores and second set of scores is determined
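
As a minimal sketch of the test-retest procedure above, the snippet below correlates scores from two administrations of the same test. The Pearson coefficient is assumed as the correlation measure, and the helper name `pearson_r` and the score lists are hypothetical illustrations, not data from these notes.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("scores must vary in both administrations")
    return cov / sqrt(var_x * var_y)

# Hypothetical scores for the same five students on two administrations.
first_administration = [78, 85, 62, 90, 71]
second_administration = [80, 83, 65, 92, 70]

# A coefficient near 1.0 suggests the scores are stable over time.
test_retest_reliability = pearson_r(first_administration, second_administration)
print(round(test_retest_reliability, 2))
```

The same calculation would apply to alternate forms, with the second list holding scores from the equivalent form instead of a repeat administration.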

1.1.2. Alternate Forms or Equivalence

1.1.2.1. If two alternate forms of a test exist, both can be given to the same group, and the correlation between the two sets of scores provides an estimate of the reliability of the test scores.

1.1.3. Internal Consistency

1.1.3.1. Split-half methods

1.1.3.1.1. Each item assigned to one half or the other

1.1.3.1.2. Total score on each half is determined and the correlation between the two total scores is computed
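
The split-half steps above can be sketched as follows. The odd/even assignment of items to halves is an assumption (the notes only say each item goes to one half or the other), and the function names and the response matrix are hypothetical.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(var_x * var_y)

def split_half_correlation(responses):
    """Correlate total scores on two halves of one test.

    responses: one list per student, holding that student's item scores.
    Assumed split: items 1, 3, 5, ... versus items 2, 4, 6, ...
    """
    half_a = [sum(row[0::2]) for row in responses]
    half_b = [sum(row[1::2]) for row in responses]
    return pearson_r(half_a, half_b)

# Hypothetical data: 5 students, 6 items, 1 = correct, 0 = incorrect.
responses = [
    [1, 1, 1, 1, 1, 0],
    [1, 0, 1, 1, 0, 0],
    [0, 0, 1, 0, 0, 0],
    [1, 1, 1, 1, 1, 1],
    [0, 1, 0, 0, 1, 0],
]

print(round(split_half_correlation(responses), 2))
```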

1.1.3.2. Kuder-Richardson methods

1.1.3.2.1. Measure the extent to which items within one form of the test have as much in common with one another as do the items in that one form with corresponding items in an equivalent form

2. Validity

2.1. When a test measures what it was designed to measure

2.1.1. Content

2.1.1.1. Assessed by systematically comparing each test item with the instructional objectives to see if they match

2.1.2. Criterion-related

2.1.2.1. Established by correlating test scores with an external standard

2.1.2.1.1. Concurrent

2.1.2.1.2. Predictive

2.1.3. Construct

2.1.3.1. Determined by finding whether test results correspond with scores on other variables as predicted by some rationale or theory