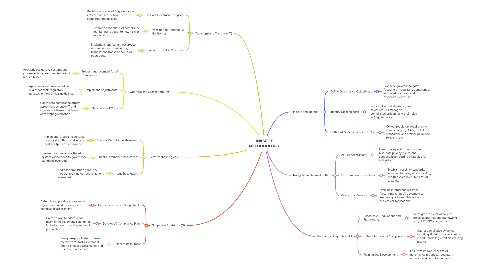

1. Initiate and Identify (I)

1.1. Define Governance Objectives:

1.1.1. Clarify AI governance goals, focusing on regulatory compliance, ethical AI practices, and responsible data use.

1.2. Identify Stakeholders:

1.2.1. Pinpoint key stakeholders (data scientists, risk managers, compliance officers) and their roles in AI governance.

1.3. Gather AI-Specific Requirements:

1.3.1. Collect regulatory requirements (e.g., GDPR), standards (e.g., IEEE AI standards), and ethical guidelines relevant to AI.

2. Navigate and Normalize (N)

2.1. AI Risk Assessment:

2.1.1. Identify AI-specific risks such as bias, data privacy issues, and accountability gaps, and define a mitigation strategy for each.

2.2. Set Compliance Baselines:

2.2.1. Establish baseline standards, including fairness, accountability, and transparency criteria for AI systems.

2.3. Contextual Analysis:

2.3.1. Examine internal and external factors impacting AI governance, like data quality, regulatory trends, and ethical implications.
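The fairness baseline in 2.2.1 can be expressed as a measurable threshold. The sketch below computes the demographic parity difference (the gap in positive-prediction rates between groups) and compares it to a baseline. The function names, the sample data, and the 0.10 threshold are illustrative assumptions, not values prescribed by any regulation or by this framework.

```python
from collections import defaultdict


def positive_rates(predictions, groups):
    """Return the share of positive (1) predictions for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += pred
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Largest gap in positive-prediction rate between any two groups."""
    rates = positive_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def meets_baseline(predictions, groups, max_gap=0.10):
    """Hypothetical baseline check; max_gap is an assumed policy threshold."""
    return demographic_parity_difference(predictions, groups) <= max_gap


# Illustrative data only: 1 = favourable model decision, 0 = unfavourable.
preds = [1, 0, 1, 1, 0, 1, 0, 0]
grps = ["a", "a", "a", "a", "b", "b", "b", "b"]
gap = demographic_parity_difference(preds, grps)
print(f"parity gap: {gap:.2f}, within baseline: {meets_baseline(preds, grps)}")
```

Demographic parity is only one of several fairness criteria; which metric and threshold apply is a policy decision for the stakeholders identified in step 1.2.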

3. Knowledge Acquisition (K)

3.1. Research AI Regulations and Frameworks:

3.1.1. Investigate relevant AI policies, ethical guidelines, and frameworks (e.g., NIST, ISO standards).

3.2. Data Collection for Compliance:

3.2.1. Gather compliance evidence, including algorithm transparency reports, audit logs, and bias testing results.

3.3. Training and Development:

3.3.1. Ensure team awareness of AI governance practices, regulatory updates, and compliance tools.

4. Transform and Transition (T)

4.1. Evaluate Governance Impact:

4.1.1. Review outcomes of AI governance efforts to ensure adherence to standards and policies.

4.2. Transition to Continuous Monitoring:

4.2.1. Establish continuous AI compliance monitoring to adapt to new risks or regulations.

4.3. Sustain Ethical AI Practices:

4.3.1. Implement long-term measures to ensure ongoing compliance, fairness, and transparency in AI operations.
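One way to approach the continuous monitoring described in 4.2.1 is to compare the latest metric readings against agreed limits on a schedule and raise an alert on any breach. The following is a minimal sketch under stated assumptions: the `Threshold` class, the metric names, and the limits are hypothetical and would come from the organisation's own compliance baselines.

```python
from dataclasses import dataclass


@dataclass
class Threshold:
    metric: str                    # name of the monitored metric (assumed)
    limit: float                   # agreed limit for that metric (assumed)
    higher_is_worse: bool = True   # False for metrics such as accuracy


def check_readings(readings, thresholds):
    """Return alert messages for readings that are missing or breach a limit."""
    alerts = []
    for t in thresholds:
        if t.metric not in readings:
            alerts.append(f"{t.metric}: no reading available")
            continue
        value = readings[t.metric]
        breached = value > t.limit if t.higher_is_worse else value < t.limit
        if breached:
            alerts.append(f"{t.metric}: {value} breaches limit {t.limit}")
    return alerts


# Illustrative thresholds and readings only.
thresholds = [
    Threshold("parity_gap", 0.10),
    Threshold("accuracy", 0.85, higher_is_worse=False),
]
readings = {"parity_gap": 0.14, "accuracy": 0.91}
alerts = check_readings(readings, thresholds)
for alert in alerts:
    print(alert)
```

Treating a missing reading as an alert is a deliberate choice in this sketch: a monitor that silently skips absent data can hide the very gaps it is meant to surface.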

5. Optimize and Orchestrate (O)

5.1. Process Improvement for AI Compliance:

5.1.1. Regularly evaluate AI systems and processes to refine compliance and reduce biases.

5.2. Implement Adjustments:

5.2.1. Apply improvements based on audit feedback, regulatory updates, or new ethical guidelines.

5.3. Synchronize Efforts:

5.3.1. Coordinate compliance efforts across data science, IT, and compliance teams to achieve consistent governance.

6. Perform and Progress (P)

6.1. Execute Compliance Measures:

6.1.1. Implement AI-specific controls, monitor algorithms, and assess model outputs for compliance.

6.2. Track Governance Milestones:

6.2.1. Use metrics to evaluate the AI system’s adherence to governance standards over time.

6.3. Issue Resolution:

6.3.1. Address compliance gaps in AI models, applying corrective actions as needed.
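Steps 6.2 and 6.3 could be supported by a simple record of control results per review period. The sketch below derives an adherence rate over time and lists the controls that still need corrective action. The control names, period labels, and pass/fail values are made up for illustration.

```python
def adherence_rate(results):
    """Fraction of controls that passed in one review period."""
    if not results:
        raise ValueError("no control results recorded for this period")
    return sum(1 for passed in results.values() if passed) / len(results)


def open_gaps(results):
    """Names of controls that failed and need corrective action."""
    return sorted(name for name, passed in results.items() if not passed)


# Hypothetical review history: control name -> passed (True) or failed (False).
history = {
    "period-1": {"bias_test": True, "audit_log": False,
                 "model_card": False, "privacy_review": True},
    "period-2": {"bias_test": True, "audit_log": True,
                 "model_card": False, "privacy_review": True},
}

trend = {period: adherence_rate(results) for period, results in history.items()}
for period, rate in trend.items():
    print(f"{period}: {rate:.0%} adherence, open gaps: {open_gaps(history[period])}")
```

A single pass rate hides the severity of individual failures, so in practice each control would likely carry a weight or risk rating as well.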

7. Scope and Strategize (S)

7.1. Define Governance Scope for AI:

7.1.1. Determine applicable AI processes, algorithms, and data sources for compliance assessment.

7.2. Develop AI Compliance Plan:

7.2.1. Create a plan to address and maintain AI governance standards, including control testing and audit preparation.

7.3. Allocate Resources:

7.3.1. Assign responsibilities to team members for tasks like model audits, impact assessments, and risk documentation.