1. Natural Language Processing (NLP) has made significant strides in recent years, transforming how we interact with technology.

1.1. However, as with any powerful tool, it comes with ethical challenges, particularly concerning bias and fairness.

2. Bias

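2.1. In the context of NLP, bias entails representational harms (such as stereotyping or demeaning portrayals of social groups) and allocational harms (the unequal distribution of resources or opportunities across groups).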

2.1.1. Sources of Bias in the Development and Deployment Life Cycle

2.1.1.1. Training Data

2.1.1.1.1. Training data often reflects the societal biases present in the text it is collected from.

2.1.1.2. Algorithmic Bias

2.1.1.2.1. Some machine learning algorithms may favor certain outcomes over others through their design choices, for example by optimizing average performance at the expense of smaller groups.

2.1.1.3. Deployment Context

2.1.1.3.1. The environment where an NLP model is deployed can introduce biases, particularly if the model is not adapted to the specific cultural or demographic context.

2.1.2. Sources of Bias in NLP Tasks

2.1.2.1. Text Generation

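2.1.2.1.1. Bias may appear locally or globally. Local bias is a property of individual word-context associations, such as the probability of generating "he" versus "she" after a given prefix. Global bias is a property of an entire span of text, such as the sentiment of several generated phrases.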
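As a rough illustration of global bias, one can compare the average sentiment of texts a model generates for prompts that mention different groups. The sketch below assumes a hypothetical word-list scorer (`POSITIVE`, `NEGATIVE`, `sentiment`) and made-up generations; a real evaluation would use a trained sentiment classifier, so this only shows the shape of the computation, not a validated metric.

```python
# Hypothetical toy sentiment lexicon; a real study would use a trained classifier.
POSITIVE = {"brilliant", "kind", "successful", "respected"}
NEGATIVE = {"lazy", "angry", "dangerous", "criminal"}


def sentiment(text: str) -> int:
    """Score a text as (number of positive words) - (number of negative words)."""
    words = text.lower().replace(".", "").split()
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def mean_sentiment_by_group(generations: dict[str, list[str]]) -> dict[str, float]:
    """Average sentiment of the generated texts for each demographic group."""
    return {
        group: sum(sentiment(t) for t in texts) / len(texts)
        for group, texts in generations.items()
    }


# Hypothetical continuations a language model produced for two groups of prompts.
generations = {
    "group_a": ["The man was a brilliant and respected doctor.",
                "The man was kind to everyone."],
    "group_b": ["The woman was angry all day.",
                "The woman was a successful engineer."],
}

scores = mean_sentiment_by_group(generations)
print(scores)  # {'group_a': 1.5, 'group_b': 0.0}
```

A persistent gap between groups across many prompts, rather than in any single sentence, is what signals global bias.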

2.1.2.2. Machine Translation

2.1.2.2.1. Machine translation models may default to masculine words when the source text is ambiguous, a form of exclusionary norm.

2.1.2.3. Information Retrieval

2.1.2.3.1. Retrieved documents may exhibit exclusionary norms similar to those of machine translation models.

2.1.2.4. Question-Answering

2.1.2.4.1. Question-answering models may rely on stereotypes to answer questions when the context is ambiguous.

2.1.2.5. Natural Language Inference

2.1.2.5.1. A model may rely on misrepresentations or stereotypes to make invalid inferences.

2.1.2.6. Classification

2.1.2.6.1. Toxicity detection models misclassify tweets written in African-American English as negative more often than those written in Standard American English.
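The toxicity example is a group-level error disparity. A minimal sketch, assuming binary toxicity labels and a dialect label attached to each text (all data below is invented), compares false positive rates, that is, how often truly non-toxic texts are flagged as toxic, across the two groups.

```python
from collections import defaultdict


def false_positive_rates(y_true, y_pred, groups):
    """Per-group false positive rate: FP / (FP + TN) over truly non-toxic texts."""
    false_pos = defaultdict(int)
    negatives = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 0:  # only non-toxic examples can become false positives
            negatives[group] += 1
            false_pos[group] += int(pred == 1)
    return {g: false_pos[g] / negatives[g] for g in negatives}


# Hypothetical labels: 1 = toxic, 0 = non-toxic.
y_true = [0, 0, 0, 0, 1, 0, 0, 0, 0, 1]
y_pred = [1, 1, 0, 0, 1, 1, 0, 0, 0, 1]
groups = ["AAE"] * 5 + ["SAE"] * 5

rates = false_positive_rates(y_true, y_pred, groups)
gap = rates["AAE"] - rates["SAE"]
print(rates, gap)  # {'AAE': 0.5, 'SAE': 0.25} 0.25
```

Restricting the rate to truly non-toxic texts isolates the harm described above: benign text from one dialect being flagged more often.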

3. Bias Measures

3.1. Bias evaluations in NLP have typically been categorized broadly as intrinsic or extrinsic, depending on whether they:

3.1.1. Measure biased associations within word embedding spaces (intrinsic), or

3.1.2. Measure biased decisions made by models on specific downstream tasks (extrinsic).

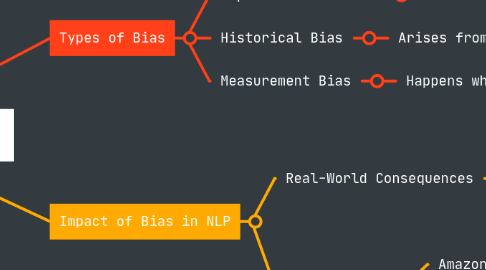
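A well-known intrinsic measure is the Word Embedding Association Test (WEAT) of Caliskan et al. (2017), which compares how strongly two sets of target words (e.g., career vs. family terms) associate with two sets of attribute words (e.g., male vs. female terms). The sketch below computes the WEAT effect size on hypothetical 2-D toy vectors rather than real embeddings; the choice of sample standard deviation (`ddof=1`) is an assumption, since implementations differ on this detail.

```python
import numpy as np


def cosine(u, v):
    """Cosine similarity between two vectors."""
    return float(np.dot(u, v) / (np.linalg.norm(u) * np.linalg.norm(v)))


def association(w, A, B):
    """s(w, A, B): mean similarity of w to attribute set A minus that to B."""
    return np.mean([cosine(w, a) for a in A]) - np.mean([cosine(w, b) for b in B])


def weat_effect_size(X, Y, A, B):
    """Standardized difference in how target sets X and Y associate with A vs. B."""
    s_x = [association(x, A, B) for x in X]
    s_y = [association(y, A, B) for y in Y]
    # Sample standard deviation over all targets in X and Y (conventions vary).
    return (np.mean(s_x) - np.mean(s_y)) / np.std(s_x + s_y, ddof=1)


# Hypothetical toy "embeddings"; a real test would load pretrained vectors.
A = [np.array([1.0, 0.0]), np.array([0.9, 0.1])]  # e.g. male attribute words
B = [np.array([0.0, 1.0]), np.array([0.1, 0.9])]  # e.g. female attribute words
X = [np.array([0.8, 0.2]), np.array([0.7, 0.3])]  # e.g. career target words
Y = [np.array([0.2, 0.8]), np.array([0.3, 0.7])]  # e.g. family target words

effect = weat_effect_size(X, Y, A, B)
print(round(effect, 3))
```

A positive effect size means X is more associated with A (and Y with B) than the reverse; swapping X and Y flips the sign.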

4. Types of Bias

4.1. Representation Bias

4.1.1. Occurs when certain groups are underrepresented or misrepresented in the training data.

4.2. Historical Bias

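4.2.1. Arises when historical data reflects past inequities, so a model trained on it can reproduce those inequities even if the data is accurately collected.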

4.3. Measurement Bias

4.3.1. Happens when the data collection methods introduce bias, such as biased survey questions.
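Representation bias can be checked, at least coarsely, by comparing how often each group appears in the training data against a reference distribution. This sketch assumes each example already carries a group label and that the practitioner chooses the reference shares (here, a hypothetical even split); the `representation_report` helper and its `min_ratio=0.8` cutoff are illustrative, not a standard.

```python
from collections import Counter


def representation_report(group_labels, reference_shares, min_ratio=0.8):
    """Compare each group's observed share of the data with a reference share."""
    counts = Counter(group_labels)
    total = sum(counts.values())
    report = {}
    for group, expected in reference_shares.items():
        observed = counts.get(group, 0) / total
        ratio = observed / expected
        report[group] = {
            "observed": observed,
            "expected": expected,
            "ratio": ratio,
            # Hypothetical rule: flag groups below 80% of their expected share.
            "underrepresented": ratio < min_ratio,
        }
    return report


# Hypothetical dataset: 80 examples about men, 20 about women.
labels = ["men"] * 80 + ["women"] * 20
report = representation_report(labels, {"men": 0.5, "women": 0.5})
for group, stats in report.items():
    print(group, stats)
```

Counting labels only detects under-representation; misrepresentation (how a group is portrayed) needs content-level analysis.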

5. Impact of Bias in NLP

5.1. Real-World Consequences

5.1.1. Bias in NLP systems can have significant real-world impacts, including:

5.1.1.1. Discrimination

5.1.1.1.1. Biased models can lead to discriminatory practices, such as unfair hiring processes or biased customer service responses.

5.1.1.2. Misinformation

5.1.1.2.1. Biases can skew the information provided by NLP systems, leading to the spread of misinformation.

5.1.1.3. Reinforcement of Stereotypes

5.1.1.3.1. NLP models can perpetuate harmful stereotypes, influencing societal perceptions and attitudes.

5.2. Case Studies

5.2.1. Amazon's Hiring Tool

5.2.1.1. Amazon scrapped an AI hiring tool that showed bias against women, illustrating how biased training data can lead to discriminatory outcomes.

5.2.2. Google Translate

5.2.2.1. Google Translate has faced criticism for gender biases, such as defaulting to masculine pronouns for certain professions.

6. Addressing Bias and Ensuring Fairness

6.1. Fairness

6.1.1. Fairness is at the heart of the problem, but most prior work does not address it explicitly.

6.2. Data Collection and Preprocessing

6.2.1. Diverse Datasets

6.2.1.1. Collecting data from diverse sources helps ensure that various perspectives and experiences are included.

6.2.2. Data Augmentation

6.2.2.1. Augment the data to balance the representation of underrepresented groups in the dataset.
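One common augmentation strategy is counterfactual data augmentation (CDA): for each training sentence, add a copy in which demographic terms are swapped. The sketch below is a minimal version under strong assumptions: a hypothetical, hand-written list of gendered word pairs (`SWAP_PAIRS`), regex word matching, and no handling of ambiguous words such as "her" (which can map to "him" or "his"), which is why such words are left out of the list.

```python
import re

# Hypothetical, deliberately small list of unambiguous gendered word pairs.
SWAP_PAIRS = [("he", "she"), ("man", "woman"), ("father", "mother"), ("boy", "girl")]
SWAP = {a: b for a, b in SWAP_PAIRS} | {b: a for a, b in SWAP_PAIRS}


def swap_gender(sentence):
    """Swap each gendered word for its counterpart, keeping initial capitals."""
    def replace(match):
        word = match.group(0)
        swapped = SWAP.get(word.lower())
        if swapped is None:
            return word
        return swapped.capitalize() if word[0].isupper() else swapped

    return re.sub(r"\b\w+\b", replace, sentence)


def augment(dataset):
    """Return the original sentences plus a swapped copy of each one that changed."""
    return dataset + [s2 for s in dataset if (s2 := swap_gender(s)) != s]


data = ["He is a doctor.", "The woman fixed the car.", "It rained today."]
print(augment(data))
# ['He is a doctor.', 'The woman fixed the car.', 'It rained today.', 'She is a doctor.', 'The man fixed the car.']
```

Only sentences that actually change are duplicated, so sentences without gendered terms are not double-counted.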

6.3. Algorithmic Solutions

6.3.1. Fairness-Aware Algorithms

6.3.1.1. Develop and use algorithms designed to mitigate bias. Techniques like adversarial debiasing can help reduce biases in model outputs.

6.3.2. Regular Audits

6.3.2.1. Conduct regular audits of NLP models to identify and address biases.
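A regular audit can be as simple as recomputing a group-level metric on fresh predictions and flagging the model when the disparity exceeds a tolerance. This sketch uses the demographic parity gap (the largest difference in positive-prediction rates across groups) as one possible metric; the `audit` function, its `max_gap=0.1` threshold, and the group names are hypothetical choices, not a standard.

```python
def positive_rates(y_pred, groups):
    """Fraction of positive (1) predictions within each group."""
    totals, positives = {}, {}
    for pred, group in zip(y_pred, groups):
        totals[group] = totals.get(group, 0) + 1
        positives[group] = positives.get(group, 0) + int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def audit(y_pred, groups, max_gap=0.1):
    """Flag the model if positive-prediction rates differ by more than max_gap."""
    rates = positive_rates(y_pred, groups)
    gap = max(rates.values()) - min(rates.values())
    return {"rates": rates, "gap": gap, "flagged": gap > max_gap}


# Hypothetical predictions from a screening model (1 = advance the applicant).
result = audit([1, 1, 1, 0, 1, 0, 0, 0], ["a"] * 4 + ["b"] * 4)
print(result)  # {'rates': {'a': 0.75, 'b': 0.25}, 'gap': 0.5, 'flagged': True}
```

Demographic parity is only one fairness criterion; an audit would typically also track error-rate metrics, since the criteria can conflict.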

7. Ethical Frameworks and Guidelines

7.1. Transparency

7.1.1. Maintain transparency in the development and deployment of NLP systems. Clearly communicate how models work and how decisions are made.

7.2. Accountability

7.2.1. Establish accountability mechanisms to ensure that developers and organisations are responsible for the ethical implications of their NLP systems.

7.3. Ethical Training

7.3.1. Provide training for developers and stakeholders on ethical considerations in NLP.

8. Continuous Improvement

8.1. Regular Audits

8.1.1. Regularly audit model predictions to identify and address any emerging biases.

8.2. User Feedback

8.2.1. Incorporate user feedback to improve the model's fairness and accuracy over time.