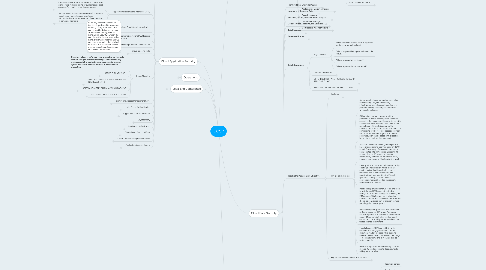

1. Cloud Application Security

1.1. Determining Data Sensitivity and Importance

1.1.1. Independence and the ability to present a true and accurate account of information types along with the requirements for confidentiality, integrity, and availability may be the difference between a successful project and a failure.

1.1.2. “CLOUD-FRIENDLINESS” QUESTIONS: What would the impact be if

1.1.2.1. The information/data became widely public and widely distributed (including crossing geographic boundaries)?

1.1.2.2. An employee of the cloud provider accessed the application?

1.1.2.3. The process or function was manipulated by an outsider?

1.1.2.4. The process or function failed to provide expected results?

1.1.2.5. The information/data were unexpectedly changed?

1.1.2.6. The application was unavailable for a period of time?

1.2. Application Programming Interfaces (APIs)

1.2.1. Representational State Transfer (REST): A software architecture style consisting of guidelines and best practices for creating scalable web services

1.2.1.1. Uses the simple HTTP protocol

1.2.1.2. Supports many different data formats, such as JSON, XML, and YAML

1.2.1.3. Offers good performance and scalability and supports caching

1.2.1.4. Widely used

1.2.2. Simple Object Access Protocol (SOAP): A protocol specification for exchanging structured information in the implementation of web services in computer networks

1.2.2.1. Wraps data in a SOAP envelope and then uses HTTP (or FTP, SMTP, etc.) to transfer it

1.2.2.2. Supports only the XML format

1.2.2.3. Slower performance, scalability can be complex, and caching is not possible

1.2.2.4. Used where REST is not possible; provides WS-* features (e.g., WS-Security)
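To make the REST/SOAP contrast concrete, the sketch below builds the same hypothetical "get user 42" call both ways using only the Python standard library. The `/users/{id}` path, the `urn:example:users` namespace, and the `GetUser`/`UserId` element names are illustrative assumptions rather than a real service; only the envelope namespace is the standard SOAP 1.1 one.

```python
import json
import xml.etree.ElementTree as ET

# Standard SOAP 1.1 envelope namespace.
SOAP_ENV_NS = "http://schemas.xmlsoap.org/soap/envelope/"
# Hypothetical namespace for an example user service.
SERVICE_NS = "urn:example:users"


def rest_get_user(user_id: int) -> tuple[str, str]:
    """REST: the resource is identified by the URL and the action by the HTTP verb."""
    return "GET", f"/users/{user_id}"


def rest_user_body(user: dict) -> str:
    """REST responses can use many formats; JSON is shown here."""
    return json.dumps(user)


def soap_get_user(user_id: int) -> str:
    """SOAP: the operation and its arguments travel inside an XML envelope,
    typically POSTed to a single service endpoint."""
    ET.register_namespace("soap", SOAP_ENV_NS)
    envelope = ET.Element(f"{{{SOAP_ENV_NS}}}Envelope")
    body = ET.SubElement(envelope, f"{{{SOAP_ENV_NS}}}Body")
    operation = ET.SubElement(body, f"{{{SERVICE_NS}}}GetUser")
    ET.SubElement(operation, f"{{{SERVICE_NS}}}UserId").text = str(user_id)
    return ET.tostring(envelope, encoding="unicode")


if __name__ == "__main__":
    print(rest_get_user(42))
    print(rest_user_body({"id": 42, "name": "alice"}))
    print(soap_get_user(42))
```

A REST read needs no request body at all, while every SOAP call carries an XML envelope; that extra wrapping is one reason SOAP tends to be heavier.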

1.3. Common Pitfalls of Cloud Security Application Deployment

1.3.1. ON-PREMISE DOES NOT ALWAYS TRANSFER (AND VICE VERSA)

1.3.1.1. Present performance and functionality may not be transferable. Current configurations and applications may be hard to replicate on or through cloud services.

1.3.1.1.1. First, they were not developed with cloud-based services in mind. As cloud-based service offerings evolve and expand, they build on previous technologies and development but do not always maintain support for older development approaches and legacy systems.

1.3.1.1.2. Second, not all applications can be “forklifted” to the cloud. Forklifting an application is the process of migrating an entire application the way it runs in a traditional infrastructure with minimal code changes.

1.3.2. NOT ALL APPS ARE “CLOUD-READY”

1.3.2.1. Business-critical systems were developed, tested, and assessed in on-premise or traditional environments to a level where confidentiality and integrity have been verified and assured. Many high-end applications come with distinct security and regulatory restrictions or rely on legacy coding projects.

1.3.3. LACK OF TRAINING AND AWARENESS

1.3.3.1. New development techniques and approaches require training and a willingness to utilize new services.

1.3.4. DOCUMENTATION AND GUIDELINES (OR LACK THEREOF)

1.3.4.1. Developers have to follow relevant documentation, guidelines, methodologies, processes, and lifecycles to reduce opportunities for unnecessary or heightened risk to be introduced. A disconnect may exist between some providers and developers on how to use, integrate with, or meet vendor requirements for development.

1.3.5. COMPLEXITIES OF INTEGRATION

1.3.5.1. When developers and operational resources do not have open or unrestricted access to supporting components and services, integration can be complicated, particularly where the cloud provider manages infrastructure, applications, and integration platforms.

1.3.5.2. From a troubleshooting perspective, it can prove difficult to track or collect events and transactions across interdependent or underlying components. In an effort to reduce these complexities, where possible (and available), the cloud provider’s API should be used.

1.3.6. OVERARCHING CHALLENGES

1.3.6.1. Developers must keep in mind two key risks associated with applications that run in the cloud:

1.3.6.1.1. Multi-tenancy

1.3.6.1.2. Third-party administrators

1.3.6.2. Developers must understand the security requirements based on:

1.3.6.2.1. Deployment model (public, private, community, hybrid) that the application will run in

1.3.6.2.2. Service model (IaaS, PaaS, or SaaS)

1.3.6.3. Developers must be aware that metrics will always be required

1.3.6.3.1. Cloud-based applications may rely more heavily on metrics than internal applications do to supply visibility into who is accessing the application and what actions they are performing.

1.3.6.4. Developers must be aware of encryption dependencies for:

1.3.6.4.1. Encryption of data at rest

1.3.6.4.2. Encryption of data in transit

1.3.6.4.3. Data masking (or data obfuscation)
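Because cloud-based applications depend on metrics and logs for visibility into who is accessing them and what they are doing, it helps to emit structured audit events. The minimal sketch below, using only the standard library, writes one JSON line per action; the field names (`user_id`, `action`, `resource`, `outcome`, `source_ip`) and the `app.audit` logger name are assumptions chosen for illustration.

```python
import json
import logging
from datetime import datetime, timezone

# Hypothetical logger name; in practice route it to the provider's log service.
audit_logger = logging.getLogger("app.audit")


def audit_event(user_id: str, action: str, resource: str,
                outcome: str, source_ip: str) -> str:
    """Record who did what, to which resource, from where, and with what result."""
    event = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "user_id": user_id,
        "action": action,
        "resource": resource,
        "outcome": outcome,
        "source_ip": source_ip,
    }
    line = json.dumps(event, sort_keys=True)  # one machine-parseable line per event
    audit_logger.info(line)
    return line


if __name__ == "__main__":
    logging.basicConfig(level=logging.INFO, format="%(message)s")
    audit_event("u-123", "read", "/reports/q3", "allowed", "203.0.113.7")
```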

1.4. Software Development Lifecycle (SDLC) Process for a Cloud Environment

1.4.1. SDLC PROCESS MODELS PHASES

1.4.1.1. 1. Planning and requirements analysis: Business (functional and non-functional), quality-assurance, and security requirements and standards are determined, and risks associated with the project are identified. This phase is the main focus of the project managers and stakeholders.

1.4.1.2. 2. Defining: This phase clearly defines and documents the product requirements so that they can be presented to the customers for approval. This is done through a requirement specification document, which contains all the product requirements to be designed and developed during the project lifecycle.

1.4.1.3. 3. Designing: System design helps specify hardware and system requirements and define the overall system architecture. The system design specifications serve as input for the next phase of the model. Threat modeling and secure design elements should be undertaken and discussed here.

1.4.1.4. 4. Developing: Once the system design documents are received, work is divided into modules/units and actual coding starts. This is typically the longest phase of the software development lifecycle. Activities include code review, unit testing, and static analysis.

1.4.1.5. 5. Testing: After the code is developed, it is tested against the requirements to make sure that the product actually meets the needs gathered during the requirements phase. During this phase, unit testing, integration testing, system testing, and acceptance testing are all conducted.

1.4.2. SECURE OPERATIONS PHASE

1.4.2.1. Proper software configuration management and versioning are essential to application security. Tools that support this include:

1.4.2.1.1. Puppet: Puppet is a configuration management system that allows you to define the state of your IT infrastructure and then automatically enforces the correct state.

1.4.2.1.2. Chef: With Chef, you can automate how you build, deploy, and manage your infrastructure. The Chef server stores your recipes as well as other configuration data. The Chef client is installed on each server, virtual machine, container, or networking device you manage (called nodes). The client periodically polls the Chef server for the latest policy and the state of your network. If anything on the node is out of date, the client brings it up to date.
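Puppet and Chef both follow a desired-state model: you declare what a node should look like, and an agent repeatedly compares that declaration with reality and corrects any drift. The toy sketch below illustrates only that convergence loop; it is not Puppet or Chef code, and the setting names are hypothetical.

```python
def converge(desired: dict[str, str], actual: dict[str, str]) -> list[str]:
    """Update `actual` in place so every declared setting matches `desired`.

    Returns a description of each change made. Settings not declared in
    `desired` are left alone, and rerunning on a converged node changes nothing.
    """
    changes = []
    for key, want in sorted(desired.items()):
        have = actual.get(key)
        if have != want:
            actual[key] = want
            changes.append(f"set {key}: {have!r} -> {want!r}")
    return changes


if __name__ == "__main__":
    # Hypothetical desired state for a node.
    desired = {"sshd.PermitRootLogin": "no", "ntp.server": "time.example.com"}
    # Observed state with one drifted setting.
    node = {"sshd.PermitRootLogin": "yes", "ntp.server": "time.example.com"}
    print(converge(desired, node))  # first run corrects the drift
    print(converge(desired, node))  # second run makes no changes
```

The idempotence shown by the second call is what allows such agents to run on a schedule without side effects.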

1.4.2.2. Activities

1.4.2.2.1. Dynamic analysis

1.4.2.2.2. Vulnerability assessments and penetration testing (as part of a continuous monitoring plan)

1.4.2.2.3. Activity monitoring

1.4.2.2.4. Layer-7 firewalls (e.g., web application firewalls)

1.4.3. DISPOSAL PHASE

1.4.3.1. Challenge: ensuring that data is properly disposed of

1.4.3.1.1. Crypto-shredding is, in effect, the deletion of the key used to encrypt data stored in the cloud. Without the key, the remaining ciphertext cannot be decrypted, so the data is rendered unrecoverable.
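The sketch below illustrates crypto-shredding with a per-tenant key held in a hypothetical in-memory `KeyStore` standing in for a key management service. To stay within the standard library it uses a toy HMAC-SHA256 keystream (a PRF in counter mode) with no integrity protection; that cipher is an assumption for illustration only, and a real system would use a vetted authenticated cipher such as AES-GCM from a maintained library. The point is the final step: once the key is deleted, the stored ciphertext can no longer be decrypted.

```python
import hashlib
import hmac
import secrets


def _keystream(key: bytes, nonce: bytes, length: int) -> bytes:
    """Toy keystream: HMAC-SHA256(key, nonce || counter). Illustration only."""
    out = bytearray()
    counter = 0
    while len(out) < length:
        block = nonce + counter.to_bytes(8, "big")
        out += hmac.new(key, block, hashlib.sha256).digest()
        counter += 1
    return bytes(out[:length])


class KeyStore:
    """Hypothetical stand-in for a cloud key management service."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}

    def create_key(self, key_id: str) -> None:
        self._keys[key_id] = secrets.token_bytes(32)

    def get_key(self, key_id: str) -> bytes:
        return self._keys[key_id]  # raises KeyError once the key is shredded

    def shred(self, key_id: str) -> None:
        """Crypto-shredding: destroy the key, not the (possibly replicated) data."""
        del self._keys[key_id]


def encrypt(store: KeyStore, key_id: str, plaintext: bytes) -> tuple[bytes, bytes]:
    nonce = secrets.token_bytes(16)  # must never repeat for the same key
    stream = _keystream(store.get_key(key_id), nonce, len(plaintext))
    return nonce, bytes(p ^ s for p, s in zip(plaintext, stream))


def decrypt(store: KeyStore, key_id: str, nonce: bytes, ciphertext: bytes) -> bytes:
    stream = _keystream(store.get_key(key_id), nonce, len(ciphertext))
    return bytes(c ^ s for c, s in zip(ciphertext, stream))


if __name__ == "__main__":
    store = KeyStore()
    store.create_key("tenant-42")
    nonce, ct = encrypt(store, "tenant-42", b"customer record")
    print(decrypt(store, "tenant-42", nonce, ct))  # b'customer record'
    store.shred("tenant-42")
    try:
        decrypt(store, "tenant-42", nonce, ct)
    except KeyError:
        print("key shredded: ciphertext is unrecoverable")
```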

1.5. Assessing Common Vulnerabilities

1.5.1. Open Web Application Security Project (OWASP) Top 10 (2013)

1.5.1.1. “Injection: Includes injection flaws such as SQL, OS, LDAP, and other injections. These occur when untrusted data is sent to an interpreter as part of a command or query. If the interpreter is successfully tricked, it will execute the unintended commands or access data without proper authorization.

1.5.1.2. “Broken authentication and session management: Application functions related to authentication and session management are often not implemented correctly, allowing attackers to compromise passwords, keys, or session tokens or to exploit other implementation flaws to assume other users’ identities.

1.5.1.3. “Cross-site scripting (XSS): XSS flaws occur whenever an application takes untrusted data and sends it to a web browser without proper validation or escaping. XSS allows attackers to execute scripts in the victim’s browser, which can hijack user sessions, deface websites, or redirect the user to malicious sites.

1.5.1.4. “Insecure direct object references: A direct object reference occurs when a developer exposes a reference to an internal implementation object, such as a file, directory, or database key. Without an access control check or other protection, attackers can manipulate these references to access unauthorized data.

1.5.1.5. “Security misconfiguration: Good security requires having a secure configuration defined and deployed for the application, frameworks, application server, web server, database server, and platform. Secure settings should be defined, implemented, and maintained, as defaults are often insecure. Additionally, software should be kept up to date.

1.5.1.6. “Sensitive data exposure: Many web applications do not properly protect sensitive data, such as credit cards, tax IDs, and authentication credentials. Attackers may steal or modify such weakly protected data to conduct credit card fraud, identity theft, or other crimes. Sensitive data deserves extra protection, such as encryption at rest or in transit, as well as special precautions when exchanged with the browser.

1.5.1.7. “Missing function-level access control: Most web applications verify function-level access rights before making that functionality visible in the UI. However, applications need to perform the same access control checks on the server when each function is accessed. If requests are not verified, attackers will be able to forge requests in order to access functionality without proper authorization.

1.5.1.8. “Cross-site request forgery (CSRF): A CSRF attack forces a logged-on victim’s browser to send a forged HTTP request, including the victim’s session cookie and any other automatically included authentication information, to a vulnerable web application. This allows the attacker to force the victim’s browser to generate requests that the vulnerable application thinks are legitimate requests from the victim.

1.5.1.9. “Using components with known vulnerabilities: Components, such as libraries, frameworks, and other software modules, almost always run with full privileges. If a vulnerable component is exploited, such an attack can facilitate serious data loss or server takeover. Applications using components with known vulnerabilities may undermine application defenses and enable a range of possible attacks and impacts.

1.5.1.10. “Unvalidated redirects and forwards: Web applications frequently redirect and forward users to other pages and websites, and use untrusted data to determine the destination pages. Without proper validation, attackers can redirect victims to phishing or malware sites or use forwards to access unauthorized pages.”
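Several of these risks have well-known coding countermeasures. The sketch below, using only the Python standard library, shows three of them: a parameterized query against injection, HTML escaping against XSS, and an allowlist check against unvalidated redirects. The `users` table and the `app.example.com` allowlist are hypothetical, and the redirect check is deliberately simplified rather than a complete defense.

```python
import html
import sqlite3
from urllib.parse import urlparse

# Hypothetical allowlist of hosts the application may redirect to.
ALLOWED_REDIRECT_HOSTS = {"app.example.com"}


def find_user(conn: sqlite3.Connection, username: str) -> list[tuple]:
    """Injection defense: the ? placeholder passes username as data, never as SQL."""
    cur = conn.execute("SELECT id, name FROM users WHERE name = ?", (username,))
    return cur.fetchall()


def render_greeting(name: str) -> str:
    """XSS defense: escape untrusted data before writing it into HTML."""
    return "<p>Hello, " + html.escape(name) + "</p>"


def safe_redirect(target: str, default: str = "/") -> str:
    """Redirect defense: allow same-site paths or allowlisted HTTPS hosts only."""
    if "\\" in target:
        return default  # some browsers treat backslashes like slashes
    parsed = urlparse(target)
    if not parsed.scheme and not parsed.netloc and target.startswith("/"):
        return target  # relative path on this site
    if parsed.scheme == "https" and parsed.hostname in ALLOWED_REDIRECT_HOSTS:
        return target
    return default
```

Note that `//evil.example.net` parses with a network location, so it is not treated as a same-site path and falls back to the default.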

1.5.2. NIST Framework for Improving Critical Infrastructure Cybersecurity

1.5.2.1. Parts

1.5.2.1.1. Framework Core: Cybersecurity activities and outcomes divided into five functions: Identify, Protect, Detect, Respond, and Recover

1.5.2.1.2. Framework Profile: To help the company align activities with business requirements, risk tolerance, and resources

1.5.2.1.3. Framework Implementation Tiers: To help organizations categorize where they are with their approach

1.5.2.2. Framework provides a common taxonomy and mechanism for organizations to

1.5.2.2.1. Describe their current cybersecurity posture

1.5.2.2.2. Describe their target state for cybersecurity

1.5.2.2.3. Identify and prioritize opportunities for improvement within the context of a continuous and repeatable process

1.5.2.2.4. Assess progress toward the target state

1.5.2.2.5. Communicate among internal and external stakeholders about cybersecurity risk

1.6. Cloud-Specific Risks

1.6.1. Applications that run in a PaaS environment may need security controls baked into them

1.6.1.1. Encryption may need to be programmed into applications.

1.6.1.2. Logging may be difficult, depending on what the cloud service provider can offer your organization.

1.6.1.3. Ensure that one application cannot access other applications on the platform unless it is allowed access through a control.

1.6.2. CSA: The Notorious Nine: Cloud Computing Top Threats in 2013

1.6.2.1. Data breaches: If a multi-tenant cloud service database is not properly designed, a flaw in one client’s application could allow an attacker access not only to that client’s data but to every other client’s data as well.

1.6.2.2. Data loss: Any accidental deletion by the cloud service provider, or worse, a physical catastrophe such as a fire or earthquake, could lead to the permanent loss of customers’ data unless the provider takes adequate measures to back up data. Furthermore, the burden of avoiding data loss does not fall solely on the provider’s shoulders. If a customer encrypts his or her data before uploading it to the cloud but loses the encryption key, the data will be lost as well.

1.6.2.3. Account hijacking: If attackers gain access to your credentials, they can eavesdrop on your activities and transactions, manipulate data, return falsified information, and redirect your clients to illegitimate sites. Your account or service instances may become a new base for the attacker.

1.6.2.4. Insecure APIs: Cloud computing providers expose a set of software interfaces or APIs that customers use to manage and interact with cloud services. Provisioning, management, orchestration, and monitoring are all performed using these interfaces. The security and availability of general cloud services is dependent on the security of these basic APIs. From authentication and access control to encryption and activity monitoring, these interfaces must be designed to protect against both accidental and malicious attempts to circumvent policy.

1.6.2.5. Denial of service: By forcing the victim cloud service to consume inordinate amounts of finite system resources such as processor power, memory, disk space, or network bandwidth, the attacker causes an intolerable system slowdown.

1.6.2.6. Malicious insiders: CERT defines an insider threat as “A current or former employee, contractor, or other business partner who has or had authorized access to an organization’s network, system, or data and intentionally exceeded or misused that access in a manner that negatively affected the confidentiality, integrity, or availability of the organization’s information or information systems.”

1.6.2.7. Abuse of cloud services: It might take an attacker years to crack an encryption key using his own limited hardware, but using an array of cloud servers, he might be able to crack it in minutes. Alternately, he might use that array of cloud servers to stage a DDoS attack, serve malware, or distribute pirated software.

1.6.2.8. Insufficient due diligence: Too many enterprises jump into the cloud without understanding the full scope of the undertaking. Without a complete understanding of the CSP environment, applications, or services being pushed to the cloud, and operational responsibilities such as incident response, encryption, and security monitoring, organizations are taking on unknown levels of risk in ways they may not even comprehend but that are a far departure from their current risks.

1.6.2.9. Shared technology issues: Whether it’s the underlying components that make up this infrastructure (CPU caches, GPUs, etc.) that were not designed to offer strong isolation properties for a multi-tenant architecture (IaaS), re-deployable platforms (PaaS), or multi-customer applications (SaaS), the threat of shared vulnerabilities exists in all delivery models. A defense-in-depth strategy is recommended and should include compute, storage, network, application, and user security enforcement and monitoring, whether the service model is IaaS, PaaS, or SaaS. The key point is that a single vulnerability or misconfiguration can lead to a compromise across an entire provider’s cloud.

1.7. Threat Modeling

1.7.1. Threat modeling is performed once an application design is created. The goal of threat modeling is to determine any weaknesses in the application and the potential ingress, egress, and actors involved before it is introduced to production.

1.7.2. STRIDE THREAT MODEL

1.7.2.1. Spoofing: Attacker assumes identity of subject

1.7.2.2. Tampering: Data or messages are altered by an attacker

1.7.2.3. Repudiation: Illegitimate denial of an event

1.7.2.4. Information disclosure: Information is obtained without authorization

1.7.2.5. Denial of service: Attacker overloads system to deny legitimate access

1.7.2.6. Elevation of privilege: Attacker gains a privilege level above what is permitted
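Each STRIDE category is commonly paired with the security property it violates (for example, spoofing defeats authentication). The short sketch below encodes that pairing and expands it into a review checklist for each component of a design; the component names are hypothetical, and a real threat model would also weigh data flows and trust boundaries.

```python
# Each STRIDE threat mapped to the security property it violates.
STRIDE = {
    "Spoofing": "authentication",
    "Tampering": "integrity",
    "Repudiation": "non-repudiation",
    "Information disclosure": "confidentiality",
    "Denial of service": "availability",
    "Elevation of privilege": "authorization",
}


def threat_checklist(components: list[str]) -> list[str]:
    """Produce one review item per (component, STRIDE threat) pair."""
    return [
        f"{component}: {threat} (threatens {prop})"
        for component in components
        for threat, prop in STRIDE.items()
    ]


if __name__ == "__main__":
    for item in threat_checklist(["Login API", "Customer database"]):
        print(item)
```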

1.7.3. APPROVED APPLICATION PROGRAMMING INTERFACES (APIs)

1.7.3.1. Benefits of API

1.7.3.1.1. Programmatic control and access

1.7.3.1.2. Automation

1.7.3.1.3. Integration with third-party tools

1.7.3.2. The CSP must ensure that a formal approval process is in place for all APIs (internal and external).

1.7.4. SOFTWARE SUPPLY CHAIN (API) MANAGEMENT

1.7.4.1. Consuming software that is developed by a third party, or accessed with or through third-party libraries, without a clear understanding of where that software and code originated leads to complex and highly dynamic software interactions among services and systems, both within the organization and between organizations via the cloud.

1.7.4.2. It is important to assess all code and services for proper and secure functioning, no matter where they are sourced.
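One basic way to act on this is to pin the cryptographic hash of every third-party artifact you consume and refuse anything that does not match. The sketch below assumes a hypothetical manifest of pinned SHA-256 digests; the artifact name is illustrative, and the digest shown is simply the SHA-256 of the bytes `b"abc"` so the example can be checked. Hash pinning confirms you received the exact artifact you reviewed; it does not by itself show that the artifact is safe.

```python
import hashlib
import hmac

# Hypothetical manifest: artifact name -> pinned SHA-256 digest (hex).
PINNED_SHA256 = {
    "example-lib-1.0.tar.gz":
        "ba7816bf8f01cfea414140de5dae2223b00361a396177a9cb410ff61f20015ad",
}


def verify_artifact(name: str, data: bytes,
                    manifest: dict[str, str] = PINNED_SHA256) -> bool:
    """Return True only if `name` is pinned and `data` matches its digest."""
    expected = manifest.get(name)
    if expected is None:
        return False  # unknown artifacts are rejected, not trusted by default
    actual = hashlib.sha256(data).hexdigest()
    return hmac.compare_digest(actual, expected.lower())
```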

1.7.5. SECURING OPEN SOURCE SOFTWARE

1.7.5.1. Software that has been openly tested and reviewed by the community at large is considered by many security professionals to be more secure than software that has not undergone such a process.

1.8. Identity and Access Management (IAM)

1.8.1. Identity and Access Management (IAM) includes people, processes, and systems that are used to manage access to enterprise resources by ensuring that the identity of an entity is verified and then granting the correct level of access based on the protected resource, this assured identity, and other contextual information

1.8.2. IDENTITY MANAGEMENT

1.8.2.1. Identity management is a broad administrative area that deals with identifying individuals in a system and controlling their access to resources within that system by associating user rights and restrictions with the established identity.

1.8.3. ACCESS MANAGEMENT

1.8.3.1. Authentication identifies the individual and ensures that he is who he claims to be. It establishes identity by asking, “Who are you?” and “How do I know I can trust you?”

1.8.3.2. Authorization evaluates “What do you have access to?” after authentication occurs.

1.8.3.3. Policy management establishes the security and access policies based on business needs and degree of acceptable risk.

1.8.3.4. Federation is an association of organizations that come together to exchange information as appropriate about their users and resources in order to enable collaborations and transactions

1.8.3.4.1. Federated Identity Management

1.8.3.5. Identity repository includes the directory services for the administration of user account attributes.
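The split between authentication (“Who are you?”) and authorization (“What do you have access to?”) can be sketched as two separate checks against an identity repository. Everything below is hypothetical: the in-memory repository, the role names, and the permission strings. Passwords are stored as salted PBKDF2-HMAC-SHA256 hashes via the standard library; the iteration count is an assumed value to tune for your environment.

```python
import hashlib
import hmac
import secrets

ITERATIONS = 200_000  # assumed work factor; tune for your hardware

# Hypothetical policy: role -> permissions granted by that role.
ROLE_PERMISSIONS = {
    "finance-analyst": {"reports:read"},
    "finance-admin": {"reports:read", "reports:write"},
}


def _hash(password: str, salt: bytes) -> bytes:
    return hashlib.pbkdf2_hmac("sha256", password.encode(), salt, ITERATIONS)


class IdentityRepository:
    """Hypothetical in-memory identity store: user -> (salt, hash, roles)."""

    def __init__(self) -> None:
        self._users: dict[str, tuple[bytes, bytes, set[str]]] = {}

    def add_user(self, username: str, password: str, roles: set[str]) -> None:
        salt = secrets.token_bytes(16)
        self._users[username] = (salt, _hash(password, salt), set(roles))

    def authenticate(self, username: str, password: str) -> bool:
        """Authentication: is this really the claimed user?"""
        record = self._users.get(username)
        if record is None:
            return False
        salt, stored, _ = record
        return hmac.compare_digest(_hash(password, salt), stored)

    def authorize(self, username: str, permission: str) -> bool:
        """Authorization: does an (already authenticated) user hold this permission?"""
        record = self._users.get(username)
        if record is None:
            return False
        roles = record[2]
        return any(permission in ROLE_PERMISSIONS.get(role, set()) for role in roles)
```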

1.9. Multi-Factor Authentication

1.9.1. adds an extra level of protection to verify the legitimacy of a transaction.

1.9.2. What they know (e.g., password)

1.9.3. What they have (e.g., a token that displays a random one-time code)

1.9.4. What they are (e.g., biometrics)

1.9.5. Step-up authentication is an additional factor or procedure that validates a user’s identity, normally prompted by high-risk transactions or violations according to policy rules. Methods:

1.9.5.1. Challenge questions

1.9.5.2. Out-of-band authentication (a call or SMS text message to the end user)

1.9.5.3. Dynamic knowledge-based authentication (questions unique to the end user)
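A common form of “what they have” is an authenticator app or hardware token showing a time-based one-time password (TOTP, RFC 6238), which is HOTP (RFC 4226) driven by the current 30-second time step. The sketch below follows those RFCs using only the standard library; the one-step clock-drift window in `verify_totp` is an assumed policy choice, and a production system would also need rate limiting and replay protection.

```python
import hashlib
import hmac
import struct
import time
from typing import Optional


def hotp(key: bytes, counter: int, digits: int = 6) -> str:
    """HOTP (RFC 4226): HMAC-SHA1 over the counter, then dynamic truncation."""
    mac = hmac.new(key, struct.pack(">Q", counter), hashlib.sha1).digest()
    offset = mac[-1] & 0x0F
    code = struct.unpack(">I", mac[offset:offset + 4])[0] & 0x7FFFFFFF
    return str(code % 10 ** digits).zfill(digits)


def totp(key: bytes, at: Optional[float] = None, step: int = 30, digits: int = 6) -> str:
    """TOTP (RFC 6238): HOTP with counter = floor(unix_time / step)."""
    now = time.time() if at is None else at
    return hotp(key, int(now // step), digits)


def verify_totp(key: bytes, submitted: str, at: Optional[float] = None,
                step: int = 30, window: int = 1) -> bool:
    """Accept codes from the current step or up to `window` steps either side."""
    now = time.time() if at is None else at
    return any(
        hmac.compare_digest(totp(key, now + drift * step, step, len(submitted)), submitted)
        for drift in range(-window, window + 1)
    )
```

With the RFC test secret `b"12345678901234567890"`, `hotp(key, 0)` yields `755224`, matching RFC 4226's published test values.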

1.10. Supplemental Security Devices

1.10.1. Supplemental security devices add elements and layers to a defense-in-depth architecture.

1.10.2. WAF

1.10.2.1. A Web Application Firewall (WAF) is a layer-7 firewall that can understand HTTP traffic.

1.10.2.2. A cloud WAF can be extremely effective in the case of a denial-of-service (DoS) attack; several cases exist where a cloud WAF was used to successfully thwart DoS attacks of 350 Gbps and 450 Gbps.

1.10.3. DAM

1.10.3.1. Database Activity Monitoring (DAM) is a layer-7 monitoring device that understands SQL commands.

1.10.3.2. DAM can be agent-based (ADAM) or network-based (NDAM).

1.10.3.3. A DAM can be used to detect and stop malicious commands from executing on an SQL server.

1.10.4. XML Gateway

1.10.4.1. XML gateways transform how services and sensitive data are exposed as APIs to developers, mobile users, and cloud users.

1.10.4.2. XML gateways can be either hardware or software.

1.10.4.3. XML gateways can implement security controls such as DLP, antivirus, and anti-malware services.

1.10.5. Firewalls

1.10.5.1. Firewalls can be distributed or configured across the SaaS, PaaS, and IaaS landscapes; these can be owned and operated by the provider or can be outsourced to a third party for the ongoing management and maintenance.

1.10.5.2. Firewalls implemented in the cloud will typically need to be installed as software components (e.g., host-based firewalls).

1.10.6. API Gateway

1.10.6.1. An API gateway is a device that filters API traffic; it can be installed as a proxy or as a specific part of your application stack before data is processed.

1.10.6.2. An API gateway can implement access control, rate limiting, logging, metrics, and security filtering.
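Rate limiting at an API gateway is commonly implemented with a token bucket: each client's bucket refills at a fixed rate up to a maximum burst size, and each request spends one token. The sketch below pairs that with a simple API-key check and a request log; the `ApiGateway` class, the key values, and the status codes returned (401 for an unknown key, 429 when throttled) are illustrative assumptions, not any particular product's behavior.

```python
import time
from typing import Callable


class TokenBucket:
    """Refills `rate` tokens per second, holding at most `capacity` tokens."""

    def __init__(self, rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.rate = rate
        self.capacity = capacity
        self.tokens = capacity
        self.clock = clock
        self.last = clock()

    def allow(self, cost: float = 1.0) -> bool:
        now = self.clock()
        # Add tokens earned since the last call, capped at capacity.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= cost:
            self.tokens -= cost
            return True
        return False


class ApiGateway:
    """Hypothetical gateway: access control, per-key rate limiting, logging."""

    def __init__(self, api_keys: set[str], rate: float, capacity: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self.api_keys = set(api_keys)
        self.rate = rate
        self.capacity = capacity
        self.clock = clock
        self.buckets: dict[str, TokenBucket] = {}
        self.log: list[tuple[str, int]] = []  # (path, status); keys are not logged

    def handle(self, api_key: str, path: str) -> int:
        if api_key not in self.api_keys:
            status = 401  # access control
        else:
            if api_key not in self.buckets:
                self.buckets[api_key] = TokenBucket(self.rate, self.capacity, self.clock)
            status = 200 if self.buckets[api_key].allow() else 429  # rate limiting
        self.log.append((path, status))  # logging / metrics
        return status
```

Injecting the clock keeps the limiter deterministic under test; production code can rely on the `time.monotonic` default.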

1.11. Cryptography

1.11.1. In Transit

1.11.1.1. Transport Layer Security (TLS): A protocol that ensures privacy between communicating applications and their users on the Internet.

1.11.1.2. Secure Sockets Layer (SSL): The predecessor of TLS, originally the standard security technology for establishing an encrypted link between a web server and a browser so that all data passed between them remains private and integral. All versions of SSL are now deprecated, and TLS should be used instead.

1.11.1.3. VPN (e.g., IPsec gateway): A network constructed over public infrastructure, usually the Internet, to connect to a private network, such as a company’s internal network.
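For encryption in transit, a client should use TLS with certificate and hostname verification and refuse obsolete protocol versions. A minimal sketch with Python's standard `ssl` module follows; requiring TLS 1.2 as the floor is an assumed policy choice, and `example.com` in the usage comment is a placeholder host.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """TLS client context: verifies certificates and hostnames, TLS 1.2 minimum."""
    ctx = ssl.create_default_context()  # loads system CAs; CERT_REQUIRED + check_hostname
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # rejects SSL 3.0, TLS 1.0, and TLS 1.1
    return ctx


def negotiated_version(host: str, port: int = 443, timeout: float = 10.0) -> str:
    """Open a verified TLS connection and report the negotiated protocol version."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=timeout) as sock:
        with ctx.wrap_socket(sock, server_hostname=host) as tls:
            return tls.version()


# Usage (requires network access): negotiated_version("example.com")
```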

1.11.2. At rest

1.11.2.1. Whole instance encryption: A method for encrypting all of the data associated with the operation and use of a virtual machine, such as the data stored at rest on the volume, disk I/O, and all snapshots created from the volume, as well as all data in transit moving between the virtual machine and the storage volume.

1.11.2.2. Volume encryption: A method for encrypting a single volume on a drive. Parts of the hard drive are left unencrypted when using this method. (If the entire contents of the drive must be encrypted, full disk encryption should be used instead.)

1.11.2.3. File/directory encryption: A method for encrypting a single file/directory on a drive.

1.11.3. Encryption is not always the most appropriate or functional choice for protecting a system, due to design, usage, and performance concerns. In those cases, additional technologies and approaches become necessary:

1.11.3.1. Tokenization generates a token (often a string of characters) that substitutes for sensitive data; the sensitive data itself is stored in a secured location such as a database.

1.11.3.2. Data masking is a technology that keeps the format of a data string but alters the content.

1.11.3.3. Sandboxing isolates and uses only the intended components while maintaining appropriate separation from the remaining components (e.g., storing personal information in one sandbox and corporate information in another). Within cloud environments, sandboxing is typically used to run untested or untrusted code in a tightly controlled environment.
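The difference between tokenization and masking can be sketched briefly: a token is a random stand-in that can be exchanged back for the original only through the vault, while masking keeps the format but irreversibly hides content. The `TokenVault` class and the `tok_` prefix are hypothetical, and a real vault would be a hardened, access-controlled datastore rather than two in-memory dictionaries.

```python
import secrets


class TokenVault:
    """Hypothetical token vault mapping random tokens to sensitive values."""

    def __init__(self) -> None:
        self._by_token: dict[str, str] = {}
        self._by_value: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        """Return a random token for `value`, reusing one if it was seen before."""
        if value in self._by_value:
            return self._by_value[value]
        token = "tok_" + secrets.token_hex(8)  # no mathematical link to the value
        self._by_token[token] = value
        self._by_value[value] = token
        return token

    def detokenize(self, token: str) -> str:
        """Only callers with vault access can recover the original value."""
        return self._by_token[token]


def mask_card_number(pan: str) -> str:
    """Masking: keep length, separators, and the last four digits; hide the rest."""
    remaining = sum(ch.isdigit() for ch in pan)
    masked = []
    for ch in pan:
        if ch.isdigit():
            masked.append(ch if remaining <= 4 else "*")
            remaining -= 1
        else:
            masked.append(ch)
    return "".join(masked)


if __name__ == "__main__":
    vault = TokenVault()
    token = vault.tokenize("4111111111111234")
    print(token)                                    # tok_ plus 16 random hex characters
    print(vault.detokenize(token))                  # 4111111111111234
    print(mask_card_number("4111-1111-1111-1234"))  # ****-****-****-1234
```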

1.12. Application Virtualization

1.12.1. Application virtualization creates a layer of encapsulation between an application and the underlying operating system.

1.12.2. Examples

1.12.2.1. Wine allows some Microsoft Windows applications to run on a Linux platform.

1.12.2.2. Windows XP Mode in Windows 7

1.12.3. Assurance and validation techniques

1.12.3.1. Software assurance: Software assurance encompasses the development and implementation of methods and processes for ensuring that software functions as intended while mitigating the risks of vulnerabilities, malicious code, or defects that could bring harm to the end user.

1.12.3.2. Verification and validation: For project and development teams to have confidence and to follow best-practice guidelines, coding must be verified and validated at each stage of the development process. Coupled with relevant segregation of duties and appropriate independent review, verification and validation aim to ensure that the initial concept and the delivered product are complete.

1.12.3.2.1. Verify that requirements are specified and measurable.

1.12.3.2.2. Ensure that test plans and documentation are comprehensive, consistently applied to all modules and subsystems, and integrated with the final product.

1.12.3.2.3. Verification and validation should be performed at each stage of the SDLC and in line with change management components.

1.13. Cloud-Based Functional Data

1.13.1. The data collected, processed, and transferred by the separate functions of an application can have separate legal implications, depending on how that data is used, presented, and stored.

1.13.2. Separating the functions and services that have legal implications from those that do not is essential to the overall security posture of your cloud-based systems and to the enterprise’s need to meet contractual, legal, and regulatory requirements.

1.14. Cloud-Secure Development Lifecycle

1.14.1. Purpose of a cloud-secure development lifecycle: Understanding that security must be “baked in” from the very onset of an application being created or consumed by an organization leads to a higher reasonable assurance that applications are properly secured before the organization uses them.

1.14.2. ISO/IEC 27034-1

1.14.2.1. “Information technology – Security techniques – Application security – Part 1: Overview and concepts”: Defines concepts, frameworks, and processes to help organizations integrate security within their software development lifecycle.

1.14.2.2. ORGANIZATIONAL NORMATIVE FRAMEWORK (ONF)

1.14.2.2.1. Business context: Includes all application security policies, standards, and best practices adopted by the organization

1.14.2.2.2. Regulatory context: Includes all standards, laws, and regulations that affect application security

1.14.2.2.3. Technical context: Includes required and available technologies that are applicable to application security

1.14.2.2.4. Specifications: Documents the organization’s IT functional requirements and the solutions that are appropriate to address these requirements

1.14.2.2.5. Roles, responsibilities, and qualifications: Documents the actors within an organization who are related to IT applications

1.14.2.2.6. Processes: Related to application security

1.14.2.2.7. Application security control library: Contains the approved controls that are required to protect an application based on the identified threats, the context, and the targeted level of trust

1.14.2.3. APPLICATION NORMATIVE FRAMEWORK (ANF)

1.14.2.3.1. The ANF maintains the applicable portions of the ONF that are needed to enable a specific application to achieve a required level of security or the targeted level of trust. The ONF-to-ANF relationship is one-to-many: one ONF is used as the basis for creating multiple ANFs.

1.14.2.4. APPLICATION SECURITY MANAGEMENT PROCESS (ASMP)

1.14.2.4.1. The ASMP manages and maintains each ANF.

1.14.2.4.2. Specifying the application requirements and environment

1.14.2.4.3. Assessing application security risks

1.14.2.4.4. Creating and maintaining the ANF

1.14.2.4.5. Provisioning and operating the application

1.14.2.4.6. Auditing the security of the application

1.15. Application Security Testing

1.15.1. STATIC APPLICATION SECURITY TESTING (SAST)

1.15.1.1. A white-box test in which the application’s source code, byte code, and binaries are analyzed without executing the application code.

1.15.1.2. Goal: determine coding errors and omissions that are indicative of security vulnerabilities

1.15.1.3. SAST can be used to find cross-site scripting errors, SQL injection, buffer overflows, unhandled error conditions, as well as potential back doors.

1.15.1.4. SAST typically delivers more comprehensive results than those found using Dynamic Application Security Testing (DAST)
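As a toy illustration of static analysis, the sketch below uses Python's standard `ast` module to inspect source code without running it, flagging calls to `eval`/`exec` and `execute()` calls whose SQL argument is assembled dynamically (a binary operation such as `+` or `%`, a `.format()` call, or an f-string). These two rules are assumptions chosen for the example; real SAST tools apply far larger rule sets and data-flow analysis.

```python
import ast

DANGEROUS_CALLS = {"eval", "exec"}


def _is_dynamic_string(node: ast.AST) -> bool:
    """True for f-strings, binary operations (e.g. + or %), and .format() calls."""
    if isinstance(node, (ast.JoinedStr, ast.BinOp)):
        return True
    return (isinstance(node, ast.Call)
            and isinstance(node.func, ast.Attribute)
            and node.func.attr == "format")


def scan_source(source: str, filename: str = "<input>") -> list[str]:
    """Parse (never execute) the code and report suspicious call sites by line."""
    findings = []
    for node in ast.walk(ast.parse(source, filename=filename)):
        if not isinstance(node, ast.Call):
            continue
        func = node.func
        if isinstance(func, ast.Name) and func.id in DANGEROUS_CALLS:
            findings.append((node.lineno, f"call to {func.id}()"))
        elif (isinstance(func, ast.Attribute) and func.attr == "execute"
              and node.args and _is_dynamic_string(node.args[0])):
            findings.append((node.lineno, "SQL built dynamically in execute()"))
    return [f"{filename}:{line}: {msg}" for line, msg in sorted(findings)]
```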

1.15.2. DYNAMIC APPLICATION SECURITY TESTING (DAST)

1.15.2.1. A black-box test in which the tool must discover individual execution paths in the application being analyzed.

1.15.2.2. DAST is mainly considered effective when testing exposed HTTP and HTML interfaces of web applications.

1.15.3. RUNTIME APPLICATION SELF PROTECTION (RASP)

1.15.3.1. RASP is generally considered to focus on applications that have self-protection capabilities built into their runtime environments, which have full insight into application logic, configuration, and data and event flows.

1.15.4. VULNERABILITY ASSESSMENTS AND PENETRATION TESTING

1.15.4.1. Both play a significant role in supporting the security of applications and systems before an application goes into a production environment and while it is in production.

1.15.4.2. Vulnerability assessments are often performed as white-box tests, where the assessor knows the application and has complete knowledge of the environment the application runs in.

1.15.4.3. Penetration testing is a process used to collect information related to system vulnerabilities and exposures, with the goal of actively exploiting the vulnerabilities in the system. Penetration testing is often a black-box test.

1.15.4.4. SaaS providers are unlikely to grant clients permission to perform penetration tests. Generally, only the SaaS provider’s own resources are permitted to perform penetration tests on the SaaS application.

1.15.5. SECURE CODE REVIEWS

1.15.5.1. informal

1.15.5.1.1. one or more individuals examining sections of the code, looking for vulnerabilities.

1.15.5.2. formal

1.15.5.2.1. trained teams of reviewers that are assigned specific roles as part of the review process, as well as the use of a tracking system to report on vulnerabilities found.

1.15.6. OPEN WEB APPLICATION SECURITY PROJECT (OWASP) RECOMMENDATIONS

1.15.6.1. Identity management testing

1.15.6.2. Authentication testing

1.15.6.3. Authorization testing

1.15.6.4. Session management testing

1.15.6.5. Input validation testing

1.15.6.6. Testing for error handling

1.15.6.7. Testing for weak cryptography

1.15.6.8. Business logic testing

1.15.6.9. Client-side testing

2. Operations

2.1. Modern Datacenters and Cloud Service Offerings

2.1.1. Providers must take into account the challenges and complexities associated with differing outlooks, drivers, requirements, and services.

2.2. Factors That Impact Datacenter Design

2.2.1. Legal and regulatory requirements, because the geographic location of the datacenter determines its jurisdiction

2.2.2. Contingency, failover, and redundancy arrangements involving other datacenters in different locations

2.2.3. The type of services (IaaS, PaaS, and SaaS) the cloud will provide

2.2.4. Automating service enablement

2.2.5. Consolidation of monitoring capabilities

2.2.6. Reducing mean time to repair (MTTR)

2.2.7. Increasing mean time between failures (MTBF)
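The last two factors pull in the same direction: steady-state availability is commonly estimated as MTBF / (MTBF + MTTR), so shortening repairs and lengthening the time between failures both raise it. A minimal Python sketch of that formula follows; the hour figures are hypothetical and chosen only for illustration.

```python
def availability(mtbf_hours: float, mttr_hours: float) -> float:
    """Steady-state availability: fraction of time the system is operational."""
    if mtbf_hours <= 0 or mttr_hours < 0:
        raise ValueError("MTBF must be positive and MTTR non-negative")
    return mtbf_hours / (mtbf_hours + mttr_hours)


# Hypothetical figures for illustration only.
baseline = availability(mtbf_hours=1000, mttr_hours=10)
faster_repair = availability(mtbf_hours=1000, mttr_hours=2)    # reduce MTTR
fewer_failures = availability(mtbf_hours=5000, mttr_hours=10)  # increase MTBF

for label, a in [("baseline", baseline), ("lower MTTR", faster_repair),
                 ("higher MTBF", fewer_failures)]:
    print(f"{label:12s} {a:.5%}")
```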

2.2.8. LOGICAL DESIGN

2.2.8.1. All logical design decisions should be mapped to specific compliance requirements, such as logging, retention periods, and reporting capabilities for auditing. Ongoing monitoring systems also need to be designed in to enhance effectiveness.

2.2.8.2. Multi-Tenancy

2.2.8.2.1. The multi-tenant nature of a cloud deployment requires a logical design that partitions and segregates client/customer data.

2.2.8.2.2. Multi-tenant networks, in a nutshell, are datacenter networks that are logically divided into smaller, isolated networks. They share the physical networking gear but operate on their own network without visibility into the other logical networks.

2.2.8.3. Cloud Management Plane

2.2.8.3.1. The cloud management plane needs to be logically isolated, although physical isolation may offer a more secure solution. It provides:

2.2.8.4. Virtualization Technology

2.2.8.4.1. Communications access (permitted and not permitted), user access profiles, and permissions, including API access

2.2.8.4.2. Secure communication within and across the management plane

2.2.8.4.3. Secure storage (encryption, partitioning, and key management)

2.2.8.4.4. Backup and disaster recovery along with failover and replication

2.2.8.5. Other Logical Design Considerations

2.2.8.5.1. Design for segregation of duties so datacenter staff can access only the data needed to do their job.

2.2.8.5.2. Design for monitoring of network traffic. The management plane should also be monitored for compromise and abuse. Hypervisor and virtualization technology need to be considered when designing the monitoring capability. Some hypervisors may not allow enough visibility for adequate monitoring. The level of monitoring will depend on the type of cloud deployment.

2.2.8.5.3. Automation and the use of APIs are essential for a successful cloud deployment. The logical design should include the secure use of APIs and a method to log API use.

2.2.8.5.4. Logical design decisions should be enforceable and monitored. For example, access control should be implemented with an identity and access management system that can be audited.

2.2.8.5.5. Consider the use of software-defined networking tools to support logical isolation.

2.2.8.6. Logical Design Levels

2.2.8.6.1. Logical design for data separation needs to be incorporated at the following levels

2.2.8.7. Service Model

2.2.8.7.1. IaaS: Many of the hypervisor features can be used to design and implement security.

2.2.8.7.2. PaaS: Logical design features of the underlying platform and database can be leveraged to implement security.

2.2.8.7.3. SaaS: The same as above, plus additional measures in the application can be used to enhance security.

2.2.9. PHYSICAL DESIGN

2.2.9.1. Considerations

2.2.9.1.1. Does the physical design protect against environmental threats such as flooding, earthquakes, and storms?

2.2.9.1.2. Does the physical design include provisions for access to resources during disasters to ensure the datacenter and its personnel can continue to operate safely? Examples include

2.2.9.1.3. Are there physical security design features that limit access to authorized personnel? Some examples include

2.2.9.2. Building or Buying

2.2.9.2.1. Building the datacenter gives the organization the most control over its design and security. However, building a robust datacenter requires a significant investment.

2.2.9.2.2. Buying a datacenter or leasing space in a datacenter may be a cheaper alternative. With this option, there may be limitations on design inputs. The leasing organization will need to include all security requirements in the RFP and contract.

2.2.9.2.3. When using a shared datacenter, physical separation of servers and equipment will need to be included in the design.

2.2.9.3. Datacenter Design Standards

2.2.9.3.1. BICSI (Building Industry Consulting Service International Inc.): The ANSI/BICSI 002-2014 standard covers cabling design and installation

2.2.9.3.2. IDCA (The International Datacenter Authority): The Infinity Paradigm covers datacenter location, facility structure, and infrastructure and applications

2.2.9.3.3. NFPA (The National Fire Protection Association): NFPA 75 and 76 standards specify how hot/cold aisle containment is to be carried out, and NFPA standard 70 requires the implementation of an emergency power off button to protect first responders in the datacenter in case of emergency

2.2.9.3.4. Uptime Institute’s Datacenter Site Infrastructure Tier Standard Topology

2.2.10. ENVIRONMENTAL DESIGN CONSIDERATIONS

2.2.10.1. Temperature and Humidity Guidelines

2.2.10.1.1. The American Society of Heating, Refrigerating and Air-Conditioning Engineers (ASHRAE)

2.2.10.1.2. Temperature control locations
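As a rough illustration of applying temperature guidelines, the sketch below flags server inlet readings outside a configurable range. The defaults of 18 to 27 °C are the commonly cited ASHRAE recommended range for most IT equipment classes; treat them as an assumption and confirm them against current ASHRAE guidance and the equipment vendor's specifications. The sensor names and readings are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class InletReading:
    sensor_id: str
    temp_c: float  # server air-inlet temperature, degrees Celsius


def out_of_range(readings: list[InletReading],
                 low_c: float = 18.0, high_c: float = 27.0) -> list[InletReading]:
    """Return readings outside [low_c, high_c].

    Defaults assume the commonly cited ASHRAE recommended range; confirm
    against current ASHRAE guidance and vendor specifications.
    """
    return [r for r in readings if not (low_c <= r.temp_c <= high_c)]


# Hypothetical sensors and readings.
readings = [
    InletReading("rack-01-top", 24.5),
    InletReading("rack-07-mid", 29.1),
    InletReading("rack-12-low", 16.8),
]
for r in out_of_range(readings):
    print(f"ALERT {r.sensor_id}: {r.temp_c:.1f} C outside the configured range")
```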

2.2.10.2. HVAC Considerations

2.2.10.2.1. The lower the temperature maintained in the datacenter, the greater the monthly cooling costs.

2.2.10.3. Air Management for Datacenters

2.2.10.3.1. Air management covers all the design and configuration details that minimize or eliminate mixing between the cooling air supplied to the equipment and the hot air rejected from it.

2.2.10.3.2. key design issues: configuration of

2.2.10.4. Cable Management

2.2.10.4.1. Under-floor and overhead obstructions, which often interfere with the distribution of cooling air. Such interferences can significantly reduce the air handlers’ airflow and negatively affect the air distribution.

2.2.10.4.2. Cable congestion in raised-floor plenums, which can sharply reduce the total airflow as well as degrade the airflow distribution through the perforated floor tiles.

2.2.10.4.3. Instituting a cable mining program (i.e., a program to remove abandoned or inoperable cables) as part of an ongoing cable management plan will help optimize the air delivery performance of datacenter cooling systems.

2.2.10.5. Aisle Separation and Containment

2.2.10.5.1. Strict hot aisle/cold aisle configurations can significantly increase the air-side cooling capacity of a datacenter’s cooling system

2.2.10.5.2. The rows of racks are placed back-to-back, and holes through the rack (vacant equipment slots) are blocked off on the intake side to create barriers that reduce recirculation. Additionally, cable openings in raised floors and ceilings should be sealed as tightly as possible.

2.2.10.5.3. One recommended design configuration supplies cool air via an under-floor plenum to the racks; the air then passes through the equipment in the rack and enters a separated, semi-sealed area for return to an overhead plenum

2.2.10.6. HVAC Design Considerations

2.2.10.6.1. The local climate will impact the HVAC design requirements.

2.2.10.6.2. Redundant HVAC systems should be part of the overall design.

2.2.10.6.3. The HVAC system should provide air management that separates the cool air from the heat exhaust of the servers.

2.2.10.6.4. Consideration should be given to energy-efficient systems.

2.2.10.6.5. Backup power supplies should be able to run the HVAC system for as long as the datacenter is required to remain operational during a power outage.

2.2.10.6.6. The HVAC system should filter contaminants and dust.

2.2.11. MULTI-VENDOR PATHWAY CONNECTIVITY (MVPC)

2.2.11.1. There should be redundant connectivity from multiple providers into the datacenter. This will help prevent a single point of failure for network connectivity.

2.2.11.2. The redundant path should provide the minimum expected connection speed for datacenter operations.
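One way to check the second point is an N-1 test: remove each provider in turn and confirm the remaining links still meet the minimum speed. The Python sketch below assumes that a provider failure takes down all of that provider's links at once and that link capacities simply add; the provider names and figures are hypothetical. It does not detect providers that share the same physical conduit or building entry, which a real pathway review must also consider.

```python
def n_minus_one_check(links_mbps: dict[str, float], min_mbps: float) -> dict[str, bool]:
    """For each provider, report whether the remaining providers' links still
    meet min_mbps if that provider fails completely."""
    total = sum(links_mbps.values())
    return {provider: total - capacity >= min_mbps
            for provider, capacity in links_mbps.items()}


# Hypothetical providers and capacities (Mbps), with a 5,000 Mbps minimum.
links = {"carrier-a": 10_000, "carrier-b": 1_000}
for provider, ok in n_minus_one_check(links, min_mbps=5_000).items():
    status = "OK" if ok else "BELOW MINIMUM"
    print(f"if {provider} fails: {status}")
```

In this example, losing carrier-a leaves only 1,000 Mbps, so the backup path would not meet the stated minimum.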

2.2.12. IMPLEMENTING PHYSICAL INFRASTRUCTURE FOR CLOUD ENVIRONMENTS

2.2.12.1. Cloud computing removes the traditional silos within the datacenter and introduces a new level of flexibility and scalability to the IT organization.

2.3. Enterprise Operations

2.3.1. Large enterprises need to isolate HR records, finance, customer credit card details, and so on.

2.3.2. Resources externally exposed for outsourced projects require separation from internal corporate environments.

2.3.3. Healthcare organizations must ensure patient record confidentiality.

2.3.4. Universities need to partition student user services from business operations, student administrative systems, and commercial or sensitive research projects.

2.3.5. Service providers must separate billing, CRM, payment systems, reseller portals, and hosted environments.

2.3.6. Financial organizations need to securely isolate client records and investment, wholesale, and retail banking services.

2.3.7. Government agencies must partition revenue records, judicial data, social services, operational systems, and so on.

2.4. Secure Configuration of Hardware

2.4.1. Private and public cloud providers must enable all customer data, communication, and application environments to be securely separated, protected, and isolated from other tenants. To accomplish these goals, all hardware inside the datacenter will need to be securely configured. This includes:

2.4.1.1. BEST PRACTICES FOR SERVERS

2.4.1.1.1. Secure build: Follow the operating system vendor’s specific recommendations for securely deploying its operating system.

2.4.1.1.2. Secure initial configuration: This may mean many different things depending on a number of variables, such as OS vendor, operating environment, business requirements, regulatory requirements, risk assessment, and risk appetite, as well as the workload(s) to be hosted on the system.

2.4.1.1.3. Secure ongoing configuration maintenance: Achieved through a variety of mechanisms, some vendor-specific, some not.

2.4.1.2. BEST PRACTICES FOR STORAGE CONTROLLERS

2.4.1.2.1. Initiator: The consumer of storage, typically a server with an adapter card in it called a Host Bus Adapter (HBA). The initiator “initiates” a connection over the fabric to one or more ports on your storage system, which are called target ports.

2.4.1.2.2. Target: The ports on your storage system that deliver storage volumes (called target devices or LUNs) to the initiators.

2.4.1.2.3. iSCSI traffic should be segregated from general traffic. Layer-2 VLANs are a particularly good way to implement this segregation.

2.4.1.2.4. Oversubscription is permissible on general-purpose LANs, but you should not use an oversubscribed configuration for iSCSI.

2.4.1.2.5. iSCSI Implementation Considerations
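Oversubscription can be quantified as the ratio of total edge-port bandwidth to total uplink bandwidth. The hypothetical Python helpers below compute that ratio and apply the rule above (no oversubscription for iSCSI); they are a simplified model that ignores a switch's internal fabric architecture and real traffic patterns.

```python
def oversubscription_ratio(edge_ports_gbps: list[float],
                           uplinks_gbps: list[float]) -> float:
    """Total edge-port bandwidth divided by total uplink bandwidth.

    A ratio above 1.0 means the edge ports could, in aggregate, offer more
    traffic than the uplinks can carry.
    """
    uplink = sum(uplinks_gbps)
    if uplink <= 0:
        raise ValueError("uplink bandwidth must be positive")
    return sum(edge_ports_gbps) / uplink


def check_iscsi_fabric(edge_ports_gbps: list[float], uplinks_gbps: list[float]) -> str:
    ratio = oversubscription_ratio(edge_ports_gbps, uplinks_gbps)
    if ratio > 1.0:
        return f"oversubscribed ({ratio:.1f}:1): not suitable for iSCSI"
    return f"not oversubscribed ({ratio:.1f}:1)"


print(check_iscsi_fabric([10.0] * 48, [40.0] * 2))  # 480 / 80 -> 6.0:1
print(check_iscsi_fabric([10.0] * 8, [40.0] * 2))   # 80 / 80 -> 1.0:1
```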

2.4.1.3. NETWORK CONTROLLERS BEST PRACTICES

2.4.1.3.1. Major differences between physical and virtual switches

2.4.1.3.2. With a physical switch, when a dedicated network cable or switch port goes bad, only one server goes down.

2.4.1.3.3. With virtualization, one cable could provide connectivity to 10 or more virtual machines (VMs), so a single failure can cause a loss of connectivity to multiple VMs.

2.4.1.3.4. Connecting multiple VMs requires more bandwidth, which must be handled by the virtual switch.

2.4.1.4. VIRTUAL SWITCHES BEST PRACTICES

2.4.1.4.1. Redundancy is achieved by assigning at least two physical NICs to a virtual switch with each NIC connecting to a different physical switch.

2.4.1.4.2. Network Isolation

2.4.1.4.3. The network used to move live virtual machines from one host to another often carries that traffic in clear text, so it may be possible to “sniff” the data or perform a man-in-the-middle attack during a live migration.

2.4.1.4.4. When dealing with internal and external networks, always create a separate isolated virtual switch with its own physical network interface cards and never mix internal and external traffic on a virtual switch.

2.4.1.4.5. Lock down access to your virtual switches so that an attacker cannot move VMs from one network to another and so that VMs do not straddle an internal and external network.

2.4.1.4.6. For a better virtual network security strategy, use security applications that are designed specifically for virtual infrastructure and integrate them directly into the virtual networking layer. This includes network intrusion detection and prevention systems, monitoring and reporting systems, and virtual firewalls that are designed to secure virtual switches and isolate VMs. You can integrate physical and virtual network security to provide complete datacenter protection.

2.4.1.4.7. If you use network-based storage such as iSCSI or Network File System (NFS), use proper authentication. For iSCSI, bidirectional (mutual) Challenge-Handshake Authentication Protocol (CHAP) authentication is best. Be sure to physically isolate storage network traffic, because it is often sent as clear text. Anyone with access to the same network could listen and reconstruct files, alter traffic, and possibly corrupt the network.
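CHAP (RFC 1994) never sends the secret over the wire: the authenticator sends a random challenge, and the peer answers with MD5(identifier || secret || challenge). In mutual CHAP each side challenges the other. The Python sketch below shows the exchange; the class name and secrets are hypothetical, and using a different secret for each direction is an assumption reflecting common guidance.

```python
import hashlib
import hmac
import os


def chap_response(identifier: int, secret: bytes, challenge: bytes) -> bytes:
    """CHAP response per RFC 1994: MD5(identifier || secret || challenge)."""
    return hashlib.md5(bytes([identifier]) + secret + challenge).digest()


class ChapAuthenticator:
    """The side that issues a challenge and verifies the peer's response."""

    def __init__(self, expected_secret: bytes) -> None:
        self.expected_secret = expected_secret
        self.identifier = 0
        self.challenge = b""

    def issue_challenge(self) -> tuple[int, bytes]:
        self.identifier = os.urandom(1)[0]
        self.challenge = os.urandom(16)  # fresh random challenge every time
        return self.identifier, self.challenge

    def verify(self, response: bytes) -> bool:
        expected = chap_response(self.identifier, self.expected_secret, self.challenge)
        return hmac.compare_digest(expected, response)  # constant-time compare


# Hypothetical secrets, one per direction.
initiator_secret = b"initiator-secret-example"
target_secret = b"target-secret-example"

# Direction 1: the target authenticates the initiator.
target_side = ChapAuthenticator(expected_secret=initiator_secret)
ident, chal = target_side.issue_challenge()
initiator_ok = target_side.verify(chap_response(ident, initiator_secret, chal))

# Direction 2: the initiator authenticates the target.
initiator_side = ChapAuthenticator(expected_secret=target_secret)
ident, chal = initiator_side.issue_challenge()
target_ok = initiator_side.verify(chap_response(ident, target_secret, chal))

print("initiator authenticated:", initiator_ok)
print("target authenticated:", target_ok)
```

CHAP only authenticates the endpoints; it does not encrypt the storage traffic itself, which is why isolating that traffic is still required.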

2.5. Installation and Configuration of Virtualization Management Tools for the Host

2.5.1. The virtualization platform will determine what management tools need to be installed on the host. The latest tools should be installed on each host, and the configuration management plan should include rules on updating these tools.

2.5.2. LEADING PRACTICES

2.5.2.1. Defense in depth: Implement the tool(s) used to manage the host as part of a larger architectural design that mutually reinforces security at every level of the enterprise. The tool(s) should be seen as a tactical element of host management, one that is linked to operational elements such as procedures and strategic elements such as policies.

2.5.2.2. Access control: Secure the tool(s) and tightly control and monitor access to them.

2.5.2.3. Auditing/monitoring: Monitor and track the use of the tool(s) throughout the enterprise to ensure proper usage is taking place.

2.5.2.4. Maintenance: Update and patch the tool(s) as required to ensure compliance with all vendor recommendations and security bulletins.

2.5.3. RUNNING A PHYSICAL INFRASTRUCTURE FOR CLOUD ENVIRONMENTS

2.5.3.1. Considerations when sharing resources include

2.5.3.1.1. Legal: Simply by sharing the environment in the cloud, you may put your data at risk of seizure. Exposing your data in an environment shared with other companies could give the government “reasonable cause” to seize your assets because another company has violated the law.

2.5.3.1.2. Compatibility: Storage services provided by one cloud vendor may be incompatible with another vendor’s services should you decide to move from one to the other.

2.5.3.1.3. Control: If information is encrypted while passing through the cloud, does the customer or cloud vendor control the encryption/decryption keys? Make sure you control the encryption/decryption keys, just as if the data were still resident in the enterprise’s own servers.

2.5.3.1.4. Log data: As more and more mission-critical processes are moved to the cloud, SaaS suppliers will have to provide log data in a real-time, straightforward manner, probably for their administrators as well as their customers’ personnel. Since the SaaS provider’s logs are internal and not necessarily accessible externally or by clients or investigators, monitoring is difficult.

2.5.3.1.5. PCI-DSS access: Since access to logs is required for Payment Card Industry Data Security Standard (PCI-DSS) compliance and may be requested by auditors and regulators, security managers need to make sure to negotiate access to the provider’s logs as part of any service agreement.

2.5.3.1.6. Upgrades and changes: Cloud applications undergo constant feature additions. The speed at which applications change in the cloud will affect both the SDLC and security. A secure SDLC may not be able to provide a security cycle that keeps up with changes that occur so quickly.

2.5.3.1.7. Failover technology: Having proper failover technology is a component of securing the cloud that is often overlooked. The company can survive if a non-mission-critical application goes offline, but this may not be true for mission-critical applications.

2.5.3.1.8. Compliance: SaaS makes the process of compliance more complicated, since it may be difficult for a customer to discern where its data resides on a network controlled by the SaaS provider, or a partner of that provider, which raises compliance issues around data privacy, segregation, and security.

2.5.3.1.9. Regulations: Compliance with government regulations is much more challenging in the SaaS environment, yet the data owner is still fully responsible for compliance.

2.5.3.1.10. Outsourcing: Outsourcing means losing significant control over data, and while this is not a good idea from a security perspective, the business ease and financial savings will continue to increase the usage of these services. You need to work with your company’s legal staff to ensure that appropriate contract terms are in place to protect corporate data and provide for acceptable service level agreements.

2.5.3.1.11. Placement of security: Cloud-based services will result in many mobile IT users accessing business data and services without traversing the corporate network. This will increase the need for enterprises to place security controls between mobile users and cloud-based services. Placing large amounts of sensitive data in a globally accessible cloud leaves organizations open to large, distributed threats. Attackers no longer have to come onto the premises to steal data, and they can find it all in the one “virtual” location.

2.5.3.1.12. Virtualization: Virtualization efficiencies in the cloud require virtual machines from multiple organizations to be co-located on the same physical resources. Although traditional datacenter security still applies in the cloud environment, physical segregation and hardware-based security cannot protect against attacks between virtual machines on the same server. Administrative access is through the Internet rather than the controlled and restricted direct or on-premises connection that is adhered to in the traditional datacenter model. This increases risk and exposure and will require stringent monitoring for changes in system control and access control restriction.

2.5.3.1.13. Virtual machine: The dynamic and fluid nature of virtual machines will make it difficult to maintain the consistency of security and ensure that records can be audited. The ease of cloning and distribution between physical servers could result in the propagation of configuration errors and other vulnerabilities. Proving the security state of a system and identifying the location of an insecure virtual machine will be challenging. The co-location of multiple virtual machines increases the attack surface and risk of virtual machine-to-virtual machine compromise.

2.5.3.1.14. Operating system and application files: In a virtualized cloud environment, operating system and application files reside on shared physical infrastructure and require system, file, and activity monitoring to give enterprise customers confidence and auditable proof that their resources have not been compromised or tampered with. Depending on the service model (most notably IaaS), the enterprise subscribes to cloud computing resources, and the responsibility for patching guest operating systems and applications falls to the subscriber rather than the cloud computing vendor. Vigilant patch maintenance is imperative; a lack of due diligence here could rapidly make the task unmanageable or impossible.

2.5.3.1.15. Data fluidity: Enterprises are often required to prove that their security compliance is in accord with regulations, standards, and auditing practices, regardless of the location of the systems at which the data resides. Data is fluid in cloud computing and may reside in on-premises physical servers, on-premises virtual machines, or off-premises virtual machines running on cloud computing resources, and this will require some rethinking on the part of auditors and practitioners alike.

2.5.4. CONFIGURING ACCESS CONTROL AND SECURE KVM

2.5.4.1. Isolated data channels: Located in each KVM port, these make it impossible for data to be transferred between connected computers through the KVM.

2.5.4.2. Tamper-warning labels on each side of the KVM: These provide clear visual evidence if the enclosure has been compromised.

2.5.4.3. Housing intrusion detection: Causes the KVM to become inoperable and the LEDs to flash repeatedly if the housing has been opened.

2.5.4.4. Fixed firmware: Cannot be reprogrammed, preventing attempts to alter the logic of the KVM.

2.5.4.5. Tamper-proof circuit board: Components are soldered in place to prevent their removal or alteration.

2.5.4.6. Safe buffer design: Does not incorporate a memory buffer, and the keyboard buffer is automatically cleared after data transmission, preventing transfer of keystrokes or other data when switching between computers.

2.5.4.7. Selective USB access: Recognizes only USB human interface devices (HIDs), such as keyboards and mice, to prevent inadvertent and insecure data transfer.

2.5.4.8. Push-button control: Requires physical access to the KVM when switching between connected computers.

2.6. Securing the Network Configuration

2.6.1. NETWORK ISOLATION

2.6.1.1. All networks should be monitored and audited to validate separation.

2.6.1.2. All management of the datacenter systems should be done on isolated networks. Strong authentication methods should be used on the management network to validate identity and authorize usage

2.6.1.3. Access to the storage controllers should also be granted over isolated network components that are non-routable to prevent the direct download of stored data and to restrict the likelihood of unauthorized access or accidental discovery.

2.6.1.4. Customer access should be provisioned on isolated networks. This isolation can be implemented through the use of physically separate networks or via VLANs.

2.6.1.5. TLS and IPSec can be used for securing communications in order to prevent eavesdropping.

2.6.1.6. DNS Security Extensions (DNSSEC) should be used to help prevent DNS cache poisoning.

2.6.2. PROTECTING VLANS

2.6.2.1. VLAN Communication

2.6.2.1.1. Broadcast packets sent by one of the workstations will reach all the others in the VLAN.

2.6.2.1.2. Broadcasts sent by one of the workstations in the VLAN will not reach any workstations that are not in the VLAN.

2.6.2.1.3. Broadcasts sent by workstations that are not in the VLAN will never reach workstations that are in the VLAN.

2.6.2.1.4. The workstations can all communicate with each other without needing to go through a gateway.
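The four properties above can be modeled with a toy switch that maps each host's access port to a VLAN ID. This Python sketch is hypothetical and deliberately simple: it ignores trunk ports, VLAN tagging, routing between VLANs, and attacks such as VLAN hopping.

```python
class Switch:
    """Toy layer-2 switch in which each host's access port belongs to one VLAN."""

    def __init__(self) -> None:
        self.port_vlan: dict[str, int] = {}

    def assign(self, host: str, vlan_id: int) -> None:
        self.port_vlan[host] = vlan_id

    def broadcast(self, sender: str) -> set[str]:
        """Hosts that receive a broadcast frame sent by `sender`."""
        vlan = self.port_vlan[sender]
        return {h for h, v in self.port_vlan.items() if v == vlan and h != sender}

    def same_l2_domain(self, a: str, b: str) -> bool:
        """True if a and b share a VLAN and can talk without a gateway."""
        return self.port_vlan[a] == self.port_vlan[b]


sw = Switch()
sw.assign("hr-1", 10)   # hypothetical hosts and VLAN IDs
sw.assign("hr-2", 10)
sw.assign("fin-1", 20)
print(sw.broadcast("hr-1"))                # {'hr-2'}
print(sw.same_l2_domain("hr-1", "fin-1"))  # False
```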

2.6.2.2. VLAN Advantages

2.6.2.2.1. The ability to isolate network traffic to certain machines or groups of machines via association with the VLAN allows for the opportunity to create secured pathing of data between endpoints

2.6.2.2.2. It is a building block that when combined with other protection mechanisms allows for data confidentiality to be achieved.

2.6.3. USING TRANSPORT LAYER SECURITY (TLS)

2.6.3.1. TLS is made up of two layers:

2.6.3.1.1. TLS record protocol: Provides connection security and ensures that the connection is private and reliable. It is used to encapsulate higher-level protocols, among them the TLS handshake protocol.

2.6.3.1.2. TLS handshake protocol: Allows the client and the server to authenticate each other and to negotiate an encryption algorithm and cryptographic keys before data is sent or received.
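From an application's point of view, both layers are usually driven by a TLS library. The Python sketch below uses the standard `ssl` module: the handshake protocol runs when the socket is wrapped, and the record protocol then protects the application data. The helper names are hypothetical, and setting a TLS 1.2 minimum is an assumed policy, not a requirement stated here.

```python
import socket
import ssl


def make_client_context() -> ssl.SSLContext:
    """Client context that verifies certificates and refuses old protocol versions."""
    # create_default_context() turns on certificate verification and hostname
    # checking against the platform's trusted CA store.
    ctx = ssl.create_default_context()
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2  # assumed policy: TLS 1.2+
    return ctx


def tls_session_info(host: str, port: int = 443) -> tuple:
    """Connect, complete the handshake, and report what was negotiated."""
    ctx = make_client_context()
    with socket.create_connection((host, port), timeout=5) as raw:
        # wrap_socket() runs the handshake (authentication plus negotiation of
        # the cipher suite and keys) before it returns.
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            # Data sent with tls.sendall() is now protected by the record layer.
            return tls.version(), tls.cipher()


# Usage (requires network access):
# print(tls_session_info("example.com"))
```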

2.6.4. USING DOMAIN NAME SYSTEM (DNS)

2.6.4.1. Domain Name System Security Extensions (DNSSEC)

2.6.4.1.1. DNSSEC provides origin authority, data integrity, and authenticated denial-of-existence.

2.6.4.1.2. Validation of DNS responses occurs through the use of digital signatures that are included with DNS responses

2.6.4.2. Threats to the DNS Infrastructure

2.6.4.2.1. Footprinting: The process by which DNS zone data, including DNS domain names, computer names, and Internet Protocol (IP) addresses for sensitive network resources, is obtained by an attacker.

2.6.4.2.2. Denial-of-service attack: When an attacker attempts to deny the availability of network services by flooding one or more DNS servers in the network with queries.

2.6.4.2.3. Data modification: An attempt by an attacker to spoof valid IP addresses in IP packets that the attacker has created. This gives these packets the appearance of coming from a valid IP address in the network. With a valid IP address the attacker can gain access to the network and destroy data or conduct other attacks.

2.6.4.2.4. Redirection: When an attacker can redirect queries for DNS names to servers that are under the control of the attacker.

2.6.4.2.5. Spoofing: When a DNS server accepts and uses incorrect information from a host that has no authority giving that information. DNS spoofing is in fact malicious cache poisoning where forged data is placed in the cache of the name servers.

2.6.4.2.6. Cache poisoning: Attackers exploit vulnerabilities or poor configuration choices in DNS servers, or weaknesses in the DNS protocol itself, to inject fraudulent addressing information into caches. Users who rely on a poisoned cache to visit the targeted site are instead sent to a server controlled by the attacker.

2.6.4.2.7. Typosquatting: The practice of registering a domain name that is confusingly similar to an existing popular brand, so that users who mistype the legitimate name land on a site the registrant controls.
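Defenders often screen newly registered or observed domains for names that sit within a small edit distance of their own brand. The Python sketch below uses Levenshtein distance; the brand, the candidate domains, and the distance threshold are hypothetical, and stripping only the last label is a simplification (a production check would use the Public Suffix List and also consider homoglyphs and added words).

```python
def edit_distance(a: str, b: str) -> int:
    """Levenshtein distance: minimum single-character insertions, deletions,
    or substitutions needed to turn a into b."""
    prev = list(range(len(b) + 1))
    for i, ca in enumerate(a, start=1):
        curr = [i]
        for j, cb in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,                # deletion
                            curr[j - 1] + 1,            # insertion
                            prev[j - 1] + (ca != cb)))  # substitution
        prev = curr
    return prev[-1]


def possible_typosquats(candidates: list[str], protected: list[str],
                        max_distance: int = 2) -> list[tuple[str, str, int]]:
    """Flag candidate domains close to, but not equal to, a protected name."""
    hits = []
    for domain in candidates:
        label = domain.lower().rsplit(".", 1)[0]  # drop the top-level domain
        for brand in protected:
            d = edit_distance(label, brand)
            if 0 < d <= max_distance:
                hits.append((domain, brand, d))
    return hits


# Hypothetical brand and observed domain names.
protected = ["examplebank"]
observed = ["examp1ebank.com", "exampelbank.net", "examplebank.com", "weather.org"]
for domain, brand, d in possible_typosquats(observed, protected):
    print(f"{domain} resembles {brand} (distance {d})")
```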

2.6.5. USING INTERNET PROTOCOL SECURITY (IPSEC)

2.6.5.1. Supports

2.6.5.1.1. network-level peer authentication

2.6.5.1.2. data origin authentication

2.6.5.1.3. data integrity

2.6.5.1.4. encryption

2.6.5.1.5. replay protection
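Replay protection in IPSec (for example, ESP as specified in RFC 4303) relies on a per-SA sequence number and a sliding receive window: packets newer than anything seen advance the window, packets inside it are accepted once, and packets that are duplicates or too old are dropped. The Python sketch below illustrates that check under simplifying assumptions: 32-bit sequence numbers only, no extended sequence numbers, and no integrity check (a real receiver updates the window only after the packet authenticates). The class name is hypothetical; the 64-packet default matches the window size RFC 4303 recommends.

```python
class ReplayWindow:
    """Simplified IPSec-style anti-replay sliding window."""

    def __init__(self, size: int = 64) -> None:
        self.size = size
        self.highest = 0  # highest sequence number accepted so far
        self.bitmap = 0   # bit i set => sequence number (highest - i) already seen

    def check_and_update(self, seq: int) -> bool:
        if seq == 0:
            return False  # sequence numbers start at 1
        if seq > self.highest:
            shift = seq - self.highest
            # Slide the window forward; bits older than the window fall off.
            self.bitmap = ((self.bitmap << shift) | 1) & ((1 << self.size) - 1)
            self.highest = seq
            return True
        offset = self.highest - seq
        if offset >= self.size:
            return False  # too old: left of the window
        if self.bitmap & (1 << offset):
            return False  # duplicate: replay detected
        self.bitmap |= 1 << offset
        return True


w = ReplayWindow(size=64)
results = [w.check_and_update(s) for s in [1, 3, 2, 2, 100, 30, 37]]
print(results)  # [True, True, True, False, True, False, True]
```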

2.6.5.2. Challenges

2.6.5.2.1. Configuration management

2.6.5.2.2. Performance

2.7. Identifying and Understanding Server Threats

2.7.1. Operating system bugs and misconfigurations

2.7.2. Threat actors

2.7.3. The following general guidelines should be addressed when identifying and understanding threats:

2.7.3.1. Use an asset management system that has configuration management capabilities to enable documentation of all system configuration items (CIs) authoritatively.

2.7.3.2. Use system baselines to enforce configuration management throughout the enterprise. In configuration management:

2.7.3.2.1. A “baseline” is an agreed-upon description of the attributes of a product, at a point in time that serves as a basis for defining change.

2.7.3.2.2. A “change” is a movement from this baseline state to a next state.

2.7.3.2.3. Consider automation technologies that will help with the creation, application, management, updating, tracking, and compliance checking for system baselines.

2.7.3.2.4. Develop and use a robust change management system to authorize the required changes that need to be made to systems over time.

2.7.3.2.5. The use of an exception reporting system to force the capture and documentation of any activities undertaken that are contrary to the “expected norm” with regard to the lifecycle of a system under management.

2.7.3.2.6. The use of vendor-specified configuration guidance and best practices as appropriate based on the specific platform(s) under management.
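Automated compliance checking against a baseline comes down to comparing the current configuration items with the approved ones and routing unexplained differences to exception reporting. The Python sketch below assumes configuration is available as flat key/value pairs and that approved changes are known by key; the function names, setting names, and values are hypothetical.

```python
def diff_against_baseline(baseline: dict[str, str],
                          current: dict[str, str]) -> dict[str, list]:
    """Compare current configuration items (CIs) with the approved baseline."""
    return {
        "added": sorted(k for k in current if k not in baseline),
        "removed": sorted(k for k in baseline if k not in current),
        "changed": sorted((k, baseline[k], current[k])
                          for k in baseline.keys() & current.keys()
                          if baseline[k] != current[k]),
    }


def unauthorized_changes(drift: dict[str, list], approved_keys: set[str]) -> list[str]:
    """Drift with no approved change record belongs in exception reporting."""
    keys = drift["added"] + drift["removed"] + [k for k, _, _ in drift["changed"]]
    return sorted(k for k in keys if k not in approved_keys)


# Hypothetical settings for a single server.
baseline = {"ssh.PermitRootLogin": "no", "ssh.PasswordAuthentication": "no",
            "ntp.server": "time1.internal"}
current = {"ssh.PermitRootLogin": "yes", "ssh.PasswordAuthentication": "no",
           "ntp.server": "time2.internal", "telnet.enabled": "true"}

drift = diff_against_baseline(baseline, current)
print(drift)
print(unauthorized_changes(drift, approved_keys={"ntp.server"}))
# ['ssh.PermitRootLogin', 'telnet.enabled']
```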

2.8. Using Stand-Alone Hosts

2.8.1. If the business seeks to:

2.8.1.1. Create isolated, secured, dedicated hosting of individual cloud resources: a stand-alone host would be an appropriate choice.

2.8.1.2. Make the cloud resources available to end users so they appear independent of any other resources and “isolated”: either a stand-alone host or a shared host configuration that offers multi-tenant secured hosting capabilities would be appropriate.

2.8.2. Stand-alone host availability considerations

2.8.2.1. Regulatory issues

2.8.2.2. Current security policies in force

2.8.2.3. Any contractual requirements that may be in force for one or more systems, or areas of the business

2.8.2.4. The needs of a certain application or business process that may be using the system in question

2.8.2.5. The classification of the data contained in the system

2.9. Using Clustered Hosts

2.9.1. RESOURCE SHARING

2.9.1.1. Reservations

2.9.1.2. Limits

2.9.1.3. Shares
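Reservations, limits, and shares interact roughly as follows: reservations are guaranteed first, the remaining capacity is divided in proportion to shares, and no VM receives more than its limit. The Python sketch below is a simplified model of those semantics (similar in spirit to the settings exposed by hypervisors such as VMware vSphere), not any vendor's actual scheduler; the VM names and MHz figures are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class VM:
    name: str
    reservation: float  # guaranteed minimum, e.g. MHz of CPU
    limit: float        # hard maximum (float("inf") for unlimited)
    shares: int         # relative priority when capacity is contended


def allocate(capacity: float, vms: list[VM]) -> dict[str, float]:
    """Grant reservations, then split the rest by shares, capping at limits."""
    reserved = sum(vm.reservation for vm in vms)
    if reserved > capacity:
        # A real platform's admission control would refuse to power these on.
        raise ValueError("reservations exceed capacity")
    alloc = {vm.name: vm.reservation for vm in vms}
    remaining = capacity - reserved
    active = [vm for vm in vms if alloc[vm.name] < vm.limit]
    while remaining > 1e-9 and active:
        total_shares = sum(vm.shares for vm in active)
        if total_shares == 0:
            break  # nobody left with a claim on spare capacity
        handed_out = 0.0
        still_active = []
        for vm in active:
            offer = remaining * vm.shares / total_shares
            grant = min(offer, vm.limit - alloc[vm.name])
            alloc[vm.name] += grant
            handed_out += grant
            if alloc[vm.name] < vm.limit - 1e-9:
                still_active.append(vm)  # can still absorb more capacity
        remaining -= handed_out
        active = still_active
    return alloc


vms = [  # hypothetical VMs on a host with 3000 MHz to share
    VM("app-a", reservation=500, limit=1000, shares=1000),
    VM("app-b", reservation=0, limit=float("inf"), shares=2000),
    VM("app-c", reservation=0, limit=float("inf"), shares=1000),
]
alloc = allocate(3000, vms)
for name, mhz in alloc.items():
    print(f"{name}: {mhz:.0f} MHz")
```

Here app-a is capped at its 1000 MHz limit, and the capacity it cannot use is redistributed to app-b and app-c in their 2:1 share ratio.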

2.9.2. DISTRIBUTED RESOURCE SCHEDULING (DRS)/COMPUTE RESOURCE SCHEDULING

2.9.2.1. Provide highly available resources to your workloads

2.9.2.2. Balance workloads for optimal performance

2.9.2.2.1. The initial workload placement across the cluster as a VM is powered on is the beginning point for all load-balancing operations.

2.9.2.2.2. Load balancing is achieved through a movement of the VM between hosts in the cluster in order to achieve/maintain the desired compute resource allocation thresholds specified for the DRS service.

2.9.2.3. Scale and manage computing resources without service disruption
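The initial placement and load-balancing steps can be illustrated with a greedy heuristic: place each powering-on VM on the least-loaded host, then, if the gap between the busiest and idlest hosts exceeds a threshold, recommend migrating the single VM that most narrows that gap. This Python sketch is a hypothetical simplification for illustration, not the algorithm any DRS implementation actually uses; host names, VM names, and load units are made up.

```python
def host_loads(placement: dict[str, str], demand: dict[str, float],
               hosts: list[str]) -> dict[str, float]:
    """Sum the demand of the VMs currently placed on each host."""
    loads = {h: 0.0 for h in hosts}
    for vm, host in placement.items():
        loads[host] += demand[vm]
    return loads


def place(vm: str, placement: dict[str, str], demand: dict[str, float],
          hosts: list[str]) -> str:
    """Initial placement: power the VM on at the least-loaded host."""
    loads = host_loads(placement, demand, hosts)
    target = min(hosts, key=lambda h: loads[h])
    placement[vm] = target
    return target


def recommend_migration(placement: dict[str, str], demand: dict[str, float],
                        hosts: list[str], threshold: float):
    """Suggest one migration (vm, from_host, to_host) from the busiest to the
    idlest host if their load gap exceeds `threshold` and the move narrows it."""
    loads = host_loads(placement, demand, hosts)
    busiest = max(hosts, key=lambda h: loads[h])
    idlest = min(hosts, key=lambda h: loads[h])
    gap = loads[busiest] - loads[idlest]
    if gap <= threshold:
        return None
    best_vm, best_gap = None, gap
    for vm, host in placement.items():
        if host == busiest:
            new_gap = abs((loads[busiest] - demand[vm]) - (loads[idlest] + demand[vm]))
            if new_gap < best_gap:
                best_vm, best_gap = vm, new_gap
    return None if best_vm is None else (best_vm, busiest, idlest)


# Hypothetical cluster; demand in arbitrary CPU units.
hosts = ["host-a", "host-b", "host-c"]
demand = {"vm1": 30, "vm2": 20, "vm3": 25, "vm4": 10, "vm5": 15}
placement: dict[str, str] = {}
for vm in demand:
    place(vm, placement, demand, hosts)

demand["vm4"] = 35  # a workload spike unbalances host-b
print(recommend_migration(placement, demand, hosts, threshold=15))
# ('vm2', 'host-b', 'host-a')
```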

2.10. Accounting for Dynamic Operation

2.10.1. In outsourced and public deployment models, cloud computing can also provide elasticity: the ability for customers to quickly request, receive, and later release as many resources as needed.

2.10.2. If an organization is large enough and supports a sufficient diversity of workloads, an on-site private cloud may be able to provide elasticity to clients within the consumer organization.

2.10.3. Smaller on-site private clouds will, however, exhibit maximum capacity limits similar to those of traditional datacenters.

2.11. Using Storage Clusters

2.11.1. CLUSTERED STORAGE ARCHITECTURES

2.11.1.1. A tightly coupled cluster has a physical backplane into which controller nodes connect. While this backplane fixes the maximum size of the cluster, it delivers a high-performance interconnect between servers for load-balanced performance and maximum scalability as the cluster grows.

2.11.1.2. A loosely coupled cluster offers cost-effective building blocks that can start small and grow as applications demand. A loose cluster offers performance, I/O, and storage capacity within the same node. As a result, performance scales with capacity and vice versa.

2.11.2. STORAGE CLUSTER GOALS

2.11.2.1. Meet the required service levels as specified in the SLA

2.11.2.2. Provide for the ability to separate customer data in multi-tenant hosting environments

2.11.2.3. Securely store and protect data through the use of confidentiality, integrity, and availability mechanisms such as encryption, hashing, masking, and multi-pathing

2.12. Using Maintenance Mode

2.12.1. Maintenance mode can apply to both data stores as well as hosts

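2.12.2. Maintenance mode is tied to the SLA, because taking a host or data store out of service must fit within the availability commitments the SLA defines.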

2.12.3. Enter maintenance mode, operate within it, and exit it successfully using the vendor-specific guidance and best practices.

2.13. Providing High Availability on the Cloud

2.13.1. MEASURING SYSTEM AVAILABILITY
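Availability is commonly measured as the percentage of a period during which a service is up, and SLA targets are often quoted in "nines". The Python sketch below converts a target into the downtime it allows and computes delivered availability from measured figures; it assumes a 365-day year and ignores the maintenance-window exclusions and measurement rules that real SLAs define.

```python
MINUTES_PER_YEAR = 365 * 24 * 60  # assumption: ignores leap years


def allowed_downtime_minutes(availability_pct: float,
                             period_minutes: float = MINUTES_PER_YEAR) -> float:
    """Maximum downtime permitted in a period for a given availability target."""
    return period_minutes * (1 - availability_pct / 100)


def measured_availability_pct(uptime_minutes: float, downtime_minutes: float) -> float:
    """Availability actually delivered over a measurement window."""
    return 100 * uptime_minutes / (uptime_minutes + downtime_minutes)


for target in (99.0, 99.9, 99.99, 99.999):
    print(f"{target}% -> {allowed_downtime_minutes(target):.1f} min/year")
```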

2.13.2. HIGH AVAILABILITY APPROACHES

2.13.2.1. The use of redundant architectural elements to safeguard data in case of failure, such as a drive mirroring solution.

2.13.2.2. The use of multiple vendors within the cloud architecture to provide the same services. This allows systems that need a specified level of availability to switch, or fail over, to an alternate provider’s system within the time period defined in the SLA that governs the system’s availability window.

2.14. The Physical Infrastructure for Cloud Environments

2.14.1. An infrastructure built for cloud computing provides numerous benefits

2.14.1.1. Flexible and efficient utilization of infrastructure investments

2.14.1.2. Faster deployment of physical and virtual resources

2.14.1.3. Higher application service levels

2.14.1.4. Less administrative overhead

2.14.1.5. Lower infrastructure, energy, and facility costs

2.14.1.6. Increased security

2.14.2. Servers

2.14.3. Virtualization

2.14.4. Storage

2.14.5. Network

2.14.6. Management

2.14.7. Security

2.14.8. Backup and recovery

2.14.9. Infrastructure systems

2.15. Configuring Access Control for Remote Access

2.15.1. Some of the threats with regard to remote access are as follows

2.15.1.1. Lack of physical security controls

2.15.1.2. Unsecured networks

2.15.1.3. Infected endpoints accessing the internal network

2.15.1.4. External access to internal resources

2.15.2. Controlling remote access

2.15.2.1. Tunneling via a VPN (IPsec or SSL/TLS)

2.15.2.2. Remote Desktop Protocol (RDP) allows for desktop access to remote systems

2.15.2.3. Access via a secure terminal

2.15.2.4. Deployment of a DMZ

2.15.3. Cloud environment access requirements

2.15.3.1. Encrypted transmission of all communications between the remote user and the host

2.15.3.2. Secure login with complex passwords and/or certificate-based login

2.15.3.3. Two-factor authentication providing enhanced security

2.15.3.4. A log and audit of all connections

2.15.3.5. A secure baseline should be established, and all deployments and updates should be made from a change- and version-controlled master image.

2.15.3.6. Sufficient supporting infrastructure and tools should be in place to allow for the patching and maintenance of relevant infrastructure without any impact on the end user/customer.
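Two of these requirements map directly onto TLS settings: encrypted transmission and certificate-based login. The sketch below uses Python's standard `ssl` module to build a server-side context for a remote-access gateway. The context refuses protocol versions older than TLS 1.2 and requires every client to present a certificate that a trusted CA has signed. The file paths are placeholders. Two-factor authentication, connection logging, and the master-image baseline are assumed to be handled by other components.

```python
import ssl
from typing import Optional


def remote_access_context(
    cert_file: Optional[str] = None,
    key_file: Optional[str] = None,
    client_ca_file: Optional[str] = None,
) -> ssl.SSLContext:
    """Build a TLS server context for a remote-access gateway (illustrative settings)."""
    # Server-side context with the standard library's secure defaults.
    ctx = ssl.create_default_context(ssl.Purpose.CLIENT_AUTH)
    # Encrypted transmission: refuse anything older than TLS 1.2.
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Certificate-based login: every client must present a valid certificate.
    ctx.verify_mode = ssl.CERT_REQUIRED
    if cert_file and key_file:
        # The gateway's own certificate and private key (placeholder paths).
        ctx.load_cert_chain(certfile=cert_file, keyfile=key_file)
    if client_ca_file:
        # CA bundle used to validate client certificates (placeholder path).
        ctx.load_verify_locations(cafile=client_ca_file)
    return ctx
```

In use, the gateway would wrap each accepted socket with `ctx.wrap_socket(sock, server_side=True)`. A handshake from a client that lacks an acceptable certificate then fails before any application data is exchanged.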

2.16. Performing Patch Management

2.16.1. THE PATCH MANAGEMENT PROCESS

2.16.1.1. Vulnerability detection and evaluation by the vendor

2.16.1.2. Subscription mechanism to vendor patch notifications

2.16.1.3. Severity assessment of the patch by the receiving enterprise using that software

2.16.1.4. Applicability assessment of the patch on target systems

2.16.1.5. Opening of tracking records in case of patch applicability

2.16.1.6. Customer notification of applicable patches, if required

2.16.1.7. Change management

2.16.1.8. Successful patch application verification

2.16.1.9. Issue and risk management in case of unexpected troubles or conflicting actions

2.16.1.10. Closure of tracking records with all auditable artifacts
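The enterprise-side part of this process (steps 3 to 10) behaves like an ordered workflow with an audit trail. The Python sketch below models a tracking record that enforces the step order and treats customer notification as optional. It also refuses closure while issues remain open, and it keeps timestamped entries as auditable artifacts. The step names and the `TrackingRecord` class are hypothetical. The two vendor-side steps are deliberately left out.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import List, Tuple

# Enterprise-side steps of the process above, in order. Names are illustrative.
STEPS = [
    "severity_assessed",
    "applicability_assessed",
    "record_opened",
    "customer_notified",  # optional: only when customer notification is required
    "change_approved",
    "patch_verified",
    "record_closed",
]
OPTIONAL_STEPS = {"customer_notified"}


@dataclass
class TrackingRecord:
    patch_id: str
    # Audit trail of (step, UTC timestamp, note) entries kept for closure.
    history: List[Tuple[str, datetime, str]] = field(default_factory=list)
    open_issues: List[str] = field(default_factory=list)

    def completed(self) -> List[str]:
        return [step for step, _, _ in self.history]

    def advance(self, step: str, note: str = "") -> None:
        """Record a step, enforcing order and blocking closure while issues are open."""
        if step not in STEPS:
            raise ValueError(f"unknown step: {step}")
        done = self.completed()
        if step in done:
            raise ValueError(f"step already recorded: {step}")
        index = STEPS.index(step)
        if any(later in done for later in STEPS[index + 1:]):
            raise ValueError(f"{step} recorded after a later step")
        for earlier in STEPS[:index]:
            if earlier not in done and earlier not in OPTIONAL_STEPS:
                raise ValueError(f"{step} attempted before {earlier}")
        if step == "record_closed" and self.open_issues:
            raise ValueError("cannot close a record with unresolved issues")
        self.history.append((step, datetime.now(timezone.utc), note))

    def raise_issue(self, description: str) -> None:
        """Issue and risk management: track unexpected troubles or conflicting actions."""
        self.open_issues.append(description)

    def resolve_issue(self, description: str) -> None:
        self.open_issues.remove(description)
```

Blocking closure on open issues ties step 9 to step 10. A record cannot be closed with complete-looking artifacts while a conflict is still unresolved.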

2.16.2. EXAMPLES OF AUTOMATION

2.16.2.1. Notification Automation

2.16.2.1.1. Vulnerability severity is assessed

2.16.2.1.2. A security patch or an interim solution is provided

2.16.2.1.3. This information is entered into a system

2.16.2.1.4. Automated e-mail notifications are sent to predefined accounts in a straightforward process

2.16.2.2. Security patch applicability

2.16.2.3. The creation of tracking records and their assignment to predefined resolver groups, in case of matching.

2.16.2.4. Change record creation, change approval, and change implementation (if agreed-upon maintenance windows have been established and are being managed via SLAs).

2.16.2.5. Verification of the successful implementation of security patches.

2.16.2.6. Creation of documentation to support that patching has been successfully accomplished.
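The applicability check, tracking-record creation, and e-mail notification described above can be automated together. The Python sketch below makes several assumptions, all hypothetical: the host inventory format, the resolver groups, and the mailbox addresses. It matches a patch against installed versions, opens one tracking record per resolver group that owns an affected host, and composes the notification with the standard library's `email.message.EmailMessage`. The code does not send the message.

```python
from collections import defaultdict
from dataclasses import dataclass
from email.message import EmailMessage
from typing import Dict, FrozenSet, List


@dataclass
class Patch:
    patch_id: str
    product: str
    affected_versions: FrozenSet[str]
    severity: str


@dataclass
class Host:
    name: str
    installed: Dict[str, str]  # product name -> installed version
    resolver_group: str        # team that owns the host (hypothetical)


# Hypothetical mapping of resolver groups to predefined notification accounts.
GROUP_MAILBOXES = {"linux-ops": "linux-ops@example.com", "db-team": "dba@example.com"}


def applicable_hosts(patch: Patch, hosts: List[Host]) -> List[Host]:
    """Applicability check: the host runs an affected version of the patched product."""
    return [h for h in hosts if h.installed.get(patch.product) in patch.affected_versions]


def create_tracking_records(patch: Patch, hosts: List[Host]) -> List[dict]:
    """Open one tracking record per resolver group that owns at least one matching host."""
    by_group: Dict[str, List[str]] = defaultdict(list)
    for host in applicable_hosts(patch, hosts):
        by_group[host.resolver_group].append(host.name)
    return [
        {"patch": patch.patch_id, "group": group, "hosts": sorted(names)}
        for group, names in sorted(by_group.items())
    ]


def build_notification(record: dict, severity: str, sender: str = "patch-bot@example.com") -> EmailMessage:
    """Compose (but do not send) the e-mail for one tracking record."""
    msg = EmailMessage()
    msg["From"] = sender
    msg["To"] = GROUP_MAILBOXES[record["group"]]
    msg["Subject"] = f"[{severity.upper()}] {record['patch']} applies to {len(record['hosts'])} host(s)"
    msg.set_content("Affected hosts:\n" + "\n".join(record["hosts"]) + "\n")
    return msg
```

Delivery could then use the standard library's `smtplib.SMTP(host).send_message(msg)` against the organization's mail relay. Each record also maps naturally onto the change record that the next automation step creates.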

2.16.3. CHALLENGES OF PATCH MANAGEMENT

2.16.3.1. The lack of service standardization. For enterprises transitioning to the cloud, lack of standardization is the main issue. For example, a patch management solution tailored to one customer often cannot be used or easily adopted by another customer.

2.16.3.2. Patch management is not simply using a patch tool to apply patches to endpoint systems, but rather, a collaboration of multiple management tools and teams, for example, change management and patch advisory tools.

2.16.3.3. In a large enterprise environment, patch tools need to be able to interact with a large number of managed entities in a scalable way and handle the heterogeneity that is unavoidable in such environments.

2.16.3.4. To avoid problems associated with automatically applying patches to endpoints, thorough testing of patches beforehand is absolutely mandatory.

2.16.3.5. Multiple Time Zones

2.16.3.5.1. In a cloud environment, virtual machines that are physically located in the same time zone can be configured to operate in different time zones. When a customer’s VMs span multiple time zones, patches need to be scheduled carefully so the correct behavior is implemented.

2.16.3.5.2. For some patches, the correct behavior is to apply them at the same local time on each virtual machine.

2.16.3.5.3. For other patches, the correct behavior is to apply them at the same absolute time. This avoids the mixed-mode problem, in which multiple versions of the software run concurrently and can corrupt data.

2.16.3.6. VM Suspension and Snapshot

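2.16.3.6.1. Additional modes of operation are available to system administrators and users, such as suspending and resuming a VM, taking a snapshot, and reverting to a snapshot. The management console that provides these operations needs to be tightly integrated with the patch management and compliance processes. A VM that is resumed or reverted to an earlier snapshot can otherwise come back online without patches that were applied while it was offline.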
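The two scheduling behaviors for VMs spread across time zones can be made concrete with the standard `zoneinfo` module. This requires Python 3.9 or later, plus system time zone data or the `tzdata` package. In the sketch below, the VM names and zones are hypothetical. A same-local-time plan yields different UTC instants, while a same-absolute-time plan yields one instant that each VM sees as a different local time.

```python
from datetime import date, datetime, time, timezone
from typing import Dict
from zoneinfo import ZoneInfo  # Python 3.9+; needs system tz data or the tzdata package


def same_local_time(vm_zones: Dict[str, str], day: date, local: time) -> Dict[str, datetime]:
    """Patch each VM at the same wall-clock time in its own zone; results are in UTC."""
    return {
        vm: datetime.combine(day, local, tzinfo=ZoneInfo(tz)).astimezone(timezone.utc)
        for vm, tz in vm_zones.items()
    }


def same_absolute_time(vm_zones: Dict[str, str], instant: datetime) -> Dict[str, datetime]:
    """Patch every VM at one instant; results show the local time each VM will see."""
    if instant.tzinfo is None:
        raise ValueError("instant must be timezone-aware")
    return {vm: instant.astimezone(ZoneInfo(tz)) for vm, tz in vm_zones.items()}
```

In this example, a 02:00 local maintenance window on 15 January 2024 lands at 07:00 UTC for a VM set to `America/New_York`. For a VM set to `Asia/Tokyo`, it lands at 17:00 UTC the previous day. That gap is exactly the period during which the mixed-mode problem can occur.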

2.17. Performance Monitoring

2.17.1. OUTSOURCING MONITORING

2.17.1.1. Having HR check references

2.17.1.2. Examining the terms of any SLA or contract being used to govern service terms

2.17.1.3. Executing some form of trial of the managed service in question before implementing into production

2.17.2. HARDWARE MONITORING

2.17.2.1. Extend monitoring of the four key subsystems

2.17.2.1.1. Network: Excessive dropped packets

2.17.2.1.2. Disk: Full disk or slow reads and writes to the disks (IOPS)

2.17.2.1.3. Memory: Excessive memory usage or full utilization of available memory allocation

2.17.2.1.4. CPU: Excessive CPU utilization

2.17.2.2. Additional items that exist in the physical plane of these systems, such as CPU temperature, fan speed, and ambient temperature within the datacenter hosting the physical hosts.
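Monitoring the four subsystems and the physical-plane readings comes down to comparing samples against thresholds. Some metrics alert when a reading is too high, such as CPU use. Others alert when a reading is too low, such as fan speed. The sketch below uses illustrative threshold values and metric names, not vendor guidance. Real limits should come from the hardware vendor's monitoring documentation.

```python
from typing import Dict, List, Tuple

# Illustrative thresholds only; real values should come from vendor guidance.
# Each entry is (limit, direction): "max" alerts above the limit, "min" below it.
THRESHOLDS: Dict[str, Tuple[float, str]] = {
    "network.dropped_packets_pct": (1.0, "max"),
    "disk.used_pct": (90.0, "max"),
    "disk.latency_ms": (20.0, "max"),  # slow reads and writes
    "memory.used_pct": (95.0, "max"),
    "cpu.used_pct": (90.0, "max"),
    "cpu.temp_c": (85.0, "max"),       # physical-plane readings from here down
    "fan.rpm": (1000.0, "min"),
    "ambient.temp_c": (27.0, "max"),
}


def evaluate(sample: Dict[str, float]) -> List[str]:
    """Return alert messages for every metric in the sample that breaches its threshold."""
    alerts = []
    for metric, value in sorted(sample.items()):
        if metric not in THRESHOLDS:
            continue  # metric is not monitored
        limit, direction = THRESHOLDS[metric]
        breached = value > limit if direction == "max" else value < limit
        if breached:
            alerts.append(f"{metric}={value} breaches {direction} limit {limit}")
    return alerts
```

A reading exactly at the limit does not alert in this sketch. Whether the boundary should count as a breach is a policy choice for the monitoring design.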

2.17.3. REDUNDANT SYSTEM ARCHITECTURE

2.17.3.1. Allow additional hardware items to be incorporated directly into the system in either of two modes

2.17.3.1.1. As an online, real-time component that shares the load of the running system

2.17.3.1.2. In a hot standby mode that allows a controlled failover to minimize downtime

2.17.4. MONITORING FUNCTIONS

2.17.4.1. Use any vendor-supplied monitoring capabilities to their fullest extent to maximize system reliability and performance.

2.17.4.2. Monitoring hardware may provide early indications of hardware failure and should be treated as a requirement to ensure stability and availability of all systems being managed.

2.17.4.3. Some virtualization platforms offer the capability to disable hardware and migrate live data from the failing hardware if certain thresholds are met.

2.18. Backing Up and Restoring the Host Configuration: Key Challenges

2.18.1. Control: The ability to decide, with high confidence, who and what is allowed to access consumer data and programs and the ability to perform actions (such as erasing data or disconnecting a network) with high confidence both that the actions have been taken and that no additional actions were taken that would subvert the consumer’s intent

2.18.2. Visibility: The ability to monitor, with high confidence, the status of a consumer’s data and programs and how consumer data and programs are being accessed by others.

2.19. Implementing Network Security Controls: Defense in Depth

2.19.1. FIREWALLS

2.19.1.1. Host-Based Software Firewalls

2.19.1.2. Configuration of Ports Through the Firewall
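Port configuration through a firewall is usually expressed as a default-deny allowlist, where traffic passes only if an explicit rule matches it. The sketch below shows that evaluation order using the standard `ipaddress` module. The rule set is hypothetical: HTTPS is open to any source, and SSH is limited to an internal admin range. Real firewalls also track connection state, which this sketch ignores.

```python
from dataclasses import dataclass
from ipaddress import ip_address, ip_network
from typing import Sequence


@dataclass(frozen=True)
class Rule:
    protocol: str  # "tcp" or "udp"
    port: int
    source: str    # CIDR block allowed to reach this port


# Hypothetical rule set: HTTPS from anywhere, SSH only from an internal admin range.
RULES = (
    Rule("tcp", 443, "0.0.0.0/0"),
    Rule("tcp", 22, "10.0.0.0/8"),
)


def is_allowed(protocol: str, port: int, source_ip: str, rules: Sequence[Rule] = RULES) -> bool:
    """Default deny: traffic passes only if an explicit rule matches it."""
    addr = ip_address(source_ip)
    for rule in rules:
        if rule.protocol == protocol and rule.port == port and addr in ip_network(rule.source):
            return True
    return False
```

Because the default result is `False`, forgetting a rule closes a port rather than opening it. This is the safer failure mode for a host-based firewall.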

2.19.2. LAYERED SECURITY

2.19.2.1. Intrusion Detection System

2.19.2.1.1. Network Intrusion Detection Systems (NIDSs)

2.19.2.1.2. Host Intrusion Detection Systems (HIDSs)

2.19.2.2. Intrusion Prevention System

2.19.2.2.1. An IPS can reconfigure other security controls, such as a firewall or router, to block an attack; some IPS devices can even apply patches if the host has particular vulnerabilities.

2.19.2.2.2. Some IPS devices can remove the malicious content of an attack, for example by deleting an infected attachment from an e-mail before forwarding the e-mail to the user.

2.19.2.3. Combined IDS and IPS (IDPS)

2.19.3. UTILIZING HONEYPOTS

2.19.4. CONDUCTING VULNERABILITY ASSESSMENTS

2.19.4.1. Conduct external vulnerability assessments to validate any internal assessments.

2.19.5. LOG CAPTURE AND LOG MANAGEMENT

2.19.5.1. Log data should be

2.19.5.1.1. Protected, with consideration given to storing log data externally

2.19.5.1.2. Part of the backup and disaster recovery plans of the organization

2.19.5.2. NIST SP 800-92 recommendations

2.19.5.2.1. Develop standard processes for performing log management.

2.19.5.2.2. Define the organization’s logging requirements and goals as part of the planning process.

2.19.5.2.3. Develop policies that clearly define mandatory requirements and suggested recommendations for log management activities, including log generation, transmission, storage, analysis, and disposal.

2.19.5.2.4. Ensure that related policies and procedures incorporate and support the log management requirements and recommendations.

2.19.5.3. Organizations should prioritize log management appropriately throughout the organization. After an organization defines its requirements and goals for the log management process, it should prioritize the requirements and goals based on the perceived reduction of risk and the expected time and resources needed to perform log management functions.

2.19.5.4. Organizations should create and maintain a log management infrastructure. A log management infrastructure consists of the hardware, software, networks, and media used to generate, transmit, store, analyze, and dispose of log data. Log management infrastructures typically perform several functions that support the analysis and security of log data.

2.19.5.4.1. Major factors to consider in the design

2.19.5.5. Organizations should establish standard log management operational processes. The major log management operational processes typically include configuring log sources, performing log analysis, initiating responses to identified events, and managing long-term storage. Administrators have other responsibilities as well, such as the following:

2.19.5.5.1. Monitoring the logging status of all log sources

2.19.5.5.2. Monitoring log rotation and archival processes

2.19.5.5.3. Checking for upgrades and patches to logging software and acquiring, testing, and deploying them

2.19.5.5.4. Ensuring that each logging host’s clock is synchronized to a common time source

2.19.5.5.5. Reconfiguring logging as needed based on policy changes, technology changes, and other factors

2.19.5.5.6. Documenting and reporting anomalies in log settings, configurations, and processes
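Two of these administrative duties lend themselves to simple automated checks. The first is monitoring the logging status of every source. The second is confirming that logging hosts agree with the common time source. The sketch below flags sources that have gone quiet and hosts whose clocks have drifted. The 15-minute gap and 2-second tolerance are hypothetical defaults. Synchronization itself would normally be handled by NTP, and this check only detects drift.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List


def silent_sources(
    last_seen: Dict[str, datetime],
    now: datetime,
    max_gap: timedelta = timedelta(minutes=15),
) -> List[str]:
    """Log sources that have not delivered an event within max_gap."""
    return sorted(src for src, ts in last_seen.items() if now - ts > max_gap)


def skewed_clocks(
    host_clocks: Dict[str, datetime],
    reference: datetime,
    tolerance: timedelta = timedelta(seconds=2),
) -> List[str]:
    """Logging hosts whose clocks differ from the common time source by more than tolerance."""
    return sorted(host for host, clock in host_clocks.items() if abs(clock - reference) > tolerance)


if __name__ == "__main__":
    now = datetime.now(timezone.utc)
    print(silent_sources({"fw-1": now - timedelta(hours=1)}, now))
```

A silent source may mean that logging was disabled or that rotation failed. Either case belongs in the anomaly reporting described in the last item.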

2.19.6. USING SECURITY INFORMATION AND EVENT MANAGEMENT (SIEM)

2.19.6.1. A locally hosted SIEM system offers easy access and lower risk of external disclosure

2.19.6.2. An external SIEM system may prevent an attacker from tampering with log data

2.19.6.3. Sample Controls and Effective Mapping to an SIEM Solution https://www.cisecurity.org/critical-controls/download.cfm

2.19.6.3.1. Critical Control 1: Inventory of Authorized and Unauthorized Devices

2.19.6.3.2. Critical Control 2: Inventory of Authorized and Unauthorized Software

2.19.6.3.3. Critical Control 3: Secure Configurations for Hardware and Software on Laptops, Workstations, and Servers

2.19.6.3.4. Critical Control 10: Secure Configurations for Network Devices such as Firewalls, Routers, and Switches

2.19.6.3.5. Critical Control 12: Controlled Use of Administrative Privileges

2.19.6.3.6. Critical Control 13: Boundary Defense
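Mapping a control to a SIEM usually means writing a correlation rule over normalized events. The sketch below shows two such rules. The first, for Critical Control 1, reports devices seen in DHCP lease events that are missing from the authorized inventory. The second, for Critical Control 12, reports administrative logins by accounts outside the approved list. The event format, with `type`, `mac`, and `user` fields, is a hypothetical normalization and not any particular SIEM's schema.

```python
from typing import Dict, List, Set


def unauthorized_devices(events: List[Dict[str, str]], authorized_macs: Set[str]) -> List[str]:
    """Critical Control 1: devices seen on the network that are not in the inventory."""
    seen = {e["mac"].lower() for e in events if e.get("type") == "dhcp_lease"}
    return sorted(seen - {mac.lower() for mac in authorized_macs})


def unapproved_admin_use(events: List[Dict[str, str]], approved_admins: Set[str]) -> List[str]:
    """Critical Control 12: administrative logins by accounts not on the approved list."""
    users = {e["user"] for e in events if e.get("type") == "admin_login"}
    return sorted(users - set(approved_admins))
```

MAC addresses are lower-cased before comparison, because different log sources often report them in different cases.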

2.20. Developing a Management Plan

2.20.1. MAINTENANCE

2.20.1.1. Schedule system repair and maintenance

2.20.1.2. Schedule customer notifications

2.20.1.3. Ensure adequate resources are available to meet expected demand and service level agreement requirements

2.20.1.4. Ensure that appropriate change-management procedures are implemented and followed

2.20.1.5. Ensure all appropriate security protections and safeguards continue to apply to all hosts while in maintenance mode and to all virtual machines while they are being moved and managed on alternate hosts as a result of maintenance mode activities being performed on their primary host.

2.20.2. ORCHESTRATION

2.21. Building a Logical Infrastructure for Cloud Environments

2.21.1. LOGICAL DESIGN

2.21.1.1. Lacks specific details such as technologies and standards while focusing on the needs at a general level

2.21.1.2. Communicates with abstract concepts, such as a network, router, or workstation, without specifying concrete details

2.21.2. PHYSICAL DESIGN

2.21.2.1. Is created from a logical network design

2.21.2.2. Will often expand elements found in a logical design

2.21.3. SECURE CONFIGURATION OF HARDWARE-SPECIFIC REQUIREMENTS

2.21.3.1. Storage Controllers Configuration

2.21.3.1.1. Turn off all unnecessary services, such as web interfaces and management services that will not be needed or used.

2.21.3.1.2. Validate that the controllers can meet the estimated traffic load based on vendor specifications and testing (1 Gb/s | 10 Gb/s | 16 Gb/s | 40 Gb/s).

2.21.3.1.3. Deploy a redundant failover configuration such as a NIC team.

2.21.3.1.4. Deploy a multipath solution.

2.21.3.1.5. Change default administrative passwords for configuration and management access to the controller.
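The storage controller items above can be expressed as a configuration audit. The sketch below checks a hypothetical description of one controller for four conditions: unnecessary services, insufficient link capacity for the estimated load, a NIC team without a redundant link, and fewer than two storage paths. It also flags a default administrative password, which it represents as a boolean so that no credential appears in the data. The `ControllerConfig` fields are assumptions, not any vendor's configuration schema.

```python
from dataclasses import dataclass
from typing import List, Set


@dataclass
class ControllerConfig:
    # Hypothetical description of one storage controller's settings.
    enabled_services: Set[str]
    required_services: Set[str]
    link_speed_gbps: float          # per-link speed, e.g. 10 or 40
    active_links: int               # links in the NIC team
    storage_paths: int              # independent paths to storage (multipathing)
    admin_password_is_default: bool
    estimated_peak_gbps: float = 0.0


def audit(cfg: ControllerConfig) -> List[str]:
    """Return findings for each hardening item the configuration fails."""
    findings = []
    for svc in sorted(cfg.enabled_services - cfg.required_services):
        findings.append(f"unnecessary service enabled: {svc}")
    capacity = cfg.link_speed_gbps * cfg.active_links
    if capacity < cfg.estimated_peak_gbps:
        findings.append(
            f"insufficient bandwidth: estimated {cfg.estimated_peak_gbps} Gb/s exceeds {capacity} Gb/s"
        )
    if cfg.active_links < 2:
        findings.append("no redundant failover: NIC team needs at least two links")
    if cfg.storage_paths < 2:
        findings.append("no multipath: fewer than two storage paths")
    if cfg.admin_password_is_default:
        findings.append("default administrative password still in use")
    return findings
```

The capacity check compares total team bandwidth with the estimate. A stricter variant could repeat the check with one link removed, to confirm that the load still fits after a single link failure.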

2.21.3.2. Networking Models

2.21.3.2.1. Traditional Networking Model