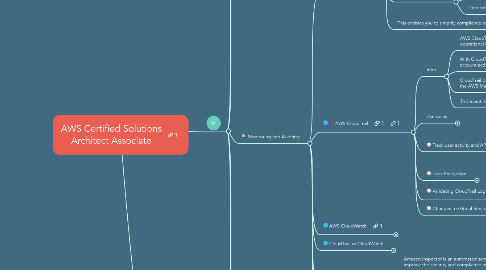

1. All

1.1. Global Infrastructure

1.1.1. Region

1.1.1.1. A Region is a geographical area. Each AWS Region has multiple, isolated locations known as Availability Zones.

1.1.1.2. More Regions →

1.1.1.2.1. Better Disaster Recovery

1.1.1.2.2. Availability

1.1.1.2.3. Application Responsiveness

1.1.2. Availability Zones (AZ)

1.1.2.1. An AZ consists of one or more discrete data centers.

1.1.2.2. More AZs → More Fault Tolerance

1.1.3. Edge Locations

1.1.3.1. Edge Locations are AWS endpoints used for caching content.

1.1.3.2. There are many more Edge Locations than Regions.

1.1.3.3. At the time of writing, there were over 96 Edge Locations.

1.1.3.4. Edge Locations are typically used by:

1.1.3.4.1. CloudFront

1.1.3.4.2. Content Delivery Network (CDN)

1.1.4. My Exam Tips

1.1.4.1. Regions & AZ Codes Format

1.1.4.2. Disaster recovery always involves ensuring that resources are created in another Region, not just in another Availability Zone.
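
The "Regions & AZ Codes Format" tip can be illustrated with a small sketch. It assumes the common naming pattern in which a Region code looks like `us-east-1` and an Availability Zone name is that Region code plus a single trailing letter (e.g. `us-east-1a`). The function name `region_of_az` is hypothetical, and names outside this pattern (such as Local Zones) simply raise an error.

```python
import re

# Assumed pattern: <area>[-gov]-<direction>-<number> for the Region code,
# followed by exactly one letter for the Availability Zone (e.g. us-east-1a).
AZ_PATTERN = re.compile(r"(?P<region>[a-z]{2}(?:-gov)?-[a-z]+-\d+)(?P<zone>[a-z])")


def region_of_az(az_name: str) -> str:
    """Return the Region code embedded in an Availability Zone name."""
    match = AZ_PATTERN.fullmatch(az_name)
    if match is None:
        raise ValueError(f"unrecognised AZ name: {az_name!r}")
    return match.group("region")


if __name__ == "__main__":
    for az in ["us-east-1a", "eu-west-1c", "ap-southeast-2b"]:
        print(az, "->", region_of_az(az))
```

In the spirit of the disaster-recovery tip, a primary and a standby resource are in different Regions only if `region_of_az(...)` differs for their AZs; two AZs of the same Region (`us-east-1a`, `us-east-1b`) would not qualify.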

1.2. Identity and Access Management

1.2.1. IAM

1.2.1.1. Intro

1.2.1.1.1. AWS Identity and Access Management (IAM) enables you to manage access to AWS services and resources securely.

1.2.1.1.2. Using IAM, you can create and manage AWS users and groups, and use permissions to allow and deny their access to AWS resources.

1.2.1.2. Key Terminology

1.2.1.2.1. Root Account

1.2.1.2.2. Users

1.2.1.2.3. Groups

1.2.1.2.4. Roles

1.2.1.2.5. Policies

1.2.1.3. Features

1.2.1.3.1. Centralised control of your AWS account

1.2.1.3.2. Shared Access to your AWS account

1.2.1.3.3. Granular Permissions

1.2.1.3.4. Multifactor Authentication

1.2.1.3.5. Identity Federation (including Active Directory, Facebook, LinkedIn, etc.)

1.2.1.3.6. Provide temporary access for users/devices and services where necessary

1.2.1.3.7. Allows you to set up your own password rotation policy

1.2.1.3.8. Integrates with many different AWS services

1.2.1.3.9. Supports PCI DSS Compliance

1.2.1.4. Delegation

1.2.1.4.1. You can use roles to delegate access to users, applications, or services that don't normally have access to your AWS resources.

1.2.1.4.2. Using long-term IAM user credentials (access keys) in production applications is not a best practice; use IAM Roles instead.

1.2.1.5. Cross-account

1.2.1.5.1. Cross-account IAM roles allow customers to securely grant access to AWS resources in their account to a third party, like an APN Partner, while retaining the ability to control and audit who is accessing their AWS account.

1.2.1.5.2. Cross-account roles reduce the amount of sensitive information APN Partners need to store for their customers, so that they can focus on their product instead of managing keys.

1.2.1.5.3. A cross-account role is the right tool to comply with best practices and simplify credential management, because it removes the need to manage credentials for third parties.
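
A cross-account role is defined by a trust policy that names the third party's account as the principal allowed to call `sts:AssumeRole`. The sketch below builds such a policy document as a Python dict. The account ID and external ID are placeholder values, the helper name is hypothetical, and the `sts:ExternalId` condition is the pattern AWS recommends for third-party access rather than something these notes specify. The permissions the role grants would be attached separately as a permissions policy.

```python
import json

# Placeholder values: the partner's AWS account ID and the external ID
# agreed with that partner.
PARTNER_ACCOUNT_ID = "111122223333"
EXTERNAL_ID = "example-external-id"


def cross_account_trust_policy(partner_account_id: str, external_id: str) -> dict:
    """Build a trust policy that lets another account assume this role."""
    return {
        "Version": "2012-10-17",
        "Statement": [
            {
                "Effect": "Allow",
                # Trust the partner account; the partner then decides which
                # of its own identities may assume the role.
                "Principal": {"AWS": f"arn:aws:iam::{partner_account_id}:root"},
                "Action": "sts:AssumeRole",
                # Require the agreed external ID when the role is assumed.
                "Condition": {"StringEquals": {"sts:ExternalId": external_id}},
            }
        ],
    }


if __name__ == "__main__":
    policy = cross_account_trust_policy(PARTNER_ACCOUNT_ID, EXTERNAL_ID)
    print(json.dumps(policy, indent=2))
```

No long-term keys change hands: the partner obtains temporary credentials by assuming the role, and the customer can revoke access by editing or deleting the role.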

1.2.1.6. My Exam Tips

1.2.1.6.1. IAM Roles for Tasks

1.2.1.6.2. API Gateway

1.2.1.6.3. To ensure secure access to AWS resources from EC2 Instances, always assign a role to the EC2 Instance.

1.2.2. AWS Active Directory (AD)

1.2.2.1. AWS Directory Service / AWS Managed Microsoft AD

1.2.2.1.1. AWS Directory Service for Microsoft Active Directory, also known as AWS Managed Microsoft AD, enables your directory-aware workloads and AWS resources to use managed Active Directory in the AWS Cloud.

1.2.2.1.2. You can use standard Active Directory administration tools and take advantage of built-in Active Directory features, such as Group Policy and single sign-on (SSO).

1.2.2.1.3. With AWS Managed Microsoft AD, you can easily join Amazon EC2 and Amazon RDS for SQL Server instances to your domain, and use AWS Enterprise IT applications such as Amazon WorkSpaces with Active Directory users and groups.

1.2.2.1.4. AWS Managed Microsoft AD is built on actual Microsoft Active Directory and does NOT require you to synchronize or replicate data from your existing Active Directory to the cloud.

1.2.2.1.5. AWS Managed Microsoft AD can be used as your Active Directory from on-premises networks connected over VPN or AWS Direct Connect.

1.2.2.2. Simple AD

1.2.2.2.1. Simple AD provides a subset of the features offered by AWS Managed Microsoft AD, including the ability to manage user accounts and group memberships, create and apply group policies, securely connect to Amazon EC2 instances, and provide Kerberos-based single sign-on (SSO)

1.2.2.2.2. However, note that Simple AD does not support features such as trust relationships with other domains, Active Directory Administrative Center, or PowerShell support.

1.2.2.3. AD Connector

1.2.2.3.1. AD Connector is a directory gateway with which you can redirect directory requests to your on-premises Microsoft Active Directory without caching any information in the cloud.

1.2.2.3.2. AD Connector offers the following benefits:

1.2.2.3.3. My Exam Tips

1.2.3. Amazon Cognito

1.2.3.1. Amazon Cognito lets you add user sign-up, sign-in, and access control to your web and mobile apps quickly and easily.

1.2.3.2. Amazon Cognito scales to millions of users and supports sign-in with social identity providers, such as Facebook, Google, and Amazon, and enterprise identity providers via SAML 2.0.

1.2.3.3. Amazon Cognito User Pools

1.2.3.3.1. Intro

1.2.3.3.2. OpenID Connect Providers (Identity Pools)

1.2.4. AWS Single Sign-On (SSO)

1.2.4.1. Intro

1.2.4.1.1. AWS Single Sign-On (SSO) is a cloud SSO service that makes it easy to centrally manage SSO access to multiple AWS accounts and business applications.

1.2.4.1.2. Enable a highly available SSO service without the upfront investment and on-going maintenance costs of operating your own SSO infrastructure.

1.2.4.1.3. Easily manage SSO access and user permissions to all of your accounts in AWS Organizations centrally.

1.2.4.2. Verification Types

1.2.4.2.1. Context-aware Two-step Verification

1.2.4.2.2. Always-on Verification

1.2.4.3. Permission Sets

1.2.4.3.1. A permission set is a collection of administrator-defined policies that AWS SSO uses to determine a user's effective permissions to access a given AWS account.

1.2.4.3.2. Permission sets can contain either AWS managed policies or custom policies that are stored in AWS SSO. Policies are essentially documents that act as containers for one or more permission statements.

1.2.4.3.3. These statements represent individual access controls (allow or deny) for various tasks that determine what tasks users can or cannot perform within the AWS account.

1.3. Security

1.3.1. AWS Certificate Manager (SSL/TLS)

1.3.1.1. AWS Certificate Manager is a service that lets you easily provision, manage, and deploy public and private Secure Sockets Layer/Transport Layer Security (SSL/TLS) certificates for use with AWS services and your internal connected resources.

1.3.2. AWS Key Management Service (KMS)

1.3.2.1. AWS Key Management Service (KMS) makes it easy for you to create and manage keys and control the use of encryption across a wide range of AWS services and in your applications.

1.3.2.2. AWS KMS is integrated with other AWS services including Amazon EBS, S3, Redshift, RDS, and others, to make it simple to encrypt your data with encryption keys that you manage.

1.3.2.3. Customer Master Keys (CMKs)

1.3.2.3.1. The primary resources in AWS KMS are customer master keys (CMKs).

1.3.2.3.2. You can use a CMK to encrypt and decrypt up to 4 KB (4096 bytes) of data. Typically, you use CMKs to generate, encrypt, and decrypt the data keys that you use outside of AWS KMS to encrypt your data. This strategy is known as envelope encryption.

1.3.2.3.3. A customer managed CMK generates a plaintext data key and an encrypted copy of that data key. Documents are encrypted with the plaintext data key. After encryption, the plaintext data key should be deleted to prevent misuse, and the encrypted data key is stored along with the encrypted data (e.g. in S3 buckets).

1.3.2.3.4. During decryption, the encrypted data key is decrypted with the customer CMK into a plaintext key, which is then used to decrypt the documents. Envelope encryption thus ensures that data is protected by a data key, which is in turn protected by another key.
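
The envelope encryption flow above can be traced end to end in a self-contained sketch. To stay within the Python standard library it uses a toy SHA-256 keystream cipher in place of a real algorithm such as AES-GCM, and a local random `MASTER_KEY` in place of a CMK; neither is secure or how KMS works internally. With the real service, the data key would come from the KMS `GenerateDataKey` operation and be unwrapped with `Decrypt`.

```python
import hashlib
import secrets

NONCE_SIZE = 16


def _toy_stream_xor(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """XOR data with a SHA-256-derived keystream.

    Toy cipher for illustration only: no integrity protection, and it
    stands in for a real algorithm such as AES-GCM.
    """
    out = bytearray()
    for offset in range(0, len(data), 32):
        counter = (offset // 32).to_bytes(8, "big")
        block = hashlib.sha256(key + nonce + counter).digest()
        chunk = data[offset:offset + 32]
        out.extend(b ^ k for b, k in zip(chunk, block))
    return bytes(out)


def toy_encrypt(key: bytes, plaintext: bytes) -> bytes:
    nonce = secrets.token_bytes(NONCE_SIZE)
    return nonce + _toy_stream_xor(key, nonce, plaintext)


def toy_decrypt(key: bytes, blob: bytes) -> bytes:
    nonce, ciphertext = blob[:NONCE_SIZE], blob[NONCE_SIZE:]
    return _toy_stream_xor(key, nonce, ciphertext)


# Stand-in for the CMK. In AWS KMS the CMK never leaves the service.
MASTER_KEY = secrets.token_bytes(32)


def envelope_encrypt(document: bytes) -> tuple[bytes, bytes]:
    data_key = secrets.token_bytes(32)                       # plaintext data key
    encrypted_data_key = toy_encrypt(MASTER_KEY, data_key)   # data key wrapped by the "CMK"
    encrypted_document = toy_encrypt(data_key, document)     # document encrypted by data key
    del data_key  # drop our reference; Python cannot reliably wipe memory
    return encrypted_data_key, encrypted_document            # store both, e.g. in S3


def envelope_decrypt(encrypted_data_key: bytes, encrypted_document: bytes) -> bytes:
    data_key = toy_decrypt(MASTER_KEY, encrypted_data_key)   # unwrap with the "CMK"
    return toy_decrypt(data_key, encrypted_document)


if __name__ == "__main__":
    wrapped_key, ciphertext = envelope_encrypt(b"quarterly report")
    print(envelope_decrypt(wrapped_key, ciphertext))
```

Only the small data key ever goes through the master key, which is why the 4 KB limit on direct CMK encryption does not restrict the size of the documents.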

1.3.2.4. My Exam Tips

1.3.2.4.1. Used to encrypt data at rest (e.g. EBS volumes, S3)

1.3.2.4.2. KMS is offered in several regions, but keys are not transferrable out of the region they were created in (keys are region specific)

1.3.2.4.3. When automatic key rotation is enabled, KMS rotates keys annually and uses the appropriate key to perform cryptographic operations

1.3.2.4.4. In AWS, data can be encrypted with KMS keys or with keys provided by your company

1.3.3. AWS Security Token Service (STS)

1.3.3.1. The AWS Security Token Service (STS) is a web service that enables you to request temporary, limited-privilege credentials for AWS Identity and Access Management (IAM) users or for users that you authenticate (federated users).

1.3.4. AWS Web Application Firewall (WAF)

1.3.4.1. Intro

1.3.4.1.1. AWS WAF is a web application firewall that helps protect your web applications from common web exploits that could affect application availability, compromise security, or consume excessive resources.

1.3.4.1.2. AWS WAF gives you control over which traffic to allow or block to your web applications by defining customizable web security rules.

1.3.4.1.3. You can use AWS WAF to create custom rules that block common attack patterns, such as SQL injection or cross-site scripting, and rules that are designed for your specific application.

1.3.4.1.4. New rules can be deployed within minutes, letting you respond quickly to changing traffic patterns. Also, AWS WAF includes a full-featured API that you can use to automate the creation, deployment, and maintenance of web security rules. With AWS WAF you pay only for what you use.

1.3.4.2. Pricing

1.3.4.2.1. AWS WAF pricing is based on how many rules you deploy and how many web requests your web application receives. There are no upfront commitments. You can deploy AWS WAF on either Amazon CloudFront as part of your CDN solution, the Application Load Balancer (ALB) that fronts your web servers or origin servers running on EC2, or Amazon API Gateway for your APIs.

1.3.5. AWS CloudHSM (Hardware Security Module)

1.3.5.1. AWS CloudHSM is a cloud-based hardware security module (HSM) that enables you to easily generate and use your own encryption keys on the AWS Cloud.

1.3.5.2. CloudHSM is standards-compliant and enables you to export all of your keys to most other commercially-available HSMs, subject to your configurations.

1.3.5.3. It is a fully-managed service that automates time-consuming administrative tasks for you, such as hardware provisioning, software patching, high-availability, and backups.

1.3.5.4. CloudHSM also enables you to scale quickly by adding and removing HSM capacity on-demand, with no up-front costs.

1.4. Storage

1.4.1. S3

1.4.1.1. The Basics

1.4.1.1.1. Intro

1.4.1.1.2. Features

1.4.1.1.3. Data Consistency Model For S3

1.4.1.1.4. S3 - What You Are Charged For

1.4.1.2. Buckets

1.4.1.2.1. Files are stored in Buckets.

1.4.1.2.2. S3 bucket names share a universal namespace; that is, names must be globally unique. Example: https://s3-eu-west-1.amazonaws.com/acloudguru
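
Global uniqueness can only be checked by S3 itself, but the format of a candidate name can be checked locally. The sketch below covers a subset of the S3 bucket naming rules (3 to 63 characters; lowercase letters, digits, dots and hyphens; starts and ends with a letter or digit; no adjacent periods; not formatted like an IP address). The function name is hypothetical and the check is not exhaustive.

```python
import ipaddress
import re

# 3-63 characters of lowercase letters, digits, dots and hyphens,
# starting and ending with a letter or digit.
_NAME_RE = re.compile(r"[a-z0-9][a-z0-9.-]{1,61}[a-z0-9]")


def looks_like_valid_bucket_name(name: str) -> bool:
    """Check a candidate name against a subset of S3's naming rules.

    Passing this check does not mean the name is available: another
    account may already own it in the global namespace.
    """
    if not _NAME_RE.fullmatch(name):
        return False
    if ".." in name:
        return False  # no adjacent periods
    try:
        ipaddress.IPv4Address(name)
        return False  # must not be formatted like an IP address
    except ValueError:
        return True


if __name__ == "__main__":
    for candidate in ["acloudguru", "My-Bucket", "192.168.5.4"]:
        print(candidate, looks_like_valid_bucket_name(candidate))
```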

1.4.1.3. S3 - Storage Tiers/Classes

1.4.1.3.1. S3 Standard

1.4.1.3.2. S3 - IA (Infrequent Access)

1.4.1.3.3. S3 One Zone - IA

1.4.1.4. S3 Glacier

1.4.1.4.1. Intro

1.4.1.4.2. Types

1.4.1.5. Lifecycle Management

1.4.1.5.1. To manage your objects so that they are stored cost effectively throughout their lifecycle, configure their lifecycle.

1.4.1.5.2. A lifecycle configuration is a set of rules that define actions that Amazon S3 applies to a group of objects. There are two types of actions:

1.4.1.5.3. Examples
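
As an example, a lifecycle configuration typically combines transition actions (move objects to a cheaper storage class) with expiration actions (delete objects). The rule below is hypothetical (prefix, day counts and ID are illustrative); the dict follows the shape accepted by boto3's `put_bucket_lifecycle_configuration(Bucket=..., LifecycleConfiguration=...)`. The `storage_class_after` helper is a simplified local simulation, not an S3 API; real transitions are applied asynchronously by S3.

```python
# Hypothetical rule: objects under "logs/" move to Standard-IA after 30 days,
# to Glacier after 90 days, and are deleted after 365 days.
LIFECYCLE_CONFIGURATION = {
    "Rules": [
        {
            "ID": "archive-then-expire-logs",
            "Filter": {"Prefix": "logs/"},
            "Status": "Enabled",
            # Transition actions: change the storage class over time.
            "Transitions": [
                {"Days": 30, "StorageClass": "STANDARD_IA"},
                {"Days": 90, "StorageClass": "GLACIER"},
            ],
            # Expiration action: delete the object.
            "Expiration": {"Days": 365},
        }
    ]
}


def storage_class_after(days: int, rule: dict) -> str:
    """Simulate the storage class of an object `days` days after creation
    under a single rule, or "EXPIRED" once the expiration action applies."""
    if days >= rule["Expiration"]["Days"]:
        return "EXPIRED"
    current = "STANDARD"
    for transition in sorted(rule["Transitions"], key=lambda t: t["Days"]):
        if days >= transition["Days"]:
            current = transition["StorageClass"]
    return current


if __name__ == "__main__":
    rule = LIFECYCLE_CONFIGURATION["Rules"][0]
    for age in (0, 45, 120, 400):
        print(age, storage_class_after(age, rule))
```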

1.4.1.6. Security

1.4.1.6.1. By default, all newly created buckets are PRIVATE

1.4.1.6.2. You can set up access control to your buckets using:

1.4.1.6.3. S3 buckets can be configured to create access logs, which log all requests made to the S3 bucket. These logs can be delivered to another bucket.

1.4.1.7. Encryption

1.4.1.7.1. Server Side Encryption (At Rest)

1.4.1.7.2. In Transit

1.4.1.8. Cross-Region Replication (CRR)

1.4.1.8.1. Cross-region replication supports designs for high availability and fault tolerance in case of a disaster

1.4.1.8.2. Cross-region replication enables automatic, asynchronous copying of objects across buckets in different AWS Regions.

1.4.1.8.3. Buckets configured for cross-region replication can be owned by the same AWS account or by different accounts.

1.4.1.8.4. In the minimum configuration, you provide the following:

1.4.1.8.5. Versioning must be enabled on both the source and destination buckets.

1.4.1.8.6. Objects that already exist in the bucket are not replicated automatically, but all subsequently uploaded or updated objects are replicated automatically.

1.4.1.8.7. Deletions of individual versions or of delete markers are not replicated; delete markers themselves are replicated.

1.4.1.8.8. My Exam Tips

1.4.1.9. Configuring Amazon S3 Event Notifications

1.4.1.9.1. The Amazon S3 notification feature enables you to receive notifications when certain events happen in your bucket.

1.4.1.9.2. To enable notifications, you must first add a notification configuration identifying the events you want Amazon S3 to publish, and the destinations where you want Amazon S3 to send the event notifications.

1.4.1.9.3. You store this configuration in the notification subresource (see Bucket Configuration Options) associated with a bucket. Amazon S3 provides an API for you to manage this subresource.

1.4.1.10. Transfer Acceleration

1.4.1.10.1. S3 Transfer Acceleration utilises the CloudFront Edge Network to accelerate your uploads to S3.

1.4.1.10.2. Instead of uploading directly to your S3 bucket, you can use a distinct URL to upload directly to an edge location which will then transfer that file to S3.

1.4.1.10.3. When using Transfer Acceleration, additional data transfer charges may apply.

1.4.1.11. S3 Increased Request Rate

1.4.1.11.1. Amazon S3 now provides increased performance to support at least 3,500 requests per second to add data and 5,500 requests per second to retrieve data, which can save significant processing time for no additional charge.

1.4.1.11.2. Each S3 prefix can support these request rates, making it simple to increase performance significantly.

1.4.1.11.3. Amazon S3’s support for parallel requests means you can scale your S3 performance by the factor of your compute cluster, without making any customizations to your application.

1.4.1.11.4. Performance scales per prefix, so you can use as many prefixes as you need in parallel to achieve the required throughput. There are no limits to the number of prefixes.

1.4.1.11.5. More info
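
Because these rates apply per prefix, aggregate throughput grows with the number of prefixes the load is spread across. The sketch below does that arithmetic and shows one hypothetical key scheme (hashing the object name into a fixed number of prefixes such as `part-07/`). The scheme is an illustration, not an S3 requirement, and the totals are upper bounds that assume load is spread evenly.

```python
import hashlib

# Per-prefix rates quoted in the notes above.
WRITE_RPS_PER_PREFIX = 3_500  # requests per second to add data
READ_RPS_PER_PREFIX = 5_500   # requests per second to retrieve data


def aggregate_rates(num_prefixes: int) -> tuple[int, int]:
    """Upper-bound (write, read) rates when load is spread evenly."""
    return num_prefixes * WRITE_RPS_PER_PREFIX, num_prefixes * READ_RPS_PER_PREFIX


def prefixed_key(object_name: str, num_prefixes: int) -> str:
    """Hypothetical key scheme: assign each object to one of N prefixes by hash."""
    digest = hashlib.sha256(object_name.encode()).hexdigest()
    index = int(digest, 16) % num_prefixes
    return f"part-{index:02d}/{object_name}"


if __name__ == "__main__":
    print(aggregate_rates(10))
    print(prefixed_key("photo.jpg", 10))
```

For example, spreading a workload over 10 prefixes allows up to 35,000 write and 55,000 read requests per second.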

1.4.1.12. MFA Delete

1.4.1.12.1. You can optionally add another layer of security by configuring a bucket to enable MFA Delete, which requires additional authentication for either of the following operations:

1.4.1.12.2. MFA Delete, part of S3 Versioning, uses multi-factor authentication to provide an additional layer of security.

1.4.1.13. Uploading Objects to Buckets Using Presigned URLs

1.4.1.13.1. A presigned URL gives you access to the object identified in the URL, provided that the creator of the presigned URL has permissions to access that object.

1.4.1.13.2. That is, if you receive a presigned URL to upload an object, you can upload the object only if the creator of the presigned URL has the necessary permissions to upload that object.

1.4.1.13.3. All objects and buckets by default are private. The presigned URLs are useful if you want your user/customer to be able to upload a specific object to your bucket, but you don't require them to have AWS security credentials or permissions.

1.4.1.13.4. When you create a presigned URL, you must provide your security credentials and then specify a bucket name, an object key, an HTTP method (PUT for uploading objects), and an expiration date and time. The presigned URLs are valid only for the specified duration.
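
The idea behind a presigned URL (a signature over the HTTP method, object key and expiry, created with the creator's credentials) can be shown with a self-contained HMAC sketch. This is a hypothetical scheme, NOT AWS Signature Version 4: the secret, host and function names are placeholders. With boto3, a real upload URL would come from `s3_client.generate_presigned_url("put_object", Params={"Bucket": ..., "Key": ...}, ExpiresIn=3600)`.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlsplit

# Hypothetical values; real presigned URLs are signed with AWS credentials.
SIGNING_SECRET = b"example-signing-secret"
BASE_URL = "https://example-bucket.example.com"


def _sign(method: str, key: str, expires: int) -> str:
    """HMAC-SHA256 over the HTTP method, object key and expiry time."""
    message = f"{method}\n{key}\n{expires}".encode()
    return hmac.new(SIGNING_SECRET, message, hashlib.sha256).hexdigest()


def make_presigned_url(method: str, key: str, expires_in: int,
                       now: Optional[int] = None) -> str:
    """Create a URL permitting `method` on `key` until it expires.
    Assumes keys contain only URL-safe characters."""
    now = int(time.time()) if now is None else now
    expires = now + expires_in
    query = urlencode({"Expires": expires, "Signature": _sign(method, key, expires)})
    return f"{BASE_URL}/{key}?{query}"


def is_request_allowed(method: str, url: str, now: Optional[int] = None) -> bool:
    """Server-side check: not expired, and signature matches method, key and expiry."""
    now = int(time.time()) if now is None else now
    parts = urlsplit(url)
    params = parse_qs(parts.query)
    key = parts.path.lstrip("/")
    expires = int(params["Expires"][0])
    if now > expires:
        return False  # the URL is only valid for the specified duration
    return hmac.compare_digest(params["Signature"][0], _sign(method, key, expires))


if __name__ == "__main__":
    url = make_presigned_url("PUT", "uploads/report.pdf", expires_in=3600)
    print(url)
    print(is_request_allowed("PUT", url))
```

Because the method, key and expiry are all signed, the holder cannot reuse the URL for a different object, a different operation, or a longer period.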

1.4.1.14. My Exam Tips

1.4.1.14.1. Hosting a Static Website on Amazon S3

1.4.1.14.2. Hosting a Static Website on S3 using Your Own Domain

1.4.2. Storage Gateway

1.4.2.1. Introduction

1.4.2.1.1. AWS Storage Gateway is a service that connects an on-premises software appliance with cloud-based storage to provide seamless and secure integration between

1.4.2.1.2. The service enables you to securely store data to the AWS cloud for scalable and cost-effective storage.

1.4.2.1.3. AWS Storage Gateway's software appliance is available for download as a virtual machine (VM) image that you install on a host in your datacenter.

1.4.2.1.4. Storage Gateway supports either

1.4.2.1.5. Once you've installed your gateway and associated it with your AWS account through the activation process, you can use the AWS Management Console to create the storage gateway option that is right for you.

1.4.2.2. Types

1.4.2.2.1. File Gateway (Network File System, NFS)

1.4.2.2.2. Volume Gateway / iSCSI (Stored/Cached)

1.4.2.2.3. Tape Gateway (VTL)

1.5. Compute

1.5.1. EC2

1.5.1.1. Intro

1.5.1.1.1. Amazon Elastic Compute Cloud (Amazon EC2) is a web service that provides secure, resizable compute capacity in the cloud. It is designed to make web-scale cloud computing easier for developers.

1.5.1.1.2. Amazon EC2 reduces the time required to obtain and boot new server instances to minutes, allowing you to quickly scale capacity, both up and down, as your computing requirements change.

1.5.1.1.3. Amazon EC2 changes the economics of computing by allowing you to pay only for capacity that you actually use.

1.5.1.1.4. Amazon EC2 provides developers the tools to build failure resilient applications and isolate them from common failure scenarios.

1.5.1.2. Pricing Options

1.5.1.2.1. On Demand

1.5.1.2.2. Spot

1.5.1.2.3. Reserved

1.5.1.2.4. Dedicated Hosts

1.5.1.3. EC2 Instance Types

1.5.1.3.1. Letters

1.5.1.3.2. Types

1.5.1.3.3. How to Remember

1.5.1.4. Amazon Machine Image (AMI)

1.5.1.4.1. Intro

1.5.1.4.2. You can select your AMI based on:

1.5.1.4.3. AMIs are regional: by default, an AMI you create is available only in the region where you created it. If you need the AMI in multiple regions, you have to copy it to each of those regions.

1.5.1.5. EC2 Storage

1.5.1.5.1. EBS Volume

1.5.1.5.2. Elastic File System (EFS)

1.5.1.6. Instance Metadata

1.5.1.6.1. To retrieve the list of metadata categories

1.5.1.6.2. To retrieve a specific metadata value
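
From inside an instance, metadata is served at the link-local address `169.254.169.254`: requesting `/latest/meta-data/` lists the available categories, and appending a name such as `local-ipv4` or `instance-id` returns that value. The sketch below uses only the standard library and the IMDSv2 session-token flow (a `PUT` to `/latest/api/token`, then the token in the `X-aws-ec2-metadata-token` header). The function names are hypothetical, and `get_metadata` only works when run on an EC2 instance.

```python
import urllib.request

IMDS_BASE = "http://169.254.169.254/latest"


def metadata_url(path: str = "") -> str:
    """Build an instance metadata URL; an empty path lists the categories."""
    return f"{IMDS_BASE}/meta-data/{path.lstrip('/')}"


def get_metadata(path: str = "", timeout: float = 2.0) -> str:
    """Fetch a metadata value using an IMDSv2 session token (EC2 only)."""
    token_request = urllib.request.Request(
        f"{IMDS_BASE}/api/token",
        method="PUT",
        headers={"X-aws-ec2-metadata-token-ttl-seconds": "21600"},
    )
    with urllib.request.urlopen(token_request, timeout=timeout) as response:
        token = response.read().decode()

    request = urllib.request.Request(
        metadata_url(path),
        headers={"X-aws-ec2-metadata-token": token},
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return response.read().decode()


if __name__ == "__main__":
    print(metadata_url())             # list of categories
    print(metadata_url("local-ipv4")) # one specific value
```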

1.5.1.7. EBS Snapshots

1.5.1.7.1. Snapshots on S3

1.5.1.7.2. Amazon Data Lifecycle Manager (DLM)

1.5.1.8. EC2 Placement Groups

1.5.1.8.1. Types

1.5.1.8.2. Exam Tips

1.5.1.9. My Exam Tips

1.5.1.9.1. You can create an AMI from an EC2 instance and then copy it to another region. In case of a disaster, an EC2 instance can be launched from that AMI.

1.5.1.9.2. Termination Protection

1.5.1.9.3. EC2 instances run inside a VPC, hence EC2 traffic can be monitored with VPC Flow Logs

1.5.1.9.4. EC2 Instance States

1.5.1.9.5. By default, you can run up to 20 On-Demand EC2 instances for most instance types. If you need more, you have to submit a limit increase request to AWS.

1.5.2. Lambda Functions

1.5.2.1. Intro

1.5.2.1.1. AWS Lambda is a compute service where you can upload your code and create a Lambda function.

1.5.2.1.2. AWS Lambda takes care of provisioning and managing the servers that you use to run the code.

1.5.2.1.3. You don't have to worry about operating systems, patching, scaling, etc. You can use Lambda in the following ways.

1.5.2.1.4. Provides auto scaling

1.5.2.2. Lambda Triggers

1.5.2.2.1. API Gateway

1.5.2.2.2. AWS IoT

1.5.2.2.3. Alexa Skills Kit

1.5.2.2.4. Alexa Smart Home

1.5.2.2.5. CloudFront

1.5.2.2.6. CloudWatch Events

1.5.2.2.7. CloudWatch Logs

1.5.2.2.8. CodeCommit

1.5.2.2.9. Cognito Sync Trigger

1.5.2.2.10. DynamoDB

1.5.2.2.11. Kinesis

1.5.2.2.12. SNS

1.5.2.3. Supported Languages

1.5.2.3.1. Node.js

1.5.2.3.2. Python

1.5.2.3.3. Java

1.5.2.3.4. C#

1.5.2.4. Pricing

1.5.2.4.1. Number of requests

1.5.2.4.2. Duration

1.5.2.4.3. Memory

1.5.2.4.4. Additional Charges
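
The request, duration and memory dimensions combine into a simple estimate: requests are billed per million, and compute is billed in GB-seconds (allocated memory in GB multiplied by execution time in seconds). The rates below are assumed example values (check the current AWS Lambda pricing page), and the sketch ignores the free tier, duration rounding and additional charges such as data transfer.

```python
# Assumed example rates; verify against current AWS Lambda pricing.
PRICE_PER_MILLION_REQUESTS = 0.20   # USD
PRICE_PER_GB_SECOND = 0.0000166667  # USD


def gb_seconds(requests: int, avg_duration_ms: float, memory_mb: int) -> float:
    """Total compute in GB-seconds: invocations x seconds x GB of memory."""
    return requests * (avg_duration_ms / 1000) * (memory_mb / 1024)


def lambda_cost(requests: int, avg_duration_ms: float, memory_mb: int) -> float:
    """Estimated cost (USD) from request and duration/memory charges only."""
    request_cost = requests / 1_000_000 * PRICE_PER_MILLION_REQUESTS
    compute_cost = gb_seconds(requests, avg_duration_ms, memory_mb) * PRICE_PER_GB_SECOND
    return request_cost + compute_cost


if __name__ == "__main__":
    # 3 million invocations, 1 second each, 512 MB of memory.
    print(gb_seconds(3_000_000, 1000, 512))
    print(round(lambda_cost(3_000_000, 1000, 512), 2))
```

For 3 million one-second invocations at 512 MB this gives 1,500,000 GB-seconds; doubling the memory doubles the compute part of the bill even if the duration stays the same.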

1.5.2.5. Lambda - Exam Tips

1.5.2.5.1. Lambda scales out (not up) automatically

1.5.2.5.2. Lambda functions are independent, 1 event = 1 function

1.5.2.5.3. Lambda is serverless

1.5.2.5.4. Lambda functions can trigger other lambda functions, 1 event can = x functions if functions trigger other functions

1.5.2.5.5. Architectures can get extremely complicated; AWS X-Ray allows you to debug what is happening

1.5.2.5.6. Lambda can do things globally; for example, you can use it to back up S3 buckets to other S3 buckets

1.5.2.6. Environment variables

1.5.2.6.1. Environment variables for Lambda functions enable you to dynamically pass settings to your function code and libraries, without making changes to your code.

1.5.2.6.2. Environment variables are key-value pairs that you create and modify as part of your function configuration, using either the AWS Lambda Console, the AWS Lambda CLI or the AWS Lambda SDK.

1.5.2.6.3. AWS Lambda then makes these key value pairs available to your Lambda function code using standard APIs supported by the language, like process.env for Node.js functions.
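
In a Python function the counterpart of `process.env` is `os.environ`. The handler below is a minimal sketch: `TABLE_NAME` and `LOG_LEVEL` are hypothetical variable names that would be set in the function's configuration, and the return value is shaped like an API Gateway proxy response as an assumption. Changing either setting requires no change to the code.

```python
import json
import os


def lambda_handler(event, context):
    """Read settings from environment variables instead of hard-coding them.

    TABLE_NAME is required; LOG_LEVEL falls back to "INFO" when unset.
    """
    table_name = os.environ["TABLE_NAME"]
    log_level = os.environ.get("LOG_LEVEL", "INFO")
    return {
        "statusCode": 200,
        "body": json.dumps({"table": table_name, "logLevel": log_level}),
    }


if __name__ == "__main__":
    # Simulate the configuration Lambda would provide at runtime.
    os.environ.setdefault("TABLE_NAME", "orders-dev")
    print(lambda_handler({}, None))
```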

1.5.2.7. My Exam Tips

1.5.2.7.1. to ensure that the Lambda function has access to Amazon Aurora

1.5.3. Container Services

1.5.3.1. Amazon ECS (Docker)

1.5.3.1.1. Amazon Elastic Container Service (Amazon ECS) is a highly scalable, fast, container management service that makes it easy to run, stop, and manage Docker containers on a cluster.

1.5.3.1.2. Amazon ECS lets you launch and stop container-based applications with simple API calls, allows you to get the state of your cluster from a centralized service, and gives you access to many familiar Amazon EC2 features.

1.5.3.1.3. Tasks

1.5.3.1.4. Batch Job Workloads

1.5.3.1.5. Service Auto Scaling

1.5.3.2. Amazon Elastic Container Registry (ECR)

1.5.3.2.1. Amazon ECR is a fully managed Docker container registry that makes it easy for developers to store, manage, and deploy Docker container images.

1.5.3.2.2. Amazon ECR is integrated with Amazon Elastic Container Service (ECS), simplifying your development to production workflow.

1.5.3.2.3. Amazon ECR eliminates the need to operate your own container repositories or worry about scaling the underlying infrastructure.

1.5.3.2.4. Amazon ECR hosts your images in a highly available and scalable architecture, allowing you to reliably deploy containers for your applications.

1.5.3.2.5. Integration with AWS Identity and Access Management (IAM) provides resource-level control of each repository.

1.5.3.2.6. With Amazon ECR, there are no upfront fees or commitments. You pay only for the amount of data you store in your repositories and data transferred to the Internet

1.5.3.3. AWS Fargate

1.5.3.3.1. AWS Fargate is a compute engine for Amazon ECS that allows you to run containers without having to manage servers or clusters

1.5.3.4. Amazon Elastic Container Service for Kubernetes (EKS)

1.5.3.4.1. Amazon EKS makes it easy to deploy, manage, and scale containerized applications using Kubernetes on AWS.

1.5.3.4.2. Amazon EKS runs the Kubernetes management infrastructure for you across multiple AWS availability zones to eliminate a single point of failure.

1.5.3.4.3. Amazon EKS is certified Kubernetes conformant so you can use existing tooling and plugins from partners and the Kubernetes community.

1.5.3.4.4. Applications running on any standard Kubernetes environment are fully compatible and can be easily migrated to Amazon EKS.

1.5.4. AWS Batch

1.5.4.1. AWS Batch enables developers, scientists, and engineers to easily and efficiently run hundreds of thousands of batch computing jobs on AWS.

1.5.4.2. AWS Batch dynamically provisions the optimal quantity and type of compute resources (e.g., CPU or memory optimized instances) based on the volume and specific resource requirements of the batch jobs submitted.

1.5.4.3. With AWS Batch, there is no need to install and manage batch computing software or server clusters that you use to run your jobs, allowing you to focus on analyzing results and solving problems.

1.5.4.4. AWS Batch plans, schedules, and executes your batch computing workloads across the full range of AWS compute services and features, such as Amazon EC2 and Spot Instances.

1.5.5. AWS Auto Scaling

1.5.5.1. Provides the following Benefits

1.5.5.1.1. Better fault tolerance

1.5.5.1.2. Better availability

1.5.5.1.3. Scalability

1.5.5.2. EC2 Auto Scaling Groups

1.5.5.2.1. EC2 Auto Scaling helps you ensure that you have the correct number of EC2 instances available to handle the load for your application.

1.5.5.2.2. You create collections of EC2 instances, called Auto Scaling groups. You can specify the minimum number of instances in each Auto Scaling group, and Amazon EC2 Auto Scaling ensures that your group never goes below this size.

1.5.5.2.3. You can specify the maximum number of instances in each Auto Scaling group, and Amazon EC2 Auto Scaling ensures that your group never goes above this size.

1.5.5.2.4. Scaling Cooldown

1.5.5.3. Scaling Options

1.5.5.3.1. Scale based on demand

1.5.5.3.2. Scale based on a schedule

1.5.5.3.3. Manual scaling

1.5.5.4. Auto Scaling policy

1.5.5.4.1. Metric

1.5.5.4.2. If the conditions of the Auto Scaling group's scale-up policy have not yet been met, instances can reach 100% CPU utilization without the group scaling up.

1.5.5.5. My Exam Tips

1.5.5.5.1. If your scaling events are not based on the right metrics and do not have the right threshold defined, then the scaling will not occur as you want it to happen.
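
The two tips above can be seen in a deliberately simplified model of a threshold-based policy. The thresholds and the one-instance step are hypothetical, and real policies act on CloudWatch alarms evaluated over periods and respect cooldowns, which this sketch ignores. It shows that the desired capacity always stays within the group's minimum and maximum, and that a badly chosen threshold means no scaling happens even at 100% CPU.

```python
from dataclasses import dataclass


@dataclass
class ScalingGroup:
    min_size: int
    max_size: int
    desired: int


def apply_simple_policy(group: ScalingGroup, avg_cpu: float,
                        scale_out_threshold: float = 70.0,
                        scale_in_threshold: float = 30.0) -> int:
    """Return the new desired capacity under a hypothetical threshold policy.

    Adds one instance above the scale-out threshold, removes one below the
    scale-in threshold, and never leaves the [min_size, max_size] range.
    """
    desired = group.desired
    if avg_cpu > scale_out_threshold:
        desired += 1
    elif avg_cpu < scale_in_threshold:
        desired -= 1
    return max(group.min_size, min(group.max_size, desired))


if __name__ == "__main__":
    group = ScalingGroup(min_size=2, max_size=4, desired=3)
    print(apply_simple_policy(group, avg_cpu=85.0))   # scales out to 4
    print(apply_simple_policy(group, avg_cpu=100.0, scale_out_threshold=101.0))  # stays at 3
```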

1.5.6. My Exam Tips

1.5.6.1. The public IP address of an EC2 Instance is released after the instance is stopped and started

1.5.6.2. The following are important when specifying the components of an application architecture:

1.5.6.2.1. Determine the required I/O operations

1.5.6.2.2. Determine the MINIMUM MEMORY requirements for the application

1.6. Networking & Content Delivery

1.6.1. CloudFront CDN

1.6.1.1. Intro

1.6.1.1.1. Amazon CloudFront is a fast content delivery network (CDN) service that securely delivers DATA, VIDEOS, APPLICATIONS, and APIs to customers globally with low latency, high transfer speeds, all within a developer-friendly environment.

1.6.1.1.2. Can be used to deliver your entire website, including dynamic, static, streaming, and interactive content using a global network of edge locations.

1.6.1.1.3. Requests for your content are automatically routed to the nearest edge location, so content is delivered with the best possible performance.

1.6.1.2. Key Terminology

1.6.1.2.1. Edge Location

1.6.1.2.2. Distribution

1.6.1.2.3. Origin

1.6.1.2.4. Exam Tips

1.6.1.3. A content delivery network (CDN) is a system of distributed servers (network) that deliver webpages and other web content to a user based on

1.6.1.3.1. the geographic location of the user,

1.6.1.3.2. the origin of the webpage, and

1.6.1.3.3. the content delivery server.

1.6.1.4. Amazon CloudFront is optimized to work with other AWS services, e.g.:

1.6.1.4.1. Amazon Simple Storage Service (Amazon S3),

1.6.1.4.2. Amazon Elastic Compute Cloud (Amazon EC2),

1.6.1.4.3. Elastic Load Balancing,

1.6.1.4.4. Amazon Route 53.

1.6.1.4.5. Amazon CloudFront also works seamlessly with any non-AWS origin server, which stores the original, definitive versions of your files.

1.6.1.5. Origin Access Identity (OAI)

1.6.1.5.1. If you're using an Amazon S3 bucket as the origin for a CloudFront distribution, you can either allow everyone to have access to the files there, or you can restrict access using OAI

1.6.1.6. Caching Content Based on Query String Parameters

1.6.1.6.1. Supported only for web distributions, not RTMP distributions.

1.6.1.6.2. Parameter names & values used in query strings are CASE SENSITIVE.

1.6.1.6.3. It's recommended to cache based only on the parameters for which your origin returns different versions of an object.
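
The effect of these rules on caching can be modelled with a simplified cache key built from the path plus only the forwarded query parameters, compared case-sensitively. This is a hypothetical illustration: in CloudFront the behaviour is configured on the distribution's cache behaviour, not written as code, and the function and parameter names are assumptions.

```python
from urllib.parse import parse_qsl, urlsplit


def cache_key(url: str, forwarded_params: set[str]) -> tuple:
    """Build a simplified cache key from the path and selected query params.

    Names and values are compared case-sensitively, so ?color=Red and
    ?color=red map to different cached objects. Parameters that are not
    forwarded are ignored and do not fragment the cache.
    """
    parts = urlsplit(url)
    params = tuple(
        (name, value)
        for name, value in parse_qsl(parts.query, keep_blank_values=True)
        if name in forwarded_params
    )
    return (parts.path, params)


if __name__ == "__main__":
    forwarded = {"color"}
    print(cache_key("/shirt?color=Red&utm_source=ad", forwarded))
    print(cache_key("/shirt?color=red", forwarded))
```

Forwarding a parameter like `utm_source`, whose value does not change the response, would only create extra cache entries and lower the hit ratio.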

1.6.1.7. My Exam Tips

1.6.1.7.1. Increase the cache expiration time to enhance performance

1.6.2. VPC

1.6.2.1. Intro

1.6.2.1.1. Amazon Virtual Private Cloud (Amazon VPC) lets you provision a logically isolated section of the Amazon Web Services (AWS) Cloud where you can launch AWS resources in a virtual network that you define.

1.6.2.1.2. For example, you can create a public-facing subnet for your webservers that has access to the Internet, and place your backend systems such as databases or application servers in a private-facing subnet with no Internet access.

1.6.2.1.3. You have complete control over your virtual networking environment, including

1.6.2.1.4. You can leverage multiple layers of security, including security groups and network access control lists, to help control access to Amazon EC2 instances in each subnet.

1.6.2.1.5. Additionally, you can create a Hardware Virtual Private Network (VPN) connection between your corporate datacenter and your VPC and leverage the AWS cloud as an extension of your corporate datacenter.

1.6.2.1.6. A VPC spans all the Availability Zones in the region (Not multiple regions).

1.6.2.1.7. After creating a VPC, you can add one or more subnets in each Availability Zone.

1.6.2.1.8. What can you do with a VPC?

1.6.2.1.9. Default VPC vs Custom VPC

1.6.2.2. Key Terminology

1.6.2.2.1. Subnets

1.6.2.2.2. Security Groups

1.6.2.2.3. Network ACLs

1.6.2.2.4. Network ACL vs Security Groups

1.6.2.2.5. Route Tables

1.6.2.2.6. CIDR
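
CIDR notation determines how many addresses a VPC and each subnet get. The sketch below uses Python's `ipaddress` module to carve a VPC range into equal subnets. It assumes IPv4 and the AWS rules that VPC and subnet blocks must be between /16 and /28 and that AWS reserves five addresses in every subnet (the first four and the last); the function name is hypothetical.

```python
import ipaddress

RESERVED_PER_SUBNET = 5  # AWS reserves the first four and the last address


def split_vpc(vpc_cidr: str, new_prefix: int) -> list[tuple[str, int]]:
    """Split a VPC CIDR into equal subnets and report usable addresses in each."""
    vpc = ipaddress.ip_network(vpc_cidr)
    if not 16 <= vpc.prefixlen <= 28 or not vpc.prefixlen <= new_prefix <= 28:
        raise ValueError("VPC and subnet blocks must be between /16 and /28")
    return [
        (str(subnet), subnet.num_addresses - RESERVED_PER_SUBNET)
        for subnet in vpc.subnets(new_prefix=new_prefix)
    ]


if __name__ == "__main__":
    subnets = split_vpc("10.0.0.0/16", 24)
    print(len(subnets), subnets[0])
```

A /16 VPC split into /24 subnets yields 256 subnets of 251 usable addresses each.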

1.6.2.3. VPC Peering

1.6.2.3.1. Intro

1.6.2.3.2. Multiple VPC Peering Connections

1.6.2.3.3. You cannot create a VPC peering connection between VPCs with matching or overlapping IPv4 CIDR blocks.
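
The overlap rule can be checked before requesting a peering connection. This minimal sketch relies on `overlaps()` from Python's `ipaddress` module and considers only one IPv4 CIDR block per VPC; the function name is hypothetical.

```python
import ipaddress


def can_peer(cidr_a: str, cidr_b: str) -> bool:
    """Return False when two VPC IPv4 CIDR blocks match or overlap."""
    return not ipaddress.ip_network(cidr_a).overlaps(ipaddress.ip_network(cidr_b))


if __name__ == "__main__":
    print(can_peer("10.0.0.0/16", "10.1.0.0/16"))    # True: disjoint ranges
    print(can_peer("10.0.0.0/16", "10.0.128.0/17"))  # False: overlapping ranges
```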

1.6.2.4. VPC Endpoints

1.6.2.4.1. A VPC endpoint enables you to privately connect your VPC to supported AWS services and VPC endpoint services powered by PrivateLink without requiring:

1.6.2.4.2. Endpoints are virtual devices. They are horizontally scaled, redundant, and highly available VPC components that allow communication between instances in your VPC and services without imposing availability risks or bandwidth constraints on your network traffic.

1.6.2.4.3. Before VPC EndPoints

1.6.2.4.4. After VPC EndPoints

1.6.2.5. VPC Flow Logs

1.6.2.5.1. VPC Flow Logs is a feature that enables you to capture information about the IP traffic going to and from network interfaces in your VPC.

1.6.2.5.2. Flow log data is stored using Amazon CloudWatch Logs. After you've created a flow log, you can view and retrieve its data in Amazon CloudWatch Logs.

1.6.2.5.3. Flow logs can be created at 3 levels:

1.6.2.5.4. VPC Flow Logs Exam Tips
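
Once flow log records are retrieved from CloudWatch Logs, each record is a space-separated line. The sketch below assumes the default (version 2) record format, whose fields are listed in `DEFAULT_FIELDS`; custom formats would need a different field list. The sample records use made-up account and interface IDs, and the function names are hypothetical.

```python
# Field order of the default (version 2) flow log record format.
DEFAULT_FIELDS = [
    "version", "account-id", "interface-id", "srcaddr", "dstaddr",
    "srcport", "dstport", "protocol", "packets", "bytes",
    "start", "end", "action", "log-status",
]


def parse_flow_log(line: str) -> dict[str, str]:
    """Map one default-format flow log record to field names."""
    values = line.split()
    if len(values) != len(DEFAULT_FIELDS):
        raise ValueError(f"expected {len(DEFAULT_FIELDS)} fields, got {len(values)}")
    return dict(zip(DEFAULT_FIELDS, values))


def rejected(lines: list[str]) -> list[dict[str, str]]:
    """Return only the records whose traffic was rejected."""
    return [record for record in map(parse_flow_log, lines) if record["action"] == "REJECT"]


if __name__ == "__main__":
    sample = [
        # Made-up records: SSH (port 22, protocol 6 = TCP) rejected, HTTPS accepted.
        "2 123456789012 eni-0a1b2c3d4e5f67890 10.0.1.5 10.0.2.7 49152 22 6 10 840 1600000000 1600000060 REJECT OK",
        "2 123456789012 eni-0a1b2c3d4e5f67890 10.0.1.5 10.0.2.7 49153 443 6 20 4249 1600000000 1600000060 ACCEPT OK",
    ]
    for record in rejected(sample):
        print(record["srcaddr"], "->", record["dstaddr"], "port", record["dstport"])
```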

1.6.2.6. AWS VPN CloudHub

1.6.2.6.1. You can securely communicate from one site to another using the AWS VPN CloudHub. The AWS VPN CloudHub operates on a simple hub-and-spoke model that you can use with or without a VPC.

1.6.2.6.2. Use this design if you have multiple branch offices and existing internet connections and would like to implement a convenient, potentially low cost hub-and-spoke model for primary or backup connectivity between these remote offices.

1.6.2.6.3. The following figure depicts the AWS VPN CloudHub architecture, with blue dashed lines indicating network traffic between remote sites being routed over their AWS VPN connections.

1.6.2.7. Gateways

1.6.2.7.1. NAT Gateways

1.6.2.7.2. Bastion Hosts

1.6.2.7.3. Internet Gateways

1.6.2.8. VPC Exam Tips

1.6.2.8.1. Think of a VPC as a logical datacenter in AWS.

1.6.2.8.2. Consists of

1.6.2.8.3. 1 Subnet = 1 Availability Zone

1.6.2.8.4. Statefulness

1.6.2.8.5. NO TRANSITIVE PEERING
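
"No transitive peering" means traffic flows only between VPCs that share a direct peering connection: if A is peered with B and B with C, A still cannot reach C through B. The sketch models peerings as unordered pairs (the VPC names are hypothetical) and shows the consequence that connecting n VPCs to each other requires a full mesh of n(n-1)/2 peering connections.

```python
# Hypothetical peering connections: A<->B and B<->C, but no A<->C.
PEERINGS = {frozenset({"vpc-a", "vpc-b"}), frozenset({"vpc-b", "vpc-c"})}


def can_route_via_peering(src: str, dst: str, peerings=PEERINGS) -> bool:
    """Peered traffic flows only across a direct connection (no transit)."""
    return frozenset({src, dst}) in peerings


def full_mesh_connections(num_vpcs: int) -> int:
    """Peering connections needed for every VPC to reach every other VPC."""
    return num_vpcs * (num_vpcs - 1) // 2


if __name__ == "__main__":
    print(can_route_via_peering("vpc-a", "vpc-b"))  # True: direct peering
    print(can_route_via_peering("vpc-a", "vpc-c"))  # False: would need transit via vpc-b
    print(full_mesh_connections(4))
```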

1.6.3. ELB

1.6.3.1. Elastic Load Balancing automatically distributes incoming application traffic across multiple targets such as:

1.6.3.1.1. Amazon EC2 instances

1.6.3.1.2. Containers

1.6.3.1.3. IP addresses

1.6.3.1.4. Lambda functions.

1.6.3.2. It can handle the varying load of your application traffic in a single Availability Zone or across multiple Availability Zones.

1.6.3.3. Health Checks

1.6.3.3.1. To discover the availability of your EC2 instances, a load balancer periodically sends pings, attempts connections, or sends requests to test the EC2 instances.

1.6.3.3.2. These tests are called health checks. The status of the instances that are healthy at the time of the health check is InService. The status of any instances that are unhealthy at the time of the health check is OutOfService.

1.6.3.3.3. The load balancer performs health checks on all registered instances, whether the instance is in a healthy state or an unhealthy state. The load balancer routes requests only to the healthy instances.

1.6.3.3.4. When the load balancer determines that an instance is unhealthy, it stops routing requests to that instance. The load balancer resumes routing requests to the instance when it has been restored to a healthy state.
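The health check behaviour above can be modelled as a small state machine. In this Python sketch a target flips to OutOfService after a number of consecutive failed checks and back to InService after a number of consecutive successes; the threshold names mirror the Classic Load Balancer's healthy/unhealthy threshold settings, but the default values and the instance IDs are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class Target:
    instance_id: str
    healthy: bool = True
    consecutive_passes: int = 0
    consecutive_failures: int = 0

    @property
    def status(self) -> str:
        return "InService" if self.healthy else "OutOfService"


class HealthChecker:
    def __init__(self, healthy_threshold: int = 3, unhealthy_threshold: int = 2):
        self.healthy_threshold = healthy_threshold
        self.unhealthy_threshold = unhealthy_threshold

    def record(self, target: Target, passed: bool) -> None:
        """Apply one health check result to a registered target."""
        if passed:
            target.consecutive_passes += 1
            target.consecutive_failures = 0
            if not target.healthy and target.consecutive_passes >= self.healthy_threshold:
                target.healthy = True
        else:
            target.consecutive_failures += 1
            target.consecutive_passes = 0
            if target.healthy and target.consecutive_failures >= self.unhealthy_threshold:
                target.healthy = False


def routable(targets: List[Target]) -> List[str]:
    """The load balancer only routes requests to InService targets."""
    return [t.instance_id for t in targets if t.healthy]


a, b = Target("i-aaaa"), Target("i-bbbb")
checker = HealthChecker()
for _ in range(2):
    checker.record(b, passed=False)   # two failures: b goes OutOfService
print(b.status, routable([a, b]))     # OutOfService ['i-aaaa']
```

Checks keep running against the OutOfService target, which is how it can return to service once enough consecutive checks pass.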

1.6.3.4. Types

1.6.3.4.1. Application Load Balancer

1.6.3.4.2. Network Load Balancer

1.6.3.4.3. Classic Load Balancer

1.6.3.5. My Exam Tips

1.6.3.5.1. Attaching a Load Balancer to Your Auto Scaling Group

1.6.3.5.2. An internet-facing ELB needs to be placed in public subnets to allow access from the Internet.

1.6.3.5.3. ELB distributes incoming application traffic across multiple EC2 Instances in multiple Availability Zones NOT MULTIPLE REGIONS!

1.6.3.5.4. ELB provides FAULT TOLERANCE, but on its own it cannot provide complete disaster recovery for EC2 Instances, since it does not span regions.

1.6.3.5.5. It can handle the varying load of your application traffic in a SINGLE AVAILABILITY ZONE or across MULTIPLE AVAILABILITY ZONES in one region and NOT across multiple regions.

1.6.4. Route 53

1.6.4.1. Intro

1.6.4.1.1. Amazon Route 53 is a highly available and scalable cloud Domain Name System (DNS) web service.

1.6.4.1.2. It is designed to give developers and businesses an extremely reliable and cost effective way to route end users to Internet applications by translating names like www.example.com into the numeric IP addresses like 192.0.2.1 that computers use to connect to each other.

1.6.4.2. Routing Policies

1.6.4.2.1. Failover Routing

1.6.4.2.2. Simple Routing

1.6.4.2.3. Weighted Routing

1.6.4.2.4. Latency-based Routing

1.6.4.2.5. Geolocation Routing

1.6.4.2.6. Multivalue Answer Routing
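Weighted Routing is the easiest of these policies to illustrate numerically: each record receives roughly weight / (sum of weights) of the DNS answers. The Python sketch below only simulates that proportion with a seeded random generator; Route 53 itself does the selection server-side. It assumes that a weight of 0 means "never return this record" unless every record has weight 0, in which case answers are spread evenly. The IP addresses are documentation examples.

```python
import random
from collections import Counter
from typing import List, Tuple

Record = Tuple[str, int]  # (IP address, weight)


def pick_weighted(records: List[Record], rng: random.Random) -> str:
    """Return one record value, chosen with probability weight / total."""
    values = [value for value, _ in records]
    weights = [weight for _, weight in records]
    if sum(weights) == 0:
        return rng.choice(values)  # all-zero weights: equal probability
    return rng.choices(values, weights=weights, k=1)[0]


records = [("192.0.2.10", 70), ("192.0.2.20", 30), ("192.0.2.30", 0)]
rng = random.Random(42)
answers = Counter(pick_weighted(records, rng) for _ in range(10_000))
print(answers.most_common())
```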

1.6.4.3. Uses

1.6.4.3.1. Connects user requests to infrastructure running in AWS – such as

1.6.4.3.2. You can use Amazon Route 53 to configure DNS HEALTH CHECKS to route traffic to healthy endpoints or to independently monitor the health of your application and its endpoints.

1.6.4.3.3. Amazon Route 53 Traffic Flow makes it easy for you to manage traffic globally through a variety of routing types, including Latency Based Routing, Geo DNS, Geoproximity, and Weighted Round Robin, all of which can be combined with DNS Failover to enable a variety of low-latency, fault-tolerant architectures.

1.6.4.4. My Exam Notes

1.6.4.4.1. While ordinary Amazon Route 53 records are standard DNS records, alias records provide a Route 53–specific extension to DNS functionality.

1.6.5. ELB vs Route53

1.6.5.1. 1

1.6.5.1.1. In summary, I believe ELBs are intended to load balance across EC2 instances in a 'single' region.

1.6.5.1.2. Whereas DNS load-balancing (Route 53) is intended to help balance traffic 'across' regions. Route53 policies like geolocation may help direct traffic to preferred regions, then ELBs route between instances within one region.

1.6.5.1.3. Another difference is that DNS-based routing (e.g. Route 53) only changes the address that your clients' requests resolve to. On the other hand, an ELB actually reroutes traffic.

1.6.6. API Gateway

1.6.6.1. Intro

1.6.6.1.1. Amazon API Gateway is a fully managed service that makes it easy for developers to publish, maintain, monitor, and secure APIs at any scale.

1.6.6.1.2. With a few clicks in the AWS Management Console, you can create an API that acts as a "front door" for applications to access data, business logic, or functionality from your back-end services, such as

1.6.6.2. APIs Caching

1.6.6.2.1. You can enable API caching in Amazon API Gateway to cache your endpoint's response. With caching, you can reduce the number of calls made to your endpoint and also improve the latency of the requests to your API.

1.6.6.2.2. When you enable caching for a stage, API Gateway caches responses from your endpoint for a specified time-to-live (TTL) period, in seconds. API Gateway then responds to the request by looking up the endpoint response from the cache instead of making a request to your endpoint.
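The stage cache described above behaves like a TTL cache keyed by request. A minimal Python sketch, assuming a single cache key per method and path (API Gateway can also include chosen request parameters in the cache key); the 300-second TTL matches API Gateway's default, and the clock is injectable so the expiry can be tested. The backend function is hypothetical.

```python
import time
from typing import Any, Callable, Dict, Tuple


class TtlCache:
    """Serve cached responses until their TTL expires, then call the backend."""

    def __init__(self, ttl_seconds: float = 300,
                 clock: Callable[[], float] = time.monotonic):
        self.ttl = ttl_seconds
        self.clock = clock
        self._entries: Dict[str, Tuple[Any, float]] = {}

    def get(self, key: str, fetch: Callable[[], Any]) -> Any:
        now = self.clock()
        cached = self._entries.get(key)
        if cached is not None and now < cached[1]:
            return cached[0]                  # cache hit: backend not called
        response = fetch()                    # cache miss or expired entry
        self._entries[key] = (response, now + self.ttl)
        return response


# Usage with a fake clock and a counting backend.
now = [0.0]
backend_calls = []

def backend():
    backend_calls.append(now[0])
    return {"items": ["a", "b"]}

cache = TtlCache(ttl_seconds=300, clock=lambda: now[0])
cache.get("GET /items", backend)
cache.get("GET /items", backend)      # served from cache
now[0] = 301.0
cache.get("GET /items", backend)      # TTL expired: backend called again
print(len(backend_calls))             # 2
```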

1.6.6.3. Features

1.6.6.3.1. Low Cost & Efficient

1.6.6.3.2. Scales Effortlessly

1.6.6.3.3. You can Throttle Requests to prevent attacks

1.6.6.3.4. Connect to CloudWatch to log all requests
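AWS documents API Gateway throttling as a token bucket with a steady-state rate and a burst size. This Python sketch models one bucket; the rate and burst values are hypothetical, and a rejected request corresponds to API Gateway returning 429 Too Many Requests.

```python
import time
from typing import Callable


class TokenBucket:
    """Allow `rate` requests per second on average, with bursts up to `burst`."""

    def __init__(self, rate: float, burst: int,
                 clock: Callable[[], float] = time.monotonic):
        self.rate = rate
        self.capacity = burst
        self.tokens = float(burst)      # start full
        self.clock = clock
        self.last = clock()

    def allow(self) -> bool:
        now = self.clock()
        # Refill in proportion to elapsed time, never beyond the burst size.
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False                    # would be an HTTP 429 response


now = [0.0]
bucket = TokenBucket(rate=10, burst=5, clock=lambda: now[0])
print([bucket.allow() for _ in range(6)])   # five allowed, sixth throttled
```

The burst size absorbs short spikes, while the rate caps sustained traffic, which is what makes throttling useful against request floods.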

1.6.6.4. Cross Origin Resource Sharing (CORS)

1.6.6.4.1. Is a mechanism that allows restricted resources (e.g. fonts) on a web page to be requested from another domain outside the domain from which the first resource was served.

1.6.6.4.2. It is a security feature of modern web browsers. It enables web browsers to negotiate which domains can make requests of external websites or services.

1.6.6.4.3. Only these methods are supported: GET, PUT, POST, DELETE, HEAD

1.6.7. IP Addresses

1.6.7.1. Elastic IP Addresses

1.6.7.1.1. An Elastic IP address is a static IPv4 address designed for dynamic cloud computing. An Elastic IP address is associated with your AWS account. With an Elastic IP address, you can mask the failure of an instance or software by rapidly remapping the address to another instance in your account.

1.6.7.1.2. An Elastic IP address is reachable from the internet. If your instance does not have a public IPv4 address, you can associate an Elastic IP address with your instance to enable communication with the internet; for example, to connect to your instance from your local computer.

1.6.7.2. Private IPv4 Addresses

1.6.7.2.1. A private IPv4 address is an IP address that's not reachable over the Internet. You can use private IPv4 addresses for communication between instances in the same VPC.

1.6.7.3. Public IPv4 Addresses

1.6.7.3.1. A public IP address is an IPv4 address that's reachable from the Internet. You can use public addresses for communication between your instances and the Internet.
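A quick way to tell the two kinds of address apart is to check membership in the RFC 1918 private ranges, which are the ranges normally used for VPC CIDR blocks. The sketch uses Python's `ipaddress` module and checks the three RFC 1918 blocks explicitly, because `ip_address(...).is_private` also returns True for other reserved ranges (such as loopback and documentation addresses), which is broader than what this note means by private. Treating every other address as public is a simplification, and the sample addresses are arbitrary.

```python
import ipaddress

RFC1918_BLOCKS = [
    ipaddress.ip_network("10.0.0.0/8"),
    ipaddress.ip_network("172.16.0.0/12"),
    ipaddress.ip_network("192.168.0.0/16"),
]


def is_rfc1918(address: str) -> bool:
    """True if the IPv4 address falls in one of the RFC 1918 private blocks."""
    ip = ipaddress.ip_address(address)
    return any(ip in block for block in RFC1918_BLOCKS)


for addr in ["10.0.1.25", "172.31.5.9", "172.32.0.1", "54.239.28.85"]:
    print(addr, "private" if is_rfc1918(addr) else "public")
```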

1.6.8. Site-to-Site VPN Connection

1.6.8.1. Intro

1.6.8.1.1. Although the term VPN connection is a general term, in the documentation, a VPN connection refers to the connection between your VPC and your own on-premises network.

1.6.8.1.2. You can enable access to your remote network from your VPC by

1.6.8.2. Components

1.6.8.2.1. Virtual Private Gateway (VPG)

1.6.8.2.2. Customer Gateway

1.6.8.3. Key Terminology

1.6.8.3.1. Border Gateway Protocol (BGP)

1.6.8.3.2. Autonomous System Number (ASN)

1.6.8.4. Data is encrypted in transit (IPsec), in contrast to Direct Connect, which does not encrypt traffic by default.

1.6.9. AWS Direct Connect

1.6.9.1. Intro

1.6.9.1.1. AWS Direct Connect is a cloud service solution that makes it easy to establish a dedicated network connection (THAT DOES NOT INVOLVE INTERNET) from your premises to AWS.

1.6.9.1.2. AWS Direct Connect is a network service that provides an alternative to using the Internet to connect customers' on-premises sites to AWS.

1.6.9.1.3. Using AWS Direct Connect, you can establish private connectivity between AWS and your datacenter, office, or colocation environment, which in many cases can reduce your network costs, increase bandwidth throughput, and provide a more consistent network experience than Internet-based connections.

1.6.9.2. Benefits

1.6.9.2.1. Reduces Your Bandwidth Costs

1.6.9.2.2. Consistent Network Performance

1.6.9.2.3. Compatible with All AWS Services

1.6.9.2.4. Private Connectivity to Your Amazon VPC

1.6.9.2.5. Elastic and Simple

1.6.9.3. Does not encrypt traffic between AWS VPCs and the on-premises network by default.

1.6.10. AWS PrivateLink

1.6.10.1. AWS PrivateLink simplifies the security of data shared with cloud-based applications by eliminating the exposure of data to the public Internet.

1.6.10.2. AWS PrivateLink provides private connectivity between VPCs, AWS services, and on-premises applications, securely on the Amazon network.

1.6.10.3. AWS PrivateLink makes it easy to connect services across different accounts and VPCs to significantly simplify the network architecture.

1.6.11. DNS

1.6.11.1. Key Terminology

1.6.11.1.1. SOA Records

1.6.11.1.2. Domain Registrars

1.6.11.1.3. NS Records

1.6.11.1.4. A Records

1.6.11.1.5. CNAME Records

1.6.11.1.6. Alias Records

1.6.11.1.7. TTL

1.6.11.2. Exam Tips

1.6.11.2.1. ELBs do not have pre-defined IPv4 addresses; you resolve to them using a DNS name.

1.6.11.2.2. Understand the difference between an Alias Record and a CNAME.

1.6.11.2.3. Given the choice, always choose an Alias Record over a CNAME.

1.6.11.2.4. Common DNS Types

1.7. Databases

1.7.1. Amazon RDS

1.7.1.1. Intro

1.7.1.1.1. Amazon Relational Database Service (RDS) makes it easy to set up, operate, and scale a relational database in the cloud.

1.7.1.1.2. It provides cost-efficient and resizable capacity while automating time-consuming administration tasks such as hardware provisioning, database setup, patching and backups.

1.7.1.1.3. It frees you to focus on your applications so you can give them the fast performance, high availability, security and compatibility they need.

1.7.1.2. Amazon RDS database engines

1.7.1.2.1. SQL Server

1.7.1.2.2. Oracle

1.7.1.2.3. PostgreSQL

1.7.1.2.4. MySQL

1.7.1.2.5. Aurora

1.7.1.2.6. MariaDB

1.7.1.3. RDS Multi-AZ

1.7.1.3.1. Intro

1.7.1.3.2. Multi-AZ Databases

1.7.1.3.3. My Exam Tips

1.7.1.4. Read Replicas

1.7.1.4.1. Intro

1.7.1.4.2. Read Replica Databases

1.7.1.4.3. Notes

1.7.1.5. DB Parameter Group

1.7.1.5.1. You manage your DB engine configuration through the use of parameters in a DB parameter group.

1.7.1.5.2. DB parameter groups act as a container for engine configuration values that are applied to one or more DB instances.

1.7.1.5.3. A default DB parameter group is created if you create a DB instance without specifying a customer-created DB parameter group.

1.7.1.5.4. Each default DB parameter group contains database engine defaults and Amazon RDS system defaults based on the engine, compute class, and allocated storage of the instance.

1.7.1.5.5. You cannot modify the parameter settings of a default DB parameter group; you must create your own DB parameter group to change parameter settings from their default value.

1.7.1.5.6. Note that not all DB engine parameters can be changed in a customer-created DB parameter group.

1.7.1.6. Automated Backups

1.7.1.6.1. You can back up the data on your Amazon EBS volumes to Amazon S3 by taking point-in-time snapshots.

1.7.1.6.2. Snapshots are incremental backups, which means that only the blocks on the device that have changed after your most recent snapshot are saved.

1.7.1.6.3. This minimizes the time required to create the snapshot and saves on storage costs by not duplicating data.

1.7.1.6.4. When you delete a snapshot, only the data unique to that snapshot is removed. Each snapshot contains all of the information needed to restore your data (from the moment when the snapshot was taken) to a new EBS volume.

1.7.1.6.5. Disabling automated backups disables point-in-time recovery. If you disable and then re-enable automated backups, you are only able to restore starting from the time you re-enabled automated backups.

1.7.1.7. My Exam Tips

1.7.1.7.1. Supports up to 16 TB of storage.

1.7.1.7.2. RDS is a transactional (OLTP) database; it's not intended to store analytical data coming out of Kinesis.

1.7.1.7.3. RDS Encryption

1.7.2. DynamoDB

1.7.2.1. Intro

1.7.2.1.1. Amazon DynamoDB is a fast and flexible NoSQL database service for all applications that need consistent, single-digit millisecond latency at any scale.

1.7.2.1.2. It is a fully managed database and supports both document and key-value data models.

1.7.2.1.3. Its flexible data model and reliable performance make it a great fit for

1.7.2.1.4. Stored on SSD storage

1.7.2.1.5. Spread Across 3 geographically distinct data centres

1.7.2.2. Consistent Reads Types

1.7.2.2.1. Strongly Consistent Reads

1.7.2.2.2. Eventually Consistent Reads

1.7.2.3. Pricing

1.7.2.3.1. Provisioned Throughput Capacity

1.7.2.3.2. Storage costs of $0.25 per GB per month.
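Provisioned throughput is expressed in capacity units, and the arithmetic ties directly to the two read types above: one read capacity unit (RCU) covers one strongly consistent read per second, or two eventually consistent reads per second, for an item up to 4 KB; one write capacity unit (WCU) covers one write per second for an item up to 1 KB. A Python sketch of that calculation, ignoring transactional reads and writes (which cost more) and assuming item sizes are given in KB:

```python
import math

RCU_BLOCK_KB = 4   # each read unit covers up to 4 KB
WCU_BLOCK_KB = 1   # each write unit covers up to 1 KB


def read_capacity_units(item_kb: float, reads_per_second: int,
                        strongly_consistent: bool = True) -> int:
    units_per_read = math.ceil(item_kb / RCU_BLOCK_KB)  # round item size up
    total = units_per_read * reads_per_second
    if not strongly_consistent:
        total /= 2          # eventually consistent reads cost half
    return math.ceil(total)


def write_capacity_units(item_kb: float, writes_per_second: int) -> int:
    return math.ceil(item_kb / WCU_BLOCK_KB) * writes_per_second


print(read_capacity_units(6, 10))                 # 20
print(read_capacity_units(6, 10, False))          # 10
print(write_capacity_units(2.5, 5))               # 15
```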

1.7.2.4. Capturing Table Activity with DynamoDB Stream

1.7.2.4.1. DynamoDB Streams captures a time-ordered sequence of item-level modifications in any DynamoDB table and stores this information in a log for up to 24 hours.

1.7.2.4.2. Many applications can benefit from the ability to capture changes to items stored in a DynamoDB table, at the point in time when such changes occur.

1.7.2.4.3. Example use cases:

1.7.2.5. DynamoDB Auto Scaling

1.7.2.5.1. DynamoDB auto scaling uses the AWS Application Auto Scaling service to dynamically adjust provisioned throughput capacity on your behalf, in response to actual traffic patterns.

1.7.2.5.2. This enables a table or a global secondary index to increase its provisioned read and write capacity to handle sudden increases in traffic, without throttling.

1.7.2.5.3. When the workload decreases, Application Auto Scaling decreases the throughput so that you don't pay for unused provisioned capacity.

1.7.2.6. My Exam Tips

1.7.2.6.1. Amazon DynamoDB is schema-less, in that the data items in a table need not have the same attributes or even the same number of attributes.

1.7.2.6.2. The maximum item size in DynamoDB is 400KB.

1.7.2.6.3. Use Cases

1.7.2.7. Partition Key

1.7.2.7.1. A simple primary key, composed of one attribute known as the partition key.

1.7.2.7.2. Recommendations for partition-keys
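The usual recommendation for partition keys is high cardinality, because DynamoDB hashes the partition key to decide where an item is stored. The sketch below illustrates the effect with MD5 modulo a partition count; this is a hypothetical stand-in (DynamoDB's internal hash function and partition layout are not exposed), and the key names and partition count are made up. A single repeated key lands on one partition (a "hot" partition), while many distinct keys spread out.

```python
import hashlib
from collections import Counter
from typing import Iterable


def partition_for(key: str, partitions: int) -> int:
    """Hypothetical placement: hash the key and take it modulo the partition count."""
    digest = hashlib.md5(key.encode("utf-8")).digest()
    return int.from_bytes(digest, "big") % partitions


def spread(keys: Iterable[str], partitions: int) -> Counter:
    """Count how many items land on each partition."""
    return Counter(partition_for(k, partitions) for k in keys)


low_cardinality = spread(["status#ACTIVE"] * 1000, partitions=4)
high_cardinality = spread([f"user#{i}" for i in range(1000)], partitions=4)
print(low_cardinality)    # every item on a single partition
print(high_cardinality)   # items spread across all four partitions
```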

1.7.3. Redshift

1.7.3.1. Intro

1.7.3.1.1. Amazon Redshift is a fast and powerful, fully managed, petabyte-scale data warehouse service in the cloud.

1.7.3.1.2. Customers can start small for just $0.25 per hour with no commitments or upfront costs and scale to a petabyte or more for $1,000 per terabyte per year, less than a tenth of most other data warehousing solutions.

1.7.3.2. Redshift Configuration

1.7.3.2.1. Single Node (160 GB)

1.7.3.2.2. Multi-Node (Cluster)

1.7.3.2.3. My Exam Tips

1.7.3.3. Features

1.7.3.3.1. Faster

1.7.3.3.2. Massively Parallel Processing (MPP)

1.7.3.3.3. Advanced Compression

1.7.3.3.4. Redshift Availability

1.7.3.3.5. Security

1.7.3.4. Pricing

1.7.3.4.1. Compute Node Hours

1.7.3.4.2. Backup

1.7.3.4.3. Data transfer (only within a VPC, not outside it)

1.7.3.5. Amazon Redshift Enhanced VPC Routing

1.7.3.5.1. By using Enhanced VPC Routing, you can use standard VPC features, such as

1.7.3.5.2. You use these features to tightly manage the flow of data between your Amazon Redshift cluster and other resources.

1.7.3.5.3. When you use Enhanced VPC Routing to route traffic through your VPC, you can also use VPC flow logs to monitor COPY and UNLOAD traffic.

1.7.3.6. Snapshots

1.7.3.6.1. You can take a manual snapshot any time. By default, manual snapshots are retained indefinitely, even after you delete your cluster. You can specify the retention period when you create a manual snapshot, or you can change the retention period by modifying the snapshot.

1.7.3.6.2. Because manual snapshots accrue storage charges, it’s important that you manually delete them if you no longer need them.

1.7.3.6.3. Cross-Region Snapshot

1.7.3.7. My Exam Tips

1.7.3.7.1. Redshift is not designed for high-concurrency transactional workloads. If you expect a high concurrency workload that generally involves reading and writing all of the columns for a small number of records at a time, you should instead consider using Amazon RDS or Amazon DynamoDB.

1.7.4. Elasticache

1.7.4.1. Intro

1.7.4.1.1. ElastiCache is a web service that makes it easy to deploy, operate, and scale an in-memory cache in the cloud.

1.7.4.1.2. The service improves the performance of web applications by allowing you to retrieve information from fast, managed, in-memory caches, instead of relying entirely on slower disk-based databases.

1.7.4.1.3. Amazon ElastiCache can be used to significantly improve latency and throughput for many READ-HEAVY application workloads (such as social networking, gaming, media sharing and Q&A portals) or compute-intensive workloads (such as a recommendation engine).

1.7.4.1.4. Caching improves application performance by storing critical pieces of data in memory for low-latency access.

1.7.4.1.5. Cached information may include the results of I/O intensive database queries or the results of computationally-intensive calculations.

1.7.4.2. Types of Elasticache

1.7.4.2.1. Memcached

1.7.4.2.2. Redis

1.7.4.3. Elasticache Exam Tips

1.7.4.3.1. Typically you will be given a scenario where a particular database is under a lot of stress/load.

1.7.4.3.2. You may be asked which service you should use to alleviate this.

1.7.4.3.3. Elasticache is a good choice if your database is particularly read-heavy and not prone to frequent changes.

1.7.4.3.4. Redshift is a good answer if the reason your database is under stress is that management keeps running OLAP queries on it.

1.7.4.4. Use Cases

1.7.4.4.1. Can be used in front of a database to cache the common queries issued against the database. This can reduce the overall load on the database.

1.7.4.4.2. Real-time transactions

1.7.4.4.3. Chat

1.7.4.4.4. Cache

1.7.4.4.5. Session store

1.7.4.4.6. BI and analytics

1.7.4.4.7. Gaming leaderboards
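The first use case (caching common queries in front of a database) is usually implemented with the lazy-loading, or cache-aside, pattern. In this Python sketch a plain dict stands in for Memcached or Redis and a lookup function stands in for the database, so the names `CacheAside` and `load_user` are hypothetical; in practice you would use a Redis or Memcached client and set an expiry on each key.

```python
from typing import Any, Callable, Dict


class CacheAside:
    """Check the cache first; on a miss, read the database and populate the cache."""

    def __init__(self, load_from_db: Callable[[str], Any]):
        self.cache: Dict[str, Any] = {}   # stand-in for ElastiCache
        self.load_from_db = load_from_db
        self.db_reads = 0

    def get(self, key: str) -> Any:
        if key in self.cache:
            return self.cache[key]        # cache hit: no database load
        self.db_reads += 1
        value = self.load_from_db(key)    # cache miss: query the database
        self.cache[key] = value
        return value

    def invalidate(self, key: str) -> None:
        """Drop a key after the underlying row changes."""
        self.cache.pop(key, None)


database = {"user:1": {"name": "Ada"}}

def load_user(key: str) -> Any:
    return database[key]

users = CacheAside(load_user)
users.get("user:1")
users.get("user:1")
print(users.db_reads)   # 1
```

This also shows why ElastiCache suits read-heavy data that rarely changes: every write forces an invalidation and a later database read.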

1.7.5. Aurora

1.7.5.1. Intro

1.7.5.1.1. Amazon Aurora (Aurora) is a fully managed relational database engine that's compatible with MySQL and PostgreSQL. It combines the speed and availability of high-end commercial databases with the simplicity and cost-effectiveness of open source databases.

1.7.5.1.2. Aurora can deliver up to five times the throughput of MySQL and up to three times the throughput of PostgreSQL without requiring changes to most of your existing applications.

1.7.5.1.3. Aurora has Read Replicas and Multi AZ features

1.7.5.2. Aurora Scaling

1.7.5.2.1. Starts at 10 GB and scales in 10 GB increments up to 64 TB (Storage Autoscaling)

1.7.5.2.2. Compute resources can scale up to

1.7.5.2.3. 2 copies of your data are kept in each Availability Zone, across a minimum of 3 Availability Zones, for 6 copies of your data in total.

1.7.5.2.4. Aurora is designed to transparently handle the loss of up to

1.7.5.2.5. Aurora storage is also self-healing. Data blocks and disks are continuously scanned for errors and repaired automatically.
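The six-copy layout explains Aurora's fault tolerance. Aurora uses a quorum model in which a write needs 4 of the 6 copies and a read needs 3 of the 6, so losing a whole AZ (2 copies) still allows writes, and losing an AZ plus one more copy still allows reads. The Python sketch below only checks those quorums; it ignores repair, timing and replica failover.

```python
COPIES_PER_AZ = 2
AZ_COUNT = 3
TOTAL_COPIES = COPIES_PER_AZ * AZ_COUNT   # 6
WRITE_QUORUM = 4
READ_QUORUM = 3


def available_copies(failed_azs: int = 0, other_failed_copies: int = 0) -> int:
    """Copies still reachable after whole-AZ failures plus individual disk failures."""
    remaining = TOTAL_COPIES - failed_azs * COPIES_PER_AZ - other_failed_copies
    return max(remaining, 0)


def can_write(copies: int) -> bool:
    return copies >= WRITE_QUORUM


def can_read(copies: int) -> bool:
    return copies >= READ_QUORUM


for label, copies in [
    ("healthy", available_copies()),
    ("one AZ lost", available_copies(failed_azs=1)),
    ("one AZ + one copy lost", available_copies(failed_azs=1, other_failed_copies=1)),
]:
    print(f"{label}: {copies} copies, write={can_write(copies)}, read={can_read(copies)}")
```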

1.7.5.3. Aurora Replicas

1.7.5.3.1. 2 Types of Replicas are available.

1.7.5.4. My Exam Tips

1.7.5.4.1. Aurora does support Schema changes.

1.7.5.4.2. Aurora supports up to 64TB of data.

1.7.6. Automated Backups

1.7.6.1. There are two different types of backups for RDS:

1.7.6.1.1. Automated Backups

1.7.6.1.2. Database Snapshots.

1.7.6.2. Restoring Backups

1.7.6.2.1. Whenever you restore either an Automatic Backup or a manual Snapshot, the restored version of the database will be a new RDS instance with a new DNS endpoint.

1.7.6.3. Encryption

1.7.6.3.1. Encryption at rest is supported for

1.7.6.3.2. Encryption is done using AWS Key Management Service (KMS).

1.7.6.3.3. Once your RDS instance is encrypted, the data stored at rest in the underlying storage is encrypted, as are its automated backups, read replicas, and snapshots.

1.7.6.3.4. Encrypting Existing DB Instance

1.7.7. Amazon EMR (Bigdata)

1.7.7.1. Easily Run and Scale Apache Spark, Hadoop, HBase, Presto, Hive, and other Big Data Frameworks

1.7.7.2. Amazon EMR provides a managed Hadoop framework that makes it easy, fast, and cost-effective to process vast amounts of data across dynamically scalable Amazon EC2 instances.

1.7.7.3. You can also run other popular distributed frameworks such as Apache Spark, HBase, Presto, and Flink in EMR,

1.7.7.4. Use cases

1.7.7.4.1. Clickstream Analysis

1.7.7.4.2. Real-Time Analytics

1.7.7.4.3. Log Analysis

1.7.7.4.4. Extract Transform Load (ETL)

1.7.7.4.5. Predictive Analytics

1.7.7.4.6. Genomics

1.7.7.5. EMR is not designed for receiving real-time data from thousands of sources; it is mainly used for big data analysis with Hadoop-ecosystem frameworks.

1.7.8. My Exam Tips

1.7.8.1. If you expect a high concurrency workload that generally involves reading and writing all of the columns for a small number of records at a time, you should instead consider using Amazon RDS or Amazon DynamoDB.

1.7.8.2. DynamoDB vs RDS

1.7.8.2.1. DynamoDB offers "push button" scaling, meaning that you can scale your database on the fly, without any down time.

1.7.8.2.2. Scaling RDS is not as easy: you usually have to move to a bigger instance size or add a read replica.

1.8. Analytics & Search

1.8.1. Kinesis

1.8.1.1. Intro

1.8.1.1.1. Amazon Kinesis is a platform on AWS to send your streaming data to.

1.8.1.1.2. Kinesis makes it easy to load and analyze streaming data, and also lets you build your own custom applications for your business needs.

1.8.1.2. Key Terminology

1.8.1.2.1. Producer

1.8.1.2.2. Consumer

1.8.1.3. What is streaming data

1.8.1.3.1. Streaming Data is data that is generated continuously by thousands of data sources, which typically send in the data records simultaneously, and in small sizes (order of Kilobytes).

1.8.1.3.2. Examples

1.8.1.4. Kinesis Services

1.8.1.4.1. Kinesis Data Streams (KDS)

1.8.1.4.2. Kinesis Firehose

1.8.1.4.3. Kinesis Analytics
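In Kinesis Data Streams, a producer's partition key decides which shard receives a record: the key is hashed with MD5 into a 128-bit integer, and each shard owns a contiguous range of those hash values. This Python sketch assumes the shards split the hash space evenly and uses shard indexes instead of real shard IDs; the partition key is made up.

```python
import hashlib
from typing import List, Tuple

HASH_SPACE = 2 ** 128   # MD5 produces a 128-bit value


def even_shard_ranges(shard_count: int) -> List[Tuple[int, int]]:
    """Split [0, 2^128 - 1] into contiguous, inclusive ranges, one per shard."""
    size = HASH_SPACE // shard_count
    ranges = []
    for i in range(shard_count):
        start = i * size
        end = HASH_SPACE - 1 if i == shard_count - 1 else (i + 1) * size - 1
        ranges.append((start, end))
    return ranges


def shard_for(partition_key: str, ranges: List[Tuple[int, int]]) -> int:
    """Return the index of the shard whose hash range contains the key's MD5."""
    hash_key = int.from_bytes(hashlib.md5(partition_key.encode("utf-8")).digest(), "big")
    for index, (start, end) in enumerate(ranges):
        if start <= hash_key <= end:
            return index
    raise ValueError("hash key outside all shard ranges")


ranges = even_shard_ranges(4)
print(shard_for("sensor-17", ranges))   # the same key always maps to the same shard
```

Because ordering is only guaranteed within a shard, records that must stay in order should share a partition key.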

1.8.1.5. Exam Tips

1.8.1.5.1. Know the difference between Kinesis Streams and Kinesis Firehose. You will be given scenario questions and you must choose the most relevant service.

1.8.1.5.2. Understand what Kinesis Analytics is.

1.8.1.6. Kinesis Streams vs Kinesis Firehose

1.8.1.6.1. Amazon Kinesis Data Firehose can send data to Amazon S3, Amazon Redshift, Amazon Elasticsearch Service, and Splunk. To do the same thing with Amazon Kinesis Data Streams, you would need to write an application that consumes data from the stream and then connects to the destination to store data. So, think of Firehose as a pre-configured streaming application with a few specific options. Anything outside of those options would require you to write your own code.

1.8.1.7. Use Cases

1.8.1.7.1. Build video analytics applications

1.8.1.7.2. Evolve from Batch to Real-time Analytics

1.8.1.7.3. Build Real-time Applications

1.8.1.7.4. Analyze IoT device data

1.8.1.8. Interface VPC Endpoints

1.8.1.8.1. You can use an interface VPC endpoint to keep traffic between your Amazon VPC and Kinesis Data Streams from leaving the Amazon network.

1.8.1.8.2. Interface VPC endpoints don't require an internet gateway, NAT device, VPN connection, or AWS Direct Connect connection.

1.8.1.8.3. Interface VPC endpoints are powered by AWS PrivateLink, an AWS technology that enables private communication between AWS services using an elastic network interface with private IPs in your Amazon VPC

1.8.2. Amazon QuickSight

1.8.2.1. Amazon QuickSight is a fast, cloud-powered business intelligence service that makes it easy to deliver insights to everyone in your organization.

1.8.2.2. As a fully managed service, QuickSight lets you easily create and publish interactive dashboards that include ML Insights.

1.8.2.3. Dashboards can then be accessed from any device, and embedded into your applications, portals, and websites.

1.8.2.4. With Pay-per-Session pricing, QuickSight allows you to give everyone access to the data they need, while only paying for what you use.

1.8.3. AWS Glue (ETL)

1.8.3.1. AWS Glue is a fully managed extract, transform, and load (ETL) service that makes it easy for customers to prepare and load their data for analytics. You can create and run an ETL job with a few clicks in the AWS Management Console.

1.8.3.2. You simply point AWS Glue to your data stored on AWS, and AWS Glue discovers your data and stores the associated metadata (e.g. table definition and schema) in the AWS Glue Data Catalog. Once cataloged, your data is immediately searchable, queryable, and available for ETL.

1.8.3.3. Glue Data Catalog

1.8.3.3.1. The AWS Glue Data Catalog contains references to data that is used as sources and targets of your extract, transform, and load (ETL) jobs in AWS Glue. To create your data warehouse, you must catalog this data.

1.8.3.3.2. The AWS Glue Data Catalog is an index to the location, schema, and runtime metrics of your data. You use the information in the Data Catalog to create and monitor your ETL jobs.

1.8.3.3.3. Typically, you run a crawler to take inventory of the data in your data stores, but there are other ways to add metadata tables into your Data Catalog.

1.8.3.4. Job Bookmarks

1.8.3.4.1. AWS Glue tracks data that has already been processed during a previous run of an ETL job by persisting state information from the job run. This persisted state information is called a job bookmark.

1.8.3.4.2. Job bookmarks help AWS Glue maintain state information and prevent the reprocessing of old data. With job bookmarks, you can process new data when rerunning on a scheduled interval.
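Conceptually, a job bookmark is persisted state that lets each run skip input it has already processed. The Python sketch below models that idea with a set of processed object keys serialized into a dict that stands in for durable storage. It is not the Glue API (in Glue you enable bookmarks with the `--job-bookmark-option job-bookmark-enable` job parameter and the service tracks state for you), and the class, job name and keys are hypothetical.

```python
import json
from typing import Dict, List


class JobBookmark:
    """Remember which inputs a job has already processed, across runs."""

    def __init__(self, store: Dict[str, str], job_name: str):
        self.store = store            # stand-in for durable storage
        self.job_name = job_name
        self.processed = set(json.loads(store.get(job_name, "[]")))

    def new_inputs(self, available: List[str]) -> List[str]:
        return [key for key in available if key not in self.processed]

    def commit(self, keys: List[str]) -> None:
        """Persist state only after the run succeeds."""
        self.processed.update(keys)
        self.store[self.job_name] = json.dumps(sorted(self.processed))


def run_job(store: Dict[str, str], available: List[str]) -> List[str]:
    bookmark = JobBookmark(store, "daily-etl")   # state reloaded on every run
    batch = bookmark.new_inputs(available)
    # ... extract, transform and load `batch` here ...
    bookmark.commit(batch)
    return batch


state: Dict[str, str] = {}
print(run_job(state, ["raw/day1.csv", "raw/day2.csv"]))                  # both files
print(run_job(state, ["raw/day1.csv", "raw/day2.csv", "raw/day3.csv"]))  # only day3
```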

1.8.4. Amazon Athena (SQL, S3)

1.8.4.1. Amazon Athena is an interactive query service that makes it easy to analyze data in Amazon S3 using standard SQL. Athena is serverless, so there is no infrastructure to manage, and you pay only for the queries that you run.

1.8.4.2. Athena is easy to use. Simply point to your data in Amazon S3, define the schema, and start querying using standard SQL. Most results are delivered within seconds. With Athena, there’s no need for complex ETL jobs to prepare your data for analysis. This makes it easy for anyone with SQL skills to quickly analyze large-scale datasets.

1.8.4.3. Pricing

1.8.4.3.1. With Amazon Athena, you only pay for the queries that you run. You are charged based on the amount of data scanned by each query. You can get significant cost savings and performance gains by compressing, partitioning, or converting your data to a columnar format, because each of those operations reduces the amount of data that Athena needs to scan to execute a query.
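Because cost is proportional to data scanned, the savings from compression, partitioning and columnar formats can be estimated with simple arithmetic. This Python sketch assumes a price of $5 per TB scanned, billing rounded up to the nearest megabyte, a 10 MB minimum per query, and binary units for MB and TB; treat all of those as assumptions and check current Athena pricing for your region. The scan sizes are hypothetical.

```python
import math

MB = 1024 ** 2          # assumption: binary units
TB = 1024 ** 4          # assumption: binary units
MIN_BILLED_MB = 10      # assumption: 10 MB minimum per query


def query_cost(bytes_scanned: int, price_per_tb: float = 5.0) -> float:
    """Estimated cost in USD of one query; price_per_tb is an assumption."""
    billed_mb = max(MIN_BILLED_MB, math.ceil(bytes_scanned / MB))
    return billed_mb * MB / TB * price_per_tb


raw_csv = query_cost(1 * TB)           # full scan of uncompressed data
columnar = query_cost(TB // 4)         # hypothetical: only a quarter of the data read
print(raw_csv, columnar)               # 5.0 1.25
```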

1.8.5. Amazon Elasticsearch

1.8.5.1. Amazon Elasticsearch Service is a fully managed service that makes it easy for you to deploy, secure, and operate Elasticsearch at scale with zero downtime.

1.8.5.2. The service offers open-source Elasticsearch APIs,

1.8.6. Amazon CloudSearch

1.8.6.1. Amazon CloudSearch is a managed service in the AWS Cloud that makes it simple and cost-effective to set up, manage, and scale a search solution for your website or application.

1.9. Monitoring and Auditing

1.9.1. AWS Config

1.9.1.1. AWS Config is a service that enables you to assess, audit, and evaluate the configurations of your AWS resources.

1.9.1.2. Config continuously monitors and records your AWS resource configurations and allows you to automate the evaluation of recorded configurations against desired configurations.

1.9.1.3. With Config, you can achieve the following:

1.9.1.3.1. Review changes in configurations and relationships between AWS resources.

1.9.1.3.2. Dive into detailed resource configuration histories.

1.9.1.3.3. Determine your overall compliance against the configurations specified in your internal guidelines.

1.9.1.4. This enables you to simplify compliance auditing, security analysis, change management, and operational troubleshooting.

1.9.2. AWS CloudTrail

1.9.2.1. Intro

1.9.2.1.1. AWS CloudTrail is a service that enables governance, compliance, operational and risk auditing of your AWS account.

1.9.2.1.2. With CloudTrail, you can log, continuously monitor, and retain account activity related to actions across your AWS infrastructure.

1.9.2.1.3. CloudTrail provides event history of your AWS account activity, including actions taken through the AWS Management Console, AWS SDKs, command line tools, and other AWS services.

1.9.2.1.4. This event history simplifies security analysis, resource change tracking, and troubleshooting.

1.9.2.2. Use cases

1.9.2.2.1. Operational Issue Troubleshooting

1.9.2.2.2. Data Exfiltration

1.9.2.2.3. Compliance Aid

1.9.2.2.4. Security Analysis

1.9.2.3. Track user activity and API usage

1.9.2.3.1. CloudTrail is a web service that records AWS API calls for your AWS account and delivers log files to an Amazon S3 bucket.

1.9.2.3.2. The recorded information includes the identity of the user, the start time of the AWS API call, the source IP address, the request parameters, and the response elements returned by the service.

1.9.2.4. Logs Encryption

1.9.2.4.1. By default, the log files delivered by CloudTrail to your bucket are encrypted by Amazon server-side encryption with Amazon S3-managed encryption keys (SSE-S3). To provide a security layer that is directly manageable, you can instead use server-side encryption with AWS KMS–managed keys (SSE-KMS) for your CloudTrail log files.

1.9.2.5. Validating CloudTrail Log File Integrity

1.9.2.5.1. To determine whether a log file was modified, deleted, or unchanged after CloudTrail delivered it, you can use CloudTrail log file integrity validation.

1.9.2.5.2. This feature is built using industry standard algorithms: SHA-256 for hashing and SHA-256 with RSA for digital signing.

1.9.2.5.3. This makes it computationally infeasible to modify, delete or forge CloudTrail log files without detection.
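The hashing half of log file integrity validation can be illustrated with Python's `hashlib`: recompute the SHA-256 of a delivered file and compare it with the hash recorded at delivery time. This is only a sketch. CloudTrail stores those hashes in digest files that are themselves signed with RSA, and that signature check (which the `aws cloudtrail validate-logs` CLI command performs) is not reproduced here. The log content below is made up.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    return hashlib.sha256(data).hexdigest()


def is_unmodified(log_bytes: bytes, recorded_hash_hex: str) -> bool:
    """True if the file still hashes to the value recorded at delivery time."""
    # compare_digest avoids leaking timing information during the comparison.
    return hmac.compare_digest(sha256_hex(log_bytes), recorded_hash_hex)


delivered = b'{"Records": [{"eventName": "DeleteBucket"}]}'
recorded = sha256_hex(delivered)                 # captured at delivery time
tampered = delivered.replace(b"DeleteBucket", b"ListBuckets")
print(is_unmodified(delivered, recorded))   # True
print(is_unmodified(tampered, recorded))    # False
```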

1.9.2.6. Changes to global service event logging can be made via the AWS CLI, not the AWS console.

1.9.3. AWS CloudWatch

1.9.3.1. Intro

1.9.3.1.1. Amazon CloudWatch is a monitoring and management service built for developers, system operators, site reliability engineers (SRE), and IT managers.

1.9.3.1.2. CloudWatch provides you with data and actionable insights to monitor your applications, understand and respond to system-wide performance changes, optimize resource utilization, and get a unified view of operational health.

1.9.3.2. How it works

1.9.3.3. Use Cases

1.9.3.3.1. Infrastructure monitoring and troubleshooting

1.9.3.3.2. Resource optimization

1.9.3.3.3. Application monitoring

1.9.3.3.4. Log analytics

1.9.3.4. Alarms

1.9.3.4.1. You can create a CloudWatch alarm that watches a single CloudWatch metric or the result of a math expression based on CloudWatch metrics.

1.9.3.4.2. The alarm performs one or more actions based on the value of the metric or expression relative to a threshold over a number of time periods.

1.9.3.4.3. The action can be an Amazon EC2 action, an Amazon EC2 Auto Scaling action, or a notification sent to an Amazon SNS topic.
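The "threshold over a number of time periods" rule can be sketched as an M-out-of-N check: the alarm goes to ALARM when at least M of the last N datapoints breach the threshold. The comparison operator names below are the ones CloudWatch uses, but the evaluation is simplified (INSUFFICIENT_DATA is reported only when fewer than N datapoints exist, and there is no treat-missing-data setting), and the CPU values are hypothetical.

```python
import operator
from typing import List

COMPARISONS = {
    "GreaterThanThreshold": operator.gt,
    "GreaterThanOrEqualToThreshold": operator.ge,
    "LessThanThreshold": operator.lt,
    "LessThanOrEqualToThreshold": operator.le,
}


def evaluate_alarm(datapoints: List[float], threshold: float, comparison: str,
                   evaluation_periods: int, datapoints_to_alarm: int) -> str:
    """Return OK, ALARM or INSUFFICIENT_DATA for the most recent N periods."""
    if len(datapoints) < evaluation_periods:
        return "INSUFFICIENT_DATA"
    window = datapoints[-evaluation_periods:]
    breaches = sum(1 for value in window if COMPARISONS[comparison](value, threshold))
    return "ALARM" if breaches >= datapoints_to_alarm else "OK"


cpu = [40.0, 85.0, 90.0, 70.0, 95.0]   # one datapoint per period
print(evaluate_alarm(cpu, 80, "GreaterThanThreshold", 5, 3))  # ALARM (3 of 5 breach)
print(evaluate_alarm(cpu, 80, "GreaterThanThreshold", 5, 4))  # OK
```

In a real alarm, the resulting state change is what triggers the EC2, Auto Scaling or SNS action listed above.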

1.9.3.5. Events

1.9.3.5.1. Amazon CloudWatch Events delivers a near real-time stream of system events that describe changes in Amazon Web Services (AWS) resources.

1.9.3.5.2. Using simple rules that you can quickly set up, you can match events and route them to one or more target functions or streams.

1.9.3.5.3. CloudWatch Events becomes aware of operational changes as they occur. CloudWatch Events responds to these operational changes and takes corrective action as necessary, by sending messages to respond to the environment, activating functions, making changes, and capturing state information.

1.9.3.5.4. Example

1.9.3.5.5. You can configure the following AWS services as targets for CloudWatch Events:

1.9.3.6. Rules

1.9.3.6.1. A rule matches incoming events and routes them to targets for processing. A single rule can route to multiple targets, all of which are processed in parallel. Rules are not processed in a particular order. This enables different parts of an organization to look for and process the events that are of interest to them. A rule can customize the JSON sent to the target, by passing only certain parts or by overwriting it with a constant.
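Rule matching works by comparing an event pattern with the incoming JSON event: every field named in the pattern must be present in the event, and the event's value must equal one of the values listed in the pattern's array. This Python sketch implements only that exact-match subset (no prefix, numeric or anything-but filters) and then fans a matching event out to every target of every matching rule, mirroring the "single rule, multiple targets" behaviour. Rule and target names are hypothetical; the event shape follows an EC2 instance state-change notification.

```python
from typing import Any, Dict, List


def matches(pattern: Dict[str, Any], event: Dict[str, Any]) -> bool:
    """Exact-match subset of event pattern semantics."""
    for field, expected in pattern.items():
        if field not in event:
            return False
        actual = event[field]
        if isinstance(expected, dict):            # nested pattern, e.g. "detail"
            if not isinstance(actual, dict) or not matches(expected, actual):
                return False
        else:                                      # array of allowed values
            candidates = actual if isinstance(actual, list) else [actual]
            if not any(value in expected for value in candidates):
                return False
    return True


def route(rules: Dict[str, Dict[str, Any]], event: Dict[str, Any]) -> List[str]:
    """Collect the targets of all matching rules (rules are not processed in order)."""
    targets: List[str] = []
    for rule in rules.values():
        if matches(rule["pattern"], event):
            targets.extend(rule["targets"])
    return targets


rules = {
    "instance-stopped": {
        "pattern": {
            "source": ["aws.ec2"],
            "detail-type": ["EC2 Instance State-change Notification"],
            "detail": {"state": ["stopped", "terminated"]},
        },
        "targets": ["notify-ops-sns-topic", "cleanup-lambda"],
    },
}
event = {
    "source": "aws.ec2",
    "detail-type": "EC2 Instance State-change Notification",
    "detail": {"instance-id": "i-0123456789abcdef0", "state": "terminated"},
}
print(route(rules, event))   # ['notify-ops-sns-topic', 'cleanup-lambda']
```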

1.9.3.7. Targets

1.9.3.7.1. A target processes events. Targets can include Amazon EC2 instances, AWS Lambda functions, Kinesis streams, Amazon ECS tasks, Step Functions state machines, Amazon SNS topics, Amazon SQS queues, and built-in targets. A target receives events in JSON format.

1.9.3.8. Metrics

1.9.3.8.1. A metric represents a time-ordered set of data points that are published to CloudWatch. Think of a metric as a variable to monitor, and the data points as representing the values of that variable over time. For example, the CPU usage of a particular EC2 instance is one metric provided by Amazon EC2. The data points themselves can come from any application or business activity from which you collect data.

1.9.3.8.2. AWS services send metrics to CloudWatch, and you can send your own custom metrics to CloudWatch. You can add the data points in any order, and at any rate you choose. You can retrieve statistics about those data points as an ordered set of time-series data.

1.9.3.8.3. Metrics exist only in the region in which they are created.
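Because data points can be added in any order but are read back as ordered time-series statistics, a rough model is to bucket them by period and compute SampleCount, Sum, Average, Minimum and Maximum per bucket. The function `get_statistics` here is hypothetical and only mimics the idea. It is not the CloudWatch API.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def get_statistics(points: List[Tuple[int, float]], period: int) -> List[Dict[str, float]]:
    """Bucket (timestamp_seconds, value) points into `period`-second buckets.

    Points may arrive in any order; the result is sorted by bucket start time.
    """
    buckets: Dict[int, List[float]] = defaultdict(list)
    for timestamp, value in points:
        buckets[timestamp - timestamp % period].append(value)

    result = []
    for start in sorted(buckets):
        values = buckets[start]
        result.append({
            "Timestamp": start,
            "SampleCount": len(values),
            "Sum": sum(values),
            "Average": sum(values) / len(values),
            "Minimum": min(values),
            "Maximum": max(values),
        })
    return result


# Data points published out of order: (timestamp in seconds, value)
points = [(125, 30.0), (10, 50.0), (70, 20.0), (65, 40.0), (5, 10.0)]
for row in get_statistics(points, period=60):
    print(row)
```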

1.9.4. CloudTrail vs CloudWatch

1.9.4.1. CloudTrail

1.9.4.1.1. CloudTrail is used to audit changes to services: it records the API calls made in your account (who did what, and when).

1.9.4.1.2. Typical question it answers: how did an unauthorized user gain access to an S3 bucket?

1.9.4.2. CloudWatch

1.9.4.2.1. CloudWatch is mostly used to monitor operational health and performance.

1.9.4.2.2. Can also provide automation via Rules which respond to state changes

1.9.4.2.3. Examples

1.9.5. Amazon Inspector

1.9.5.1. Amazon Inspector is an automated security assessment service that helps improve the security and compliance of applications deployed on AWS.

1.9.5.2. Amazon Inspector automatically assesses applications for exposure, vulnerabilities, and deviations from best practices.

1.9.5.3. After performing an assessment, Amazon Inspector produces a detailed list of security findings prioritized by level of severity. These findings can be reviewed directly or as part of detailed assessment reports which are available via the Amazon Inspector console or API.

1.9.6. AWS X-Ray

1.9.6.1. Intro

1.9.6.1.1. AWS X-Ray helps developers analyze and debug production, distributed applications, such as those built using a microservices architecture.

1.9.6.1.2. With X-Ray, you can understand how your application and its underlying services are performing to identify and troubleshoot the root cause of performance issues and errors.

1.9.6.1.3. X-Ray provides an end-to-end view of requests as they travel through your application, and shows a map of your application’s underlying components.

1.9.6.1.4. You can use X-Ray to analyze both applications in development and in production, from simple three-tier applications to complex microservices applications consisting of thousands of services.

1.9.6.2. Sampling

1.9.6.2.1. To ensure efficient tracing and provide a representative sample of the requests that your application serves, the X-Ray SDK applies a sampling algorithm to determine which requests get traced.

1.9.6.2.2. By default, the X-Ray SDK records the first request each second, and five percent of any additional requests.

1.9.6.2.3. To avoid incurring service charges when you are getting started, the default sampling rate is conservative. You can configure X-Ray to modify the default sampling rule and configure additional rules that apply sampling based on properties of the service or request.

1.10. Application Integration

1.10.1. SQS

1.10.1.1. Intro

1.10.1.1.1. Amazon SQS is a web service that gives you access to a message queue that can be used to store messages while waiting for a computer to process them.

1.10.1.1.2. Amazon SQS is a distributed queue system that enables web service applications to quickly and reliably queue messages that one component in the application generates to be consumed by another component. A queue is a temporary repository for messages that are awaiting processing.

1.10.1.1.3. Using Amazon SQS, you can decouple the components of an application so they run independently, easing message management between components.

1.10.1.1.4. Any component of a distributed application can store messages in the queue. Messages can contain up to 256 KB of text in any format. Any component can later retrieve the messages programmatically using the Amazon SQS API.

1.10.1.1.5. The queue acts as a buffer between the component producing and saving data, and the component receiving the data for processing. This means the queue resolves issues that arise if the producer is producing work faster than the consumer can process it, or if the producer or consumer is only intermittently connected to the network.

1.10.1.2. Types

1.10.1.2.1. Standard Queues (default)

1.10.1.2.2. FIFO Queues (First-in-First-out)

1.10.1.3. SQS Key Facts

1.10.1.3.1. SQS is pull-based, not push-based

1.10.1.3.2. Messages can be up to 256 KB in size

1.10.1.3.3. SQS guarantees that your messages will be delivered at least once.

1.10.1.3.4. Messages can be kept in the queue from 1 minute to 14 days

1.10.1.3.5. Default retention period is 4 days

1.10.1.4. SQS Visibility Timeout

1.10.1.4.1. The Visibility Timeout is the amount of time that the message is invisible in the SQS queue after a reader picks up that message.

1.10.1.4.2. Provided the job is processed and the reader deletes the message before the visibility timeout expires, the message is removed from the queue.

1.10.1.4.3. If the job is not processed within that time the message will become visible again and another reader will process it. This could result in the same message being delivered twice.

1.10.1.4.4. Default Visibility Timeout is 30 seconds

1.10.1.4.5. Increase it if your task takes >30 seconds

1.10.1.4.6. Maximum is 12 hours
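The visibility-timeout behaviour can be modelled with an in-memory queue. This is a toy: `ToyQueue` is hypothetical, receipts are simply message IDs (real SQS issues a new receipt handle per receive) and time is passed in explicitly. It shows why a message that is not deleted before the timeout expires gets delivered a second time.

```python
import itertools
from dataclasses import dataclass
from typing import Dict, Optional, Tuple


@dataclass
class _Message:
    body: str
    invisible_until: float = 0.0


class ToyQueue:
    """In-memory model of SQS visibility-timeout semantics (not the SQS API)."""

    def __init__(self, visibility_timeout: float = 30.0) -> None:
        self.visibility_timeout = visibility_timeout
        self._messages: Dict[int, _Message] = {}
        self._ids = itertools.count()

    def send(self, body: str) -> None:
        self._messages[next(self._ids)] = _Message(body)

    def receive(self, now: float) -> Optional[Tuple[int, str]]:
        """Return (receipt, body) of the first visible message and hide it."""
        for msg_id, msg in self._messages.items():
            if msg.invisible_until <= now:
                msg.invisible_until = now + self.visibility_timeout
                return msg_id, msg.body
        return None

    def delete(self, receipt: int) -> None:
        """The consumer must delete the message once processing succeeds."""
        self._messages.pop(receipt, None)


q = ToyQueue(visibility_timeout=30)
q.send("resize image 42")
first = q.receive(now=0)       # reader A picks the message up
print(q.receive(now=10))       # None: still invisible
second = q.receive(now=31)     # A never deleted it, so reader B receives it
print(first == second)         # True: same message delivered twice
q.delete(second[0])
print(q.receive(now=100))      # None: deleted for good
```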

1.10.1.5. Message Group ID

1.10.1.5.1. Messages that belong to the same message group are processed one by one, in strict order, based on their message group ID. Using different message group IDs lets multiple consumers process messages in FIFO order per group, e.g. keeping the messages for each user's trading session in order.
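A toy model of message groups, assuming at most one message per group is in flight at a time: order is strict inside each group, while different groups (here, different users' trades) can be consumed in parallel. `ToyFifoQueue` is hypothetical and ignores visibility timeouts.

```python
from collections import deque
from typing import Deque, Dict, Optional, Set, Tuple


class ToyFifoQueue:
    """Within one group, the next message is handed out only after the previous
    one has been deleted. Different groups are independent."""

    def __init__(self) -> None:
        self._groups: Dict[str, Deque[str]] = {}
        self._in_flight: Set[str] = set()

    def send(self, group_id: str, body: str) -> None:
        self._groups.setdefault(group_id, deque()).append(body)

    def receive(self) -> Optional[Tuple[str, str]]:
        for group_id, messages in self._groups.items():
            if messages and group_id not in self._in_flight:
                self._in_flight.add(group_id)  # block this group until deleted
                return group_id, messages[0]
        return None

    def delete(self, group_id: str) -> None:
        """Acknowledge the head message of the group, unblocking the next one."""
        self._groups[group_id].popleft()
        self._in_flight.discard(group_id)


q = ToyFifoQueue()
q.send("user-alice", "buy 10 AAPL")
q.send("user-alice", "sell 5 AAPL")
q.send("user-bob", "buy 3 MSFT")
print(q.receive())   # ('user-alice', 'buy 10 AAPL')
print(q.receive())   # ('user-bob', 'buy 3 MSFT'): alice's next trade waits
print(q.receive())   # None: both groups have a message in flight
q.delete("user-alice")
print(q.receive())   # ('user-alice', 'sell 5 AAPL')
```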

1.10.1.6. Deduplication ID

1.10.1.6.1. The message deduplication ID is the token used for deduplication of sent messages.

1.10.1.6.2. If a message with a particular message deduplication ID is sent successfully, any messages sent with the same message deduplication ID are accepted successfully but aren't delivered during the 5-minute deduplication interval.
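A sketch of the deduplication window, assuming a send is identified only by its deduplication ID: repeats inside the 5-minute interval are accepted but not enqueued again. `DedupFilter` is a hypothetical helper, not part of any SDK.

```python
from typing import Dict, List

DEDUP_INTERVAL_SECONDS = 5 * 60


class DedupFilter:
    """Model of SQS FIFO deduplication: a send whose deduplication ID was first
    seen less than `interval` seconds ago succeeds but is not delivered."""

    def __init__(self, interval: float = DEDUP_INTERVAL_SECONDS) -> None:
        self.interval = interval
        self._first_seen: Dict[str, float] = {}
        self.delivered: List[str] = []

    def send(self, dedup_id: str, body: str, now: float) -> bool:
        seen_at = self._first_seen.get(dedup_id)
        if seen_at is None or now - seen_at >= self.interval:
            self._first_seen[dedup_id] = now
            self.delivered.append(body)
        return True  # the send call reports success either way


f = DedupFilter()
f.send("order-1", "pay", now=0)
f.send("order-1", "pay", now=10)    # retry within 5 minutes: accepted, not delivered
print(f.delivered)                   # ['pay']
f.send("order-1", "pay", now=301)   # outside the interval: delivered again
print(f.delivered)                   # ['pay', 'pay']
```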

1.10.1.7. My Exam Tips

1.10.1.7.1. The ideal approach is to scale the instances based on the size of the queue.
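One way to size a fleet from the queue, along the lines of AWS's "backlog per instance" guidance: divide the visible backlog (e.g. the `ApproximateNumberOfMessagesVisible` metric) by the backlog one instance can clear within an acceptable latency. The function `desired_instances` and its min/max defaults are hypothetical.

```python
import math


def desired_instances(visible_messages: int, seconds_per_message: float,
                      acceptable_latency_seconds: float,
                      min_instances: int = 1, max_instances: int = 20) -> int:
    """Number of consumers needed so no instance holds more backlog than it can
    clear within the acceptable latency, clamped to [min_instances, max_instances]."""
    backlog_per_instance = acceptable_latency_seconds / seconds_per_message
    needed = math.ceil(visible_messages / backlog_per_instance)
    return max(min_instances, min(max_instances, needed))


# 450 messages, 2 s each, and a 60 s latency target: 30 messages per instance
print(desired_instances(450, seconds_per_message=2.0, acceptable_latency_seconds=60.0))
```

With these numbers the model asks for 15 instances; an empty queue falls back to the minimum, and a huge backlog is capped at the maximum.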

1.10.1.8. Polling Types

1.10.1.8.1. Long Polling

1.10.1.8.2. Short Polling

1.10.1.9. Exam Tips

1.10.1.9.1. SQS is a distributed message queueing system

1.10.1.9.2. Allows you to decouple the components of an application so that they are independent

1.10.1.9.3. Pull-based, not push-based

1.10.1.9.4. Standard Queues (default) - best-effort ordering; message delivered at least once

1.10.1.9.5. FIFO Queues (First In First Out) - ordering strictly preserved; message delivered exactly once, no duplicates; e.g. good for banking transactions which need to happen in strict order

1.10.2. SWF

1.10.2.1. Intro

1.10.2.1.1. Amazon Simple Workflow Service (Amazon SWF) is a web service that makes it easy to coordinate work across distributed application components.

1.10.2.1.2. Amazon SWF enables applications for a range of use cases, including

1.10.2.1.3. Tasks represent invocations of various processing steps in an application which can be performed by executable code, web service calls, human actions, and scripts.

1.10.2.1.4. A workflow execution can last up to 1 year, and SWF timeout values are always specified in seconds.

1.10.2.2. SWF Workers

1.10.2.2.1. Workers are programs that interact with Amazon SWF to get tasks, process received tasks, and return the results.

1.10.2.3. SWF Decider

1.10.2.3.1. The decider is a program that controls the coordination of tasks, i.e. their ordering, concurrency and scheduling according to the application logic.

1.10.2.4. SWF Workers & Deciders

1.10.2.4.1. The workers and the decider can run on cloud infrastructure, such as Amazon EC2, or on machines behind firewalls. Amazon SWF brokers the interactions between workers and the decider. It allows the decider to get consistent views into the progress of tasks and to initiate new tasks in an ongoing manner.

1.10.2.4.2. At the same time, Amazon SWF stores tasks, assigns them to workers when they are ready, and monitors their progress. It ensures that a task is assigned only once and is never duplicated. Since Amazon SWF maintains the application's state durably, workers and deciders don't have to keep track of execution state. They can run independently, and scale quickly.
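A toy model of the worker/decider split, assuming a fixed three-step order workflow: the decider is stateless and picks the next task purely from the execution history, which the "service" loop keeps, and each task is run by exactly one worker. All names are hypothetical; this is not the SWF API.

```python
from typing import Callable, Dict, List, Optional

# Hypothetical activity implementations (the "workers")
ACTIVITIES: Dict[str, Callable[[str], str]] = {
    "validate_order": lambda order: f"{order}:validated",
    "charge_card": lambda order: f"{order}:charged",
    "ship": lambda order: f"{order}:shipped",
}
STEPS = ["validate_order", "charge_card", "ship"]


def decide(history: List[str]) -> Optional[str]:
    """Decider: choose the next activity from the completed history, or None when done."""
    completed = len(history)
    return STEPS[completed] if completed < len(STEPS) else None


def run_workflow(order: str) -> List[str]:
    """Stand-in for the SWF service: it stores the history, asks the decider
    what to do next and hands each task to a single worker."""
    history: List[str] = []   # state lives here, not in workers or the decider
    results: List[str] = []
    while True:
        task = decide(history)
        if task is None:
            return results
        results.append(ACTIVITIES[task](order))  # exactly one worker runs the task
        history.append(task)


print(run_workflow("order-7"))
```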

1.10.2.5. SWF Domains

1.10.2.5.1. Your workflow and activity types and the workflow execution itself are all scoped to a domain.

1.10.2.5.2. Domains isolate a set of types, executions, and task lists from others within the same account.

1.10.2.5.3. You can register a domain by using the AWS Management Console or by using the RegisterDomain action in the Amazon SWF API.

1.10.2.6. SWF vs SQS

1.10.2.6.1. Amazon SWF presents a task-oriented API, whereas Amazon SQS offers a message-oriented API.

1.10.2.6.2. Amazon SWF ensures that a task is assigned only once and is never duplicated. With Amazon SQS, you need to handle duplicated messages and may also need to ensure that a message is processed only once.

1.10.2.6.3. Amazon SWF keeps track of all the tasks and events in an application. With Amazon SQS, you need to implement your own application-level tracking, especially if your application uses multiple queues.

1.10.3. SNS

1.10.3.1. Intro

1.10.3.1.1. Amazon Simple Notification Service (Amazon SNS) is a web service that makes it easy to set up, operate, and send notifications from the cloud.

1.10.3.1.2. It provides developers with a highly scalable, flexible, and cost-effective capability to publish messages from an application and immediately deliver them to subscribers or other applications.

1.10.3.1.3. Provides instantaneous, push-based delivery (no polling) of notifications to

1.10.3.1.4. Amazon SNS can also deliver notifications by SMS text message or email, to

1.10.3.2. SNS vs SQS

1.10.3.2.1. Both Messaging Services in AWS

1.10.3.2.2. SNS - Push

1.10.3.2.3. SQS - Polls (Pulls)

1.10.3.3. SNS Pricing

1.10.3.3.1. Users pay $0.50 per 1 million Amazon SNS requests

1.10.3.3.2. $0.06 per 100,000 Notification deliveries over HTTP

1.10.3.3.3. $0.75 per 100 Notification deliveries over SMS

1.10.3.3.4. $2.00 per 100,000 Notification deliveries over Email
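A worked cost estimate using the rates listed above, assuming the first rate is per 1 million requests, ignoring the free tier, and treating the rates as illustrative (SNS prices vary by region and change over time). `Decimal` avoids floating-point rounding in the money arithmetic; `sns_monthly_cost` is a hypothetical helper.

```python
from decimal import Decimal

# Rates as listed in these notes (USD); real prices may differ.
PRICE_PER_MILLION_REQUESTS = Decimal("0.50")
PRICE_PER_100K_HTTP = Decimal("0.06")
PRICE_PER_100_SMS = Decimal("0.75")
PRICE_PER_100K_EMAIL = Decimal("2.00")


def sns_monthly_cost(requests: int, http: int, sms: int, email: int) -> Decimal:
    """Linear cost estimate from request and delivery counts (no free tier)."""
    return (
        Decimal(requests) / 1_000_000 * PRICE_PER_MILLION_REQUESTS
        + Decimal(http) / 100_000 * PRICE_PER_100K_HTTP
        + Decimal(sms) / 100 * PRICE_PER_100_SMS
        + Decimal(email) / 100_000 * PRICE_PER_100K_EMAIL
    )


print(sns_monthly_cost(requests=2_000_000, http=500_000, sms=200, email=100_000))
```

The total breaks down as $1.00 (requests) + $0.30 (HTTP) + $1.50 (SMS) + $2.00 (email) = $4.80, which shows how quickly SMS dominates compared with HTTP deliveries.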

1.10.3.4. SNS Topics

1.10.3.4.1. Amazon SNS provides topics for high-throughput, push-based, many-to-many messaging. Using Amazon SNS topics, your publisher systems can fan out messages to a large number of subscriber endpoints for parallel processing.

1.10.3.4.2. SNS allows you to group multiple recipients using topics.

1.10.3.4.3. A topic is an "access point" for allowing recipients to dynamically subscribe for identical copies of the same notification.

1.10.3.4.4. One topic can support deliveries to multiple endpoint types; for example, you can group together iOS, Android and SMS recipients.

1.10.3.4.5. When you publish once to a topic, SNS delivers appropriately formatted copies of your message to each subscriber.

1.10.3.4.6. To prevent messages from being lost, all messages published to Amazon SNS are stored redundantly across multiple Availability Zones.
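A toy model of topic fan-out: one publish call delivers a separately formatted copy to each subscriber, here plain text for an SMS recipient and JSON for a queue. `ToyTopic` and the `FORMATTERS` table are hypothetical and only illustrate the idea. This is not the SNS API.

```python
import json
from typing import Callable, Dict, List, Tuple

Message = Dict[str, str]

# Hypothetical per-protocol formatting: people get plain text, programs get JSON.
FORMATTERS: Dict[str, Callable[[Message], str]] = {
    "sms": lambda msg: msg["default"],
    "email": lambda msg: msg["default"],
    "sqs": lambda msg: json.dumps(msg),
}


class ToyTopic:
    """In-memory stand-in for a topic: publish once, every subscriber gets a copy."""

    def __init__(self, name: str) -> None:
        self.name = name
        self._subscriptions: List[Tuple[str, Callable[[str], None]]] = []

    def subscribe(self, protocol: str, deliver: Callable[[str], None]) -> None:
        self._subscriptions.append((protocol, deliver))

    def publish(self, message: Message) -> int:
        """Deliver a formatted copy to each subscriber; return the number of deliveries."""
        for protocol, deliver in self._subscriptions:
            deliver(FORMATTERS[protocol](message))
        return len(self._subscriptions)


phone: List[str] = []
queue: List[str] = []
topic = ToyTopic("order-events")
topic.subscribe("sms", phone.append)
topic.subscribe("sqs", queue.append)
topic.publish({"default": "Order 42 shipped", "orderId": "42"})
print(phone)   # ['Order 42 shipped']
print(queue)   # one JSON string containing the full message
```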

1.11. Developer Tools

1.11.1. AWS CodeBuild

1.11.1.1. AWS CodeBuild is a fully managed CONTINUOUS INTEGRATION service that compiles source code, runs tests, and produces software packages that are ready to deploy.

1.11.1.2. With CodeBuild, you don’t need to provision, manage, and scale your own build servers.