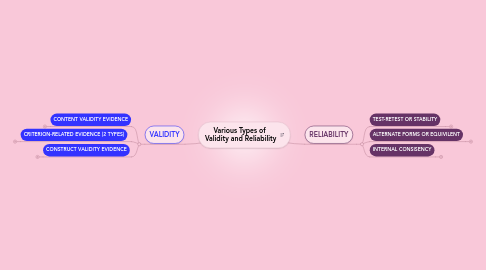

1. VALIDITY

1.1. CONTENT VALIDITY EVIDENCE

1.1.1. Significance in learning and assessment: examines test questions to determine whether they correspond to what the test user decides the test should cover.

1.2. CRITERION-RELATED EVIDENCE (2 TYPES)

1.2.1. Significance of Concurrent Criterion-Related Evidence

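1.2.1.1. yields a numeric value, a correlation (validity) coefficient, between test scores and a criterion measure obtained at about the same time. For example, some college students believe they can save time and money by scoring high on a College-Level Examination Program (CLEP) exam and still receive the same amount of credit instead of sitting through the course; concurrent evidence would show that CLEP scores correlate with the achievement of students who do take the course.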
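A concurrent validity coefficient can be sketched as an ordinary Pearson correlation between test scores and a criterion collected at about the same time. This is a minimal Python sketch under stated assumptions: the `pearson_r` helper is illustrative, and the CLEP scores and course grades are hypothetical data, not from the source.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least 2 scores")
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    # Sum of cross-products of deviations and the two sums of squares
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cov / sqrt(ss_x * ss_y)

# Hypothetical data: CLEP scores and final course grades (0-100)
# for the same five students, gathered at about the same time.
clep_scores = [50, 55, 60, 65, 70]
course_grades = [70, 74, 80, 85, 88]

r = pearson_r(clep_scores, course_grades)
print(f"concurrent validity coefficient r = {r:.2f}")
```

A coefficient near +1 would support using CLEP scores in place of course achievement; a low coefficient would not.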

1.2.2. Significance of Predictive Validity Evidence

1.2.2.1. most useful and important for aptitude ("skill") tests, because it shows how well test scores predict the future behavior or performance of examinees. For example, students' SAT II scores can be compared with the grades they later earn in college to determine whether the test predicts which students can handle a college curriculum.
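Predictive evidence uses the same kind of correlation, except that the criterion is collected later. The sketch below assumes hypothetical SAT II scores and first-year college GPAs; it computes the predictive validity coefficient and an ordinary least-squares line that could estimate a later GPA from a new score. The `fit_line` name and all data are illustrative assumptions.

```python
from math import sqrt

def fit_line(x, y):
    """Return (intercept, slope, r) for an ordinary least-squares fit of y on x."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    r = sxy / sqrt(sxx * syy)  # predictive validity coefficient
    return intercept, slope, r

# Hypothetical: test scores at admission, GPA earned a year later.
sat_ii = [500, 550, 600, 650, 700]
first_year_gpa = [2.5, 2.6, 3.1, 3.2, 3.6]

intercept, slope, r = fit_line(sat_ii, first_year_gpa)
predicted_gpa = intercept + slope * 620  # estimate for a new applicant scoring 620
print(f"r = {r:.2f}, predicted GPA for 620 = {predicted_gpa:.2f}")
```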

1.3. CONSTRUCT VALIDITY EVIDENCE

1.3.1. Significance in learning and assessment: validity evidence is established when a test measures the underlying construct it is supposed to measure, using a "logical explanation or rationale that can account for the interrelationships among a set of variables" (Borich & Kubiszyn, 2010). For example, if students are learning a lesson in basic algebra relating to arithmetic expressions and formulas, an interrelated variable may include testing the academic

2. RELIABILITY

2.1. TEST-RETEST OR STABILITY

2.1.1. The same test is administered to the same group on two different occasions.

2.1.1.1. Significance in learning and assessment

2.1.1.1.1. Results from the two administrations give test makers or administrators an estimate of the test's reliability: the correlation between the two sets of scores serves as the reliability coefficient.

2.2. ALTERNATE FORMS OR EQUIVALENT

2.2.1. Students are administered two different but equivalent versions of the same test.

2.2.1.1. Significance in learning and assessment

2.2.1.1.1. Teachers can observe how students' scores on the two forms are correlated across the interval of time between administrations.

2.3. INTERNAL CONSISTENCY

2.3.1. When a test is designed to assess a single concept, its items should be related to one another, so the test must be internally consistent. For example, a basic math test on a single operation such as addition, subtraction, multiplication, or division.

2.3.1.1. Split-half Methods

2.3.1.1.1. Also referred to as odd-even reliability: the items within the test are split in half (odd-numbered and even-numbered items), and the scores on the two halves are then correlated.

2.3.1.2. Kuder-Richardson

2.3.1.2.1. "measures the extent to which items within one form of the test have as much in common with one another as do the items in that one form with corresponding items in an equivalent form" (Borich & Kubiszyn, 2010, p. 344).
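For items scored right/wrong (1/0), Kuder-Richardson Formula 20 (KR-20), one of the Kuder-Richardson formulas, estimates internal consistency as KR-20 = (k / (k - 1)) * (1 - Σpq / σ²), where k is the number of items, p is the proportion of students answering an item correctly, q = 1 - p, and σ² is the variance of total scores. This is a minimal sketch with hypothetical data; it assumes population variance for σ² (some texts use the sample variance) and does not guard against a single-item test or zero score variance.

```python
def kr20(item_scores):
    """Kuder-Richardson Formula 20 for dichotomously scored (0/1) items.

    item_scores: one list per student with a 0/1 score for each item.
    """
    n_students = len(item_scores)
    k = len(item_scores[0])  # number of items
    totals = [sum(row) for row in item_scores]
    mean_total = sum(totals) / n_students
    # Population variance of students' total scores
    var_total = sum((t - mean_total) ** 2 for t in totals) / n_students
    # Sum of p * q over items (p = proportion correct, q = 1 - p)
    pq_sum = 0.0
    for j in range(k):
        p = sum(row[j] for row in item_scores) / n_students
        pq_sum += p * (1 - p)
    return (k / (k - 1)) * (1 - pq_sum / var_total)

# Hypothetical responses: six students (rows) by six items (columns).
responses = [
    [1, 1, 1, 1, 1, 1],
    [1, 1, 1, 1, 0, 1],
    [1, 0, 1, 1, 0, 0],
    [0, 1, 0, 0, 1, 0],
    [0, 0, 0, 1, 0, 0],
    [0, 0, 0, 0, 0, 0],
]

print(f"KR-20 = {kr20(responses):.2f}")
```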