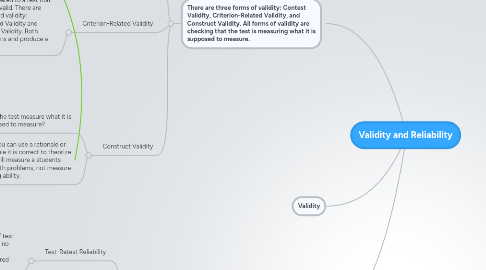

1. There are three forms of validity: Content Validity, Criterion-Related Validity, and Construct Validity. All three check whether the test measures what it is supposed to measure.

1.1. Content Validity

1.1.1. Do the test items match the instructional objectives?

1.1.2. Evidence of content validity cannot be computed with an equation and does not produce a numerical value.

1.2. Criterion-Related Validity

1.2.1. Scores from a test are compared with scores from a test that has already been established as valid. There are two types of criterion-related validity: Concurrent Criterion-Related Validity and Predictive Criterion-Related Validity. Both types use equations and produce a numerical value.

1.2.1.1. Concurrent Criterion-Related Validity

1.2.1.1.1. How valid is a test when compared with a test already proven to be valid?

1.2.1.1.2. Determined by comparing scores on the new test with scores on a valid test. Both tests are administered to the same set of students within a relatively short period of time.

1.2.1.2. Predictive Criterion-Related Validity

1.2.1.2.1. How well does the test predict future behavior of test takers?

1.2.1.2.2. The procedure is to administer the test that is supposed to predict a future outcome or behavior, wait for a period of time (the length depends on what the test is trying to predict), and then measure the students on the predicted outcome or behavior.
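
The outline says both types of criterion-related validity use equations and produce a numerical value, but it does not name the equation. A common choice is the Pearson correlation between the two sets of scores; the sketch below assumes that choice. The function name `pearson_r` and all scores are hypothetical, made up for illustration. For concurrent validity the second list would hold scores on the established test; for predictive validity it would hold the later outcome measure.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two equal-length lists with at least two scores")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    # Sum of cross-products and sums of squared deviations from the means
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    if ss_x == 0 or ss_y == 0:
        raise ValueError("scores must vary for a correlation to be defined")
    return cross / sqrt(ss_x * ss_y)

# Hypothetical scores for the same five students (illustrative data only)
new_test = [72, 85, 90, 65, 78]          # test being validated
established_test = [70, 88, 94, 60, 75]  # criterion: already-valid test

validity_coefficient = pearson_r(new_test, established_test)
print(round(validity_coefficient, 2))
```

A coefficient close to 1 means students rank similarly on both measures; a coefficient near 0 means the new test tells you little about the criterion.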

1.3. Construct Validity

1.3.1. Does the test measure what it is supposed to measure?

1.3.2. To assess this, you can use a rationale or theory. For example, it is reasonable to theorize that a math test will measure a student's ability to solve math problems, not a student's reading ability.

2. Validity

3. Reliability

3.1. There are three forms of reliability: Test-Retest Reliability, Alternate Forms, and Internal Consistency. All forms of reliability check whether test scores remain reasonably unchanged across multiple administrations (assuming the item or subject being measured has not changed).

3.1.1. Test-Retest Reliability

3.1.1.1. The test is administered to a small group of test takers. After a short wait with no further instruction or review of the tested material, the same test is administered again. The two sets of scores are compared.

3.1.1.1.1. Caution: the longer the wait between the two administrations, the lower the test-retest estimate tends to be.

3.1.2. Alternate Forms

3.1.2.1. Two alternate (equivalent) forms of a test are given to a small group of test takers. A very short waiting period is used (e.g., one form in the morning and the second in the afternoon). The scores on the two forms are compared.

3.1.3. Internal Consistency

3.1.3.1. There are two forms of internal consistency: split-half (or odd-even) and item-total correlations. These should only be used when the test measures a single, unified attribute.

3.1.3.1.1. In the split-half (or odd-even) method, you divide the test into two halves (either first half vs. second half, or odd-numbered vs. even-numbered items), score each half separately, and then compare the two half-test scores.

3.1.3.1.2. The Kuder-Richardson method (also known as item-total correlations) compares items within a test with the other items in the same test.
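
The two internal consistency methods above can be sketched in a few lines. This is a minimal illustration under stated assumptions, not a definitive procedure: items are scored 0 (wrong) or 1 (right), the half-test scores are compared with a Pearson correlation, and KR-20 is used as one representative Kuder-Richardson formula. The Spearman-Brown step-up, which estimates full-length reliability from the half-test correlation, is a standard correction that the outline does not mention. The function names and the `responses` data are hypothetical.

```python
from math import sqrt

def pearson_r(x, y):
    """Pearson correlation between two equal-length lists of scores."""
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cross = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ss_x = sum((a - mean_x) ** 2 for a in x)
    ss_y = sum((b - mean_y) ** 2 for b in y)
    return cross / sqrt(ss_x * ss_y)

def split_half(item_scores):
    """Odd-even split: returns (half-test correlation, Spearman-Brown estimate)."""
    odd = [sum(row[0::2]) for row in item_scores]   # items 1, 3, 5, ...
    even = [sum(row[1::2]) for row in item_scores]  # items 2, 4, 6, ...
    r_half = pearson_r(odd, even)
    # Spearman-Brown step-up (assumed correction, not in the outline)
    return r_half, 2 * r_half / (1 + r_half)

def kr20(item_scores):
    """KR-20 for 0/1 items, using population variance of the total scores."""
    n = len(item_scores)
    k = len(item_scores[0])
    totals = [sum(row) for row in item_scores]
    mean_total = sum(totals) / n
    var_total = sum((t - mean_total) ** 2 for t in totals) / n
    # Sum over items of p * q, where p is the proportion answering correctly
    sum_pq = 0.0
    for j in range(k):
        p = sum(row[j] for row in item_scores) / n
        sum_pq += p * (1 - p)
    return (k / (k - 1)) * (1 - sum_pq / var_total)

# Hypothetical data: one row per student, one 0/1 entry per item
responses = [
    [1, 1, 1, 1, 1, 1],
    [1, 1, 1, 1, 1, 0],
    [1, 1, 1, 0, 1, 0],
    [1, 0, 1, 0, 0, 0],
    [1, 0, 0, 0, 0, 0],
    [0, 0, 0, 0, 0, 0],
]

r_half, r_full = split_half(responses)
print(round(r_half, 2), round(r_full, 2), round(kr20(responses), 2))
```

The odd-even split is used here rather than first half vs. second half because it tends to balance item difficulty and fatigue effects across the two halves when items get harder toward the end of a test.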